Summary:

The check in db_bench for table_cache_numshardbits was 0 < bits <= 20, whereas the check in LRUCache was 0 < bits < 20. Changed the two values to match to avoid a crash in db_bench on a null cache.
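
A bounds mismatch like this can be avoided by routing both call sites through one predicate. The sketch below is illustrative only: `kMaxShardBits` and `IsValidShardBits` are hypothetical names, not RocksDB identifiers, and the bound assumes the stricter `0 < bits < 20` form mentioned above is the one both checks settled on.

```cpp
// Hypothetical shared helper; not part of RocksDB.
// Exclusive upper bound, assuming the stricter LRUCache-style check.
constexpr int kMaxShardBits = 20;

// Returns true when `bits` satisfies 0 < bits < kMaxShardBits.
constexpr bool IsValidShardBits(int bits) {
  return bits > 0 && bits < kMaxShardBits;
}
```

With a single predicate, a value such as 20 is rejected at flag-parsing time instead of producing a null cache later.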

Fixes https://github.com/facebook/rocksdb/issues/7393

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8110

Reviewed By: zhichao-cao

Differential Revision: D27353522

Pulled By: mrambacher

fbshipit-source-id: a414bd23b5bde1f071146b34cfca5e35c02de869

Summary:

Ran a spell check over the comments in the include/rocksdb directory and fixed any misspellings.

Some misspelled variable names (like SizeApproximationOptions::include_memtabtles, SstFileMetaData::oldest_ancester_time) were left unchanged, as renaming them would break compilation.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8120

Reviewed By: zhichao-cao

Differential Revision: D27366034

Pulled By: mrambacher

fbshipit-source-id: 6a3f3674890bb6acc751e9c5887a8fbb6adca5df

Summary:

Currently, partitioned filter does not support user-defined timestamp. Disable it for now in ts stress test so that the contrun jobs can proceed.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8127

Test Plan: make crash_test_with_ts

Reviewed By: ajkr

Differential Revision: D27388488

Pulled By: riversand963

fbshipit-source-id: 5ccff18121cb537bd82f2ac072cd25efb625c666

Summary:

Because build_version.cc depends on the library objects (to force its regeneration), the library objects would be built in order to satisfy this rule. Because there is a build_version.d file, it would also need to be generated and included.

Change the ALL_DEPS/FILES to not include build_version.cc (meaning no .d file for it, which is okay since it is generated). Also changed the rule on whether or not to generate DEP files to skip tags.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8097

Reviewed By: ajkr

Differential Revision: D27299815

Pulled By: mrambacher

fbshipit-source-id: 1efbe8a56d062f57ae13b6c2944ad3faf775087e

Summary:

Currently, we only truncate the latest alive WAL files when the DB is opened. If the latest WAL file is empty or was flushed during Open, it's not truncated, since the file will be deleted later in the Open path. However, before that deletion, a new WAL file is created, and if the process crash-loops between the creation of the new WAL file and the deletion of the old one, the preallocated space keeps accumulating and eventually uses up all disk space. To prevent this, always truncate the latest WAL file, even if it's empty or its data was flushed.

Tests:

Add unit tests to db_wal_test

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8122

Reviewed By: riversand963

Differential Revision: D27366132

Pulled By: anand1976

fbshipit-source-id: f923cc03ef033ccb32b140d36c6a63a8152f0e8e

Summary:

For some branches, I see an error during analyze on this code. I do not know why it is not persistent, but this should address the error:

Logic error | Result of operation is garbage or undefined | trace_replay.cc | Replay | 436 | 30

DecodeCFAndKey(trace.payload, &get_payload.cf_id, &get_payload.get_key);

--

433 | } else {

434 | TracerHelper::DecodeGetPayload(&trace, &get_payload);

| 25 ← Calling 'TracerHelper::DecodeGetPayload' →

| 29 ← Returning from 'TracerHelper::DecodeGetPayload' →

435 | }

436 | if (get_payload.cf_id > 0 &&

| 30 ← The left operand of '>' is a garbage value

437 | cf_map_.find(get_payload.cf_id) == cf_map_.end()) {

438 | return Status::Corruption("Invalid Column Family ID.");

439 | }

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8121

Reviewed By: zhichao-cao

Differential Revision: D27366022

Pulled By: mrambacher

fbshipit-source-id: 309c05dbab08cd7ab7f15389e8456f09196f37f6

Summary:

The snapshot structure returned by rocksdb_transaction_get_snapshot is

supposed to be freed by calling rocksdb_free(), so allocate using malloc

rather than new. Fixes https://github.com/facebook/rocksdb/issues/6112
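
To illustrate why the allocator must match the deallocator, here is a minimal sketch. `snapshot_handle_t`, `CreateSnapshotHandle`, and `ReleaseHandle` are hypothetical stand-ins rather than the actual C API types or functions; the only assumption carried over from the text is that the caller releases the structure with a `free()`-based function.

```cpp
#include <cstdlib>
#include <new>

// Hypothetical stand-in for a C-API handle struct (trivially destructible).
struct snapshot_handle_t {
  const void* rep;
};

// Allocates with malloc so that a free()-based release function is valid.
// Releasing memory obtained from `new` with free() is undefined behavior.
snapshot_handle_t* CreateSnapshotHandle(const void* rep) {
  void* mem = std::malloc(sizeof(snapshot_handle_t));
  if (mem == nullptr) {
    return nullptr;
  }
  // Placement new starts the object's lifetime inside the malloc'ed storage.
  return new (mem) snapshot_handle_t{rep};
}

// Mirrors what a free()-based release function would do.
void ReleaseHandle(void* ptr) { std::free(ptr); }
```

Because the struct is trivially destructible, no destructor call is needed before `std::free`.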

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8114

Reviewed By: akankshamahajan15

Differential Revision: D27362923

Pulled By: anand1976

fbshipit-source-id: e93a8b1ffe26dafbe22529907f72b796ae971214

Summary:

The patch adds a resource management/RAII class called `ThreadGuard`,

which can be used to ensure that the managed thread is joined when the

`ThreadGuard` is destroyed, regardless of whether it is due to the

object going out of scope, an early return, an exception etc. This is

important because if an `std::thread` object is destroyed without having

been joined (or detached) first, the process is aborted (via

`std::terminate`).
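
The idea can be sketched as follows. This is not the `ThreadGuard` class from the patch, whose interface is not shown here; `JoiningThreadGuard` and `RunWorkerAndReturn` are hypothetical names for a minimal version of the same RAII pattern.

```cpp
#include <atomic>
#include <thread>
#include <utility>

// Minimal RAII wrapper: joins the managed thread when the guard is destroyed.
class JoiningThreadGuard {
 public:
  explicit JoiningThreadGuard(std::thread&& t) : thread_(std::move(t)) {}

  // Non-copyable: exactly one guard owns the thread.
  JoiningThreadGuard(const JoiningThreadGuard&) = delete;
  JoiningThreadGuard& operator=(const JoiningThreadGuard&) = delete;

  ~JoiningThreadGuard() {
    if (thread_.joinable()) {
      thread_.join();
    }
  }

 private:
  std::thread thread_;
};

// Usage: the worker is joined when `guard` leaves scope, so the value
// it stored is visible afterwards.
int RunWorkerAndReturn() {
  std::atomic<int> counter{0};
  {
    JoiningThreadGuard guard(std::thread([&counter] { counter.store(42); }));
    // An early return or an exception here would still join the thread.
  }
  return counter.load();
}
```

Since the join happens in the destructor, a failing assertion that returns early from the enclosing scope no longer leaves a joinable `std::thread` behind.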

For now, `ThreadGuard` is only used in the test case

`ExternalSSTFileTest.PickedLevelBug`; however, it could come in handy

elsewhere in the codebase as well (both in test code and "real" code).

Case in point: in the `PickedLevelBug` test case, with the earlier code we

could end up in the above situation when the following assertion (which is

before the threads are joined) is triggered:

```

ASSERT_FALSE(bg_compact_started.load());

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8112

Test Plan:

```

make check

gtest-parallel --repeat=10000 ./external_sst_file_test --gtest_filter="*PickedLevelBug"

```

Reviewed By: riversand963

Differential Revision: D27343185

Pulled By: ltamasi

fbshipit-source-id: 2a8c3aa68bc78cc03ec0dbae909fb25c2cd15c69

Summary:

There is a bug in the current code base, introduced in https://github.com/facebook/rocksdb/issues/8049: the case where only the SST file write hits an IO error is still set as a hard error. Fix it by removing that logic.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8107

Test Plan: make check, error_handler_fs_test

Reviewed By: anand1976

Differential Revision: D27321422

Pulled By: zhichao-cao

fbshipit-source-id: c014afc1553ca66b655e3bbf9d0bf6eb417ccf94

Summary:

Previously, compression sampling applied only to block-based tables generated by flush. This restriction was undocumented and blocked a new use case. Now compression sampling applies to all block-based tables we generate when it is enabled.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8105

Test Plan: new unit test

Reviewed By: riversand963

Differential Revision: D27317275

Pulled By: ajkr

fbshipit-source-id: cd9fcc5178d6515e8cb59c6facb5ac01893cb5b0

Summary:

`strerror()` is not thread-safe, so use `strerror_r()` instead. Its API differs across platforms, so the code from 0deef031cb/folly/String.cpp (L457) is used.
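
One common way to cope with the two `strerror_r()` signatures (XSI returns `int`, GNU returns `char*`) is to let overload resolution pick the right handler. The sketch below assumes a POSIX platform; it is not the code from the patch or from folly, and `StrerrorResult` and `ErrnoToString` are hypothetical names.

```cpp
#include <string.h>

#include <cerrno>
#include <string>

// XSI-compliant strerror_r returns int: 0 on success, message written to buf.
inline std::string StrerrorResult(int rc, const char* buf, int err) {
  return rc == 0 ? std::string(buf) : "Unknown error " + std::to_string(err);
}

// GNU-specific strerror_r returns char*, which may or may not point at buf.
inline std::string StrerrorResult(const char* msg, const char* /*buf*/,
                                  int /*err*/) {
  return std::string(msg);
}

// Thread-safe errno-to-string conversion using a caller-owned buffer.
std::string ErrnoToString(int err) {
  char buf[256];
  buf[0] = '\0';
  // Overload resolution selects the handler matching this platform's
  // strerror_r return type, so no preprocessor checks are needed here.
  return StrerrorResult(strerror_r(err, buf, sizeof(buf)), buf, err);
}
```

The buffer lives on the caller's stack, so concurrent calls from different threads do not share state.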

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8087

Reviewed By: mrambacher

Differential Revision: D27267151

Pulled By: jay-zhuang

fbshipit-source-id: 4b8856d1ec069d5f239b764750682c56e5be9ddb

Summary:

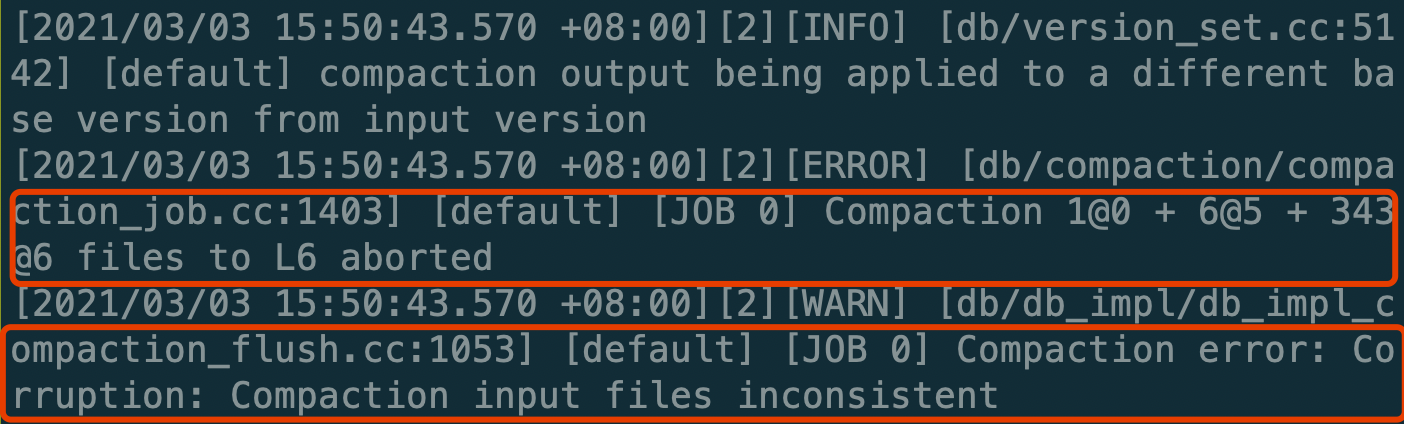

When running CompactFiles on files from multiple levels (num_level > 2) with L0 included, the compaction fails as shown above.

The reason is that `VerifyCompactionFileConsistency` checks that the levels between L0 and the base level are empty, but it wrongly treats a compaction triggered by `CompactFiles` as an L0 -> base level compaction.

The condition was committed several years ago and is no longer correct:

```c++

if (vstorage->compaction_style_ == kCompactionStyleLevel &&

c->start_level() == 0 && c->num_input_levels() > 2U)

```

So this PR just deletes the incorrect check.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8024

Test Plan: make check

Reviewed By: jay-zhuang

Differential Revision: D26907060

Pulled By: ajkr

fbshipit-source-id: 538cef32faf464cd422e3f8de236ea3e58880c2b

Summary:

Improved handling of -bits_per_key other than 10, but at least

the OptimizeForMemory test is simply not designed for generally handling

other settings. (ribbon_test does have a statistical framework for this

kind of testing, but it's not important to do the same for Bloom right

now.)

Closes https://github.com/facebook/rocksdb/issues/7019

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8093

Test Plan: for I in `seq 1 20`; do ./bloom_test --gtest_filter=-*OptimizeForMemory* --bits_per_key=$I &> /dev/null || echo FAILED; done

Reviewed By: mrambacher

Differential Revision: D27275875

Pulled By: pdillinger

fbshipit-source-id: 7362e8ac2c41ea11f639412e4f30c8b375f04388

Summary:

Fix a race condition in DBSSTTest.DBWithMaxSpaceAllowedWithBlobFiles: the background flush thread updates delete_blob_file, but in the test thread Flush() may already have completed after getting bg_error, so delete_blob_file remains false.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8092

Test Plan: Ran the ASAN job a few times on CircleCI

Reviewed By: riversand963

Differential Revision: D27275815

Pulled By: akankshamahajan15

fbshipit-source-id: 2939ad1671403881573bbe07c71aa474c5019130

Summary:

As title. All core db implementations should stay in db_impl.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8082

Test Plan: make check

Reviewed By: ajkr

Differential Revision: D27211442

Pulled By: riversand963

fbshipit-source-id: e0953fde75064740e899aaff7989ff033b7f5232

Summary:

Fix the following error while running `make crash_test`

```

Traceback (most recent call last):

File "tools/db_crashtest.py", line 705, in <module>

main()

File "tools/db_crashtest.py", line 696, in main

blackbox_crash_main(args, unknown_args)

File "tools/db_crashtest.py", line 479, in blackbox_crash_main

+ list({'db': dbname}.items())), unknown_args)

File "tools/db_crashtest.py", line 414, in gen_cmd

finalzied_params = finalize_and_sanitize(params)

File "tools/db_crashtest.py", line 331, in finalize_and_sanitize

dest_params.get("user_timestamp_size") > 0):

TypeError: '>' not supported between instances of 'NoneType' and 'int'

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8091

Test Plan: make crash_test

Reviewed By: ltamasi

Differential Revision: D27268276

Pulled By: riversand963

fbshipit-source-id: ed2873b9587ecc51e24abc35ef2bd3d91fb1ed1b

Summary:

This is a small fix to what I think is a typo in two comments in `DBOptionsInterface.java`. If it was not an error, feel free to close.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8086

Reviewed By: ajkr

Differential Revision: D27260488

Pulled By: mrambacher

fbshipit-source-id: 469daadaf6039d5b5187132b8e0c7c3672842f21

Summary:

As title.

Always specify namespace::symbol_name...

Test plan

CircleCI and other CI results

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8090

Reviewed By: ltamasi

Differential Revision: D27256130

Pulled By: riversand963

fbshipit-source-id: b9b9ae2b3a8b4a16f0384292e71c6aecca93c570

Summary:

Add some basic tests for user-defined timestamp to db_stress. Currently,

read with timestamp always tries to read using the current timestamp.

Due to the per-key timestamp-sequence ordering constraint, we only add timestamp-

related tests to the `NonBatchedOpsStressTest` since this test serializes accesses

to the same key and uses a file to cross-check data correctness.

The timestamp feature is not supported in a number of components, e.g. Merge, SingleDelete,

DeleteRange, CompactionFilter, Readonly instance, secondary instance, SST file ingestion, transaction,

etc. Therefore, db_stress should exit if user enables both timestamp and these features at the same

time. The (currently) incompatible features can be found in

`CheckAndSetOptionsForUserTimestamp`.

This PR also fixes a bug triggered when timestamp is enabled together with

`index_type=kBinarySearchWithFirstKey`. This bug fix will also be in another separate PR

with more unit test coverage. Fixing it here because I do not want to exclude the index type

from crash test.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8061

Test Plan: make crash_test_with_ts

Reviewed By: jay-zhuang

Differential Revision: D27056282

Pulled By: riversand963

fbshipit-source-id: c3e00ad1023fdb9ebbdf9601ec18270c5e2925a9

Summary:

Since our stress/crash tests by default generate values of size 8, 16, or 24,

it does not make much sense to set `min_blob_size` to 256. The patch

updates the set of potential `min_blob_size` values in the crash test

script and in `db_stress` where it might be set dynamically using

`SetOptions`.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8085

Test Plan: Ran `make check` and tried the crash test script.

Reviewed By: riversand963

Differential Revision: D27238620

Pulled By: ltamasi

fbshipit-source-id: 4a96f9944b1ed9220d3045c5ab0b34c49009aeee

Summary:

The implementations of TransactionDB::WrapDB() and

TransactionDB::WrapStackableDB() are almost identical, except for the

type of the first argument `db`. This PR adds a new template function in

anonymous namespace, and calls it in the above two functions.
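
The refactoring pattern described here can be sketched as below. All types and function names (`PlainDb`, `StackedDb`, `Wrapped`, `WrapAnother`, `WrapPlain`, `WrapStacked`) are hypothetical stand-ins chosen for illustration; they do not reproduce the real signatures.

```cpp
#include <memory>
#include <string>

// Hypothetical stand-ins for the two argument types that differ.
struct PlainDb {
  std::string name;
};
struct StackedDb {
  std::string name;
};

// Hypothetical result type.
struct Wrapped {
  std::string description;
};

namespace {
// Shared implementation, private to this translation unit.
// The only thing that varies between callers is the type of `db`.
template <typename DbType>
std::unique_ptr<Wrapped> WrapAnother(const DbType& db,
                                     const std::string& kind) {
  return std::make_unique<Wrapped>(Wrapped{kind + ":" + db.name});
}
}  // namespace

// The two public entry points become thin forwarders.
std::unique_ptr<Wrapped> WrapPlain(const PlainDb& db) {
  return WrapAnother(db, "plain");
}
std::unique_ptr<Wrapped> WrapStacked(const StackedDb& db) {
  return WrapAnother(db, "stacked");
}
```

Keeping the template in an anonymous namespace gives it internal linkage, so it does not become part of the public interface.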

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8079

Test Plan: make check

Reviewed By: lth

Differential Revision: D27184575

Pulled By: riversand963

fbshipit-source-id: f2855a6db3a7e897d0d611f7050ca4b696c56a7a

Summary:

This does not add any new public APIs or published

functionality, but adds the ability to read and use (and in tests,

write) backups with a new meta file schema, based on the old schema

but not forward-compatible (before this change). The new schema enables

some capabilities not in the old:

* Explicit versioning, so that users get clean error messages the next

time we want to break forward compatibility.

* Ignoring unrecognized fields (with warning), so that new non-critical

features can be added without breaking forward compatibility.

* Rejecting future "non-ignorable" fields, so that new features critical

to some use-cases could potentially be added outside of linear schema

versions, with broken forward compatibility.

* Fields at the end of the meta file, such as for checksum of the meta

file's contents (up to that point)

* New optional 'size' field for each file, which is checked when present

* Optionally omitting 'crc32' field, so that we aren't required to have

a crc32c checksum for files to take a backup. (E.g. to support backup

via hard links and to better support file custom checksums.)

Because we do not have a JSON parser and to share code, the new schema

is simply derived from the old schema.

BackupEngine code is updated to allow missing checksums in some places,

and to make that easier, `has_checksum` and `verify_checksum_after_work`

are eliminated. Empty `checksum_hex` indicates checksum is unknown. I'm

not too afraid of regressing on data integrity, because

(a) we have pretty good test coverage of corruption detection in backups, and

(b) we are increasingly relying on the DB itself for data integrity rather than

it being an exclusive feature of backups.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8069

Test Plan:

new unit tests, added to crash test (some local run with

boosted backup probability)

Reviewed By: ajkr

Differential Revision: D27139824

Pulled By: pdillinger

fbshipit-source-id: 9e0e4decfb42bb84783d64d2d246456d97e8e8c5

Summary:

Add the new Append and PositionedAppend APIs to the Env WritableFile. Users can now benefit from the write checksum handoff API when using the legacy Env classes. FileSystem already implements the checksum handoff API.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8071

Test Plan: make check, added new unit test.

Reviewed By: anand1976

Differential Revision: D27177043

Pulled By: zhichao-cao

fbshipit-source-id: 430c8331fc81099fa6d00f4fff703b68b9e8080e

Summary:

Currently, a few ldb commands do not check the execution result of

database operations. This PR checks the execution results and tries to

improve the error reporting.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8072

Test Plan:

```

make check

```

and

```

ASSERT_STATUS_CHECKED=1 make -j20 ldb

python tools/ldb_test.py

```

Reviewed By: zhichao-cao

Differential Revision: D27152466

Pulled By: riversand963

fbshipit-source-id: b94220496a4b3591b61c1d350f665860a6579f30

Summary:

Previously, if the WAL was used, every retryable IO error was treated as a hard error, so writes were stalled. In this PR, retryable IO errors from WAL sync are separated from SST file flush IO errors. If WAL sync succeeds and a retryable IO error happens only during SST flush, the error is mapped to a soft error, so the user can continue inserting into the memtable and appending to the WAL.

Also resolve the bug where, if WAL sync fails, the memtable status is not rolled back because PickMemtable is called earlier than SyncClosedLog is called and checked.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8049

Test Plan: added new unit test, make check

Reviewed By: anand1976

Differential Revision: D26965529

Pulled By: zhichao-cao

fbshipit-source-id: f5fecb66602212523c92ee49d7edcb6065982410

Summary:

WriteController had a number of issues:

* It could introduce a delay of 1ms even if the write rate never exceeded the

configured delayed_write_rate.

* The DB-wide delayed_write_rate could be exceeded in a number of ways

with multiple column families:

* Wiping all pending delay "debts" when another column family joins

the delay with GetDelayToken().

* Resetting last_refill_time_ to (now + sleep amount) means each

column family can write with delayed_write_rate for large writes.

* Updating bytes_left_ for a partial refill without updating

last_refill_time_ would essentially give out random bonuses,

especially to medium-sized writes.

Now the code is much simpler, with these issues fixed. See comments in

the new code and new (replacement) tests.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8064

Test Plan: new tests, better than old tests

Reviewed By: mrambacher

Differential Revision: D27064936

Pulled By: pdillinger

fbshipit-source-id: 497c23fe6819340b8f3d440bd634d8a2bc47323f

Summary:

Add statistics and info log for the error handler: counters for bg error, bg io error, bg retryable io error, auto resume, auto resume total retry, and auto resume success; and a histogram for the auto resume retry count in each recovery call.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8050

Test Plan: make check and add test to error_handler_fs_test

Reviewed By: anand1976

Differential Revision: D26990565

Pulled By: zhichao-cao

fbshipit-source-id: 49f71e8ea4e9db8b189943976404205b56ab883f

Summary:

Extend support to track blob files in SstFileManager. This PR notifies SstFileManager whenever a new blob file is created (via OnAddFile) and whenever an obsolete blob file is deleted (via OnDeleteFile), and deletes files via ScheduleFileDeletion.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8037

Test Plan: Add new unit tests

Reviewed By: ltamasi

Differential Revision: D26891237

Pulled By: akankshamahajan15

fbshipit-source-id: 04c69ccfda2a73782fd5c51982dae58dd11979b6

Summary:

Support getUsage and getPinnedUsage in the Java API for Cache.

Also fix a typo in LRUCacheTest.java where the highPriPoolRatio was not valid (it was set to 5; I guess 0.05 was meant).

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7925

Reviewed By: mrambacher

Differential Revision: D26900241

Pulled By: ajkr

fbshipit-source-id: 735d1e40a16fa8919c89c7c7154ba7f81208ec33

Summary:

The new options are:

* compact0 - compact L0 into L1 using one thread

* compact1 - compact L1 into L2 using one thread

* flush - flush memtable

* waitforcompaction - wait for compaction to finish

These are useful for reproducible benchmarks to help get the LSM tree shape

into a deterministic state. I wrote about this at:

http://smalldatum.blogspot.com/2021/02/read-only-benchmarks-with-lsm-are.html

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8027

Reviewed By: riversand963

Differential Revision: D27053861

Pulled By: ajkr

fbshipit-source-id: 1646f35584a3db03740fbeb47d91c3f00fb35d6e

Summary:

Fixes 3 minor Javadoc copy-paste errors in the `RocksDB#newIterator()` and `Transaction#getIterator()` variants that take a column family handle but are talking about iterating over "the database" or "the default column family".

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8034

Reviewed By: jay-zhuang

Differential Revision: D26877667

Pulled By: mrambacher

fbshipit-source-id: 95dd95b667c496e389f221acc9a91b340e4b63bf

Summary:

These classes were wrappers of Env that provided only extensions to the FileSystem functionality. Changed the classes to be FileSystems and the wrappers to be of the CompositeEnvWrapper.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7968

Reviewed By: anand1976

Differential Revision: D26900253

Pulled By: mrambacher

fbshipit-source-id: 94001d8024a3c54a1c11adadca2bac66c3af2a77

Summary:

When timestamp is enabled, key comparison should take this into account.

In `BlockBasedTableReader::Get()`, `BlockBasedTableReader::MultiGet()`,

assume the target key is `key`, and the timestamp upper bound is `ts`.

The highest key in current block is (key, ts1), while the lowest key in next

block is (key, ts2).

If

```

ts1 > ts > ts2

```

then

```

(key, ts1) < (key, ts) < (key, ts2)

```

It can be shown that if `Compare()` is used, then we will mistakenly skip the next

block. Instead, we should use `CompareWithoutTimestamp()`.
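
The ordering above can be reproduced with a small model. This is a sketch under assumptions: `TsKey`, `CompareFull`, and `CompareIgnoringTimestamp` are hypothetical stand-ins for the comparator's `Compare()` and `CompareWithoutTimestamp()`, and the rule that larger timestamps sort first for the same user key is inferred from the inequality in the text.

```cpp
#include <cstdint>
#include <string>

// Hypothetical model of a user key with an attached timestamp.
struct TsKey {
  std::string user_key;
  uint64_t ts;
};

// Full comparison: user key ascending, then timestamp descending
// (assumed from "ts1 > ts > ts2 implies (key, ts1) < (key, ts) < (key, ts2)").
int CompareFull(const TsKey& a, const TsKey& b) {
  const int r = a.user_key.compare(b.user_key);
  if (r != 0) {
    return r < 0 ? -1 : 1;
  }
  if (a.ts > b.ts) {
    return -1;
  }
  if (a.ts < b.ts) {
    return 1;
  }
  return 0;
}

// Comparison that looks at the user key only and ignores the timestamp.
int CompareIgnoringTimestamp(const TsKey& a, const TsKey& b) {
  const int r = a.user_key.compare(b.user_key);
  return r < 0 ? -1 : (r > 0 ? 1 : 0);
}
```

With ts1 = 30, ts = 20, and ts2 = 10, the full comparison orders the three entries strictly, while the timestamp-free comparison reports them as equal, which is what a lookup needs when deciding whether the same user key may continue in the next block.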

The majority of this PR makes some existing tests in `db_with_timestamp_basic_test.cc`

parameterized so that different index types can be tested. A new unit test is

also added for more coverage.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/8062

Test Plan: make check

Reviewed By: ltamasi

Differential Revision: D27057557

Pulled By: riversand963

fbshipit-source-id: c1062fa7c159ed600a1ad7e461531d52265021f1