Tag:

Branch:

Tree:

b7db7eae26

main

oxigraph-8.1.1

oxigraph-8.3.2

oxigraph-main

${ noResults }

1274 Commits (b7db7eae26f199f0942f60de48e97fd16ecc8959)

| Author | SHA1 | Message | Date |

|---|---|---|---|

|

|

f241d082b6 |

Prevent double caching in the compressed secondary cache (#9747)

Summary: ### **Summary:** When both LRU Cache and CompressedSecondaryCache are configured together, there possibly are some data blocks double cached. **Changes include:** 1. Update IS_PROMOTED to IS_IN_SECONDARY_CACHE to prevent confusions. 2. This PR updates SecondaryCacheResultHandle and use IsErasedFromSecondaryCache to determine whether the handle is erased in the secondary cache. Then, the caller can determine whether to SetIsInSecondaryCache(). 3. Rename LRUSecondaryCache to CompressedSecondaryCache. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9747 Test Plan: **Test Scripts:** 1. Populate a DB. The on disk footprint is 482 MB. The data is set to be 50% compressible, so the total decompressed size is expected to be 964 MB. ./db_bench --benchmarks=fillrandom --num=10000000 -db=/db_bench_1 2. overwrite it to a stable state: ./db_bench --benchmarks=overwrite,stats --num=10000000 -use_existing_db -duration=10 --benchmark_write_rate_limit=2000000 -db=/db_bench_1 4. Run read tests with diffeernt cache setting: T1: ./db_bench --benchmarks=seekrandom,stats --threads=16 --num=10000000 -use_existing_db -duration=120 --benchmark_write_rate_limit=52000000 -use_direct_reads --cache_size=520000000 --statistics -db=/db_bench_1 T2: ./db_bench --benchmarks=seekrandom,stats --threads=16 --num=10000000 -use_existing_db -duration=120 --benchmark_write_rate_limit=52000000 -use_direct_reads --cache_size=320000000 -compressed_secondary_cache_size=400000000 --statistics -use_compressed_secondary_cache -db=/db_bench_1 T3: ./db_bench --benchmarks=seekrandom,stats --threads=16 --num=10000000 -use_existing_db -duration=120 --benchmark_write_rate_limit=52000000 -use_direct_reads --cache_size=520000000 -compressed_secondary_cache_size=400000000 --statistics -use_compressed_secondary_cache -db=/db_bench_1 T4: ./db_bench --benchmarks=seekrandom,stats --threads=16 --num=10000000 -use_existing_db -duration=120 --benchmark_write_rate_limit=52000000 -use_direct_reads --cache_size=20000000 -compressed_secondary_cache_size=500000000 --statistics -use_compressed_secondary_cache -db=/db_bench_1 **Before this PR** | Cache Size | Compressed Secondary Cache Size | Cache Hit Rate | |------------|-------------------------------------|----------------| |520 MB | 0 MB | 85.5% | |320 MB | 400 MB | 96.2% | |520 MB | 400 MB | 98.3% | |20 MB | 500 MB | 98.8% | **Before this PR** | Cache Size | Compressed Secondary Cache Size | Cache Hit Rate | |------------|-------------------------------------|----------------| |520 MB | 0 MB | 85.5% | |320 MB | 400 MB | 99.9% | |520 MB | 400 MB | 99.9% | |20 MB | 500 MB | 99.2% | Reviewed By: anand1976 Differential Revision: D35117499 Pulled By: gitbw95 fbshipit-source-id: ea2657749fc13efebe91a8a1b56bc61d6a224a12 |

4 years ago |

|

|

25e31d1a94 |

tools/db_bench_tool.cc use uint64_t instead of size_t (#9800)

Summary: to fix compilation for 32bit Pull Request resolved: https://github.com/facebook/rocksdb/pull/9800 Reviewed By: riversand963 Differential Revision: D35404447 fbshipit-source-id: 6a1185bb38f3a718357aa120e3b26a1ea77f023d |

4 years ago |

|

|

c3d7e16252 |

Add WAL compression to stress tests (#9811)

Summary: Add the WAL compression feature to the stress test. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9811 Reviewed By: riversand963 Differential Revision: D35414316 Pulled By: anand1976 fbshipit-source-id: 0c17b1ec55679a52f088ad368798b57139bd921a |

4 years ago |

|

|

49623f9c8e |

Account memory of big memory users in BlockBasedTable in global memory limit (#9748)

Summary: **Context:** Through heap profiling, we discovered that `BlockBasedTableReader` objects can accumulate and lead to high memory usage (e.g, `max_open_file = -1`). These memories are currently not saved, not tracked, not constrained and not cache evict-able. As a first step to improve this, similar to https://github.com/facebook/rocksdb/pull/8428, this PR is to track an estimate of `BlockBasedTableReader` object's memory in block cache and fail future creation if the memory usage exceeds the available space of cache at the time of creation. **Summary:** - Approximate big memory users (`BlockBasedTable::Rep` and `TableProperties` )' memory usage in addition to the existing estimated ones (filter block/index block/un-compression dictionary) - Charge all of these memory usages to block cache on `BlockBasedTable::Open()` and release them on `~BlockBasedTable()` as there is no memory usage fluctuation of concern in between - Refactor on CacheReservationManager (and its call-sites) to add concurrent support for BlockBasedTable used in this PR. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9748 Test Plan: - New unit tests - db bench: `OpenDb` : **-0.52% in ms** - Setup `./db_bench -benchmarks=fillseq -db=/dev/shm/testdb -disable_auto_compactions=1 -write_buffer_size=1048576` - Repeated run with pre-change w/o feature and post-change with feature, benchmark `OpenDb`: `./db_bench -benchmarks=readrandom -use_existing_db=1 -db=/dev/shm/testdb -reserve_table_reader_memory=true (remove this when running w/o feature) -file_opening_threads=3 -open_files=-1 -report_open_timing=true| egrep 'OpenDb:'` #-run | (feature-off) avg milliseconds | std milliseconds | (feature-on) avg milliseconds | std milliseconds | change (%) -- | -- | -- | -- | -- | -- 10 | 11.4018 | 5.95173 | 9.47788 | 1.57538 | -16.87382694 20 | 9.23746 | 0.841053 | 9.32377 | 1.14074 | 0.9343477536 40 | 9.0876 | 0.671129 | 9.35053 | 1.11713 | 2.893283155 80 | 9.72514 | 2.28459 | 9.52013 | 1.0894 | -2.108041632 160 | 9.74677 | 0.991234 | 9.84743 | 1.73396 | 1.032752389 320 | 10.7297 | 5.11555 | 10.547 | 1.97692 | **-1.70275031** 640 | 11.7092 | 2.36565 | 11.7869 | 2.69377 | **0.6635807741** - db bench on write with cost to cache in WriteBufferManager (just in case this PR's CRM refactoring accidentally slows down anything in WBM) : `fillseq` : **+0.54% in micros/op** `./db_bench -benchmarks=fillseq -db=/dev/shm/testdb -disable_auto_compactions=1 -cost_write_buffer_to_cache=true -write_buffer_size=10000000000 | egrep 'fillseq'` #-run | (pre-PR) avg micros/op | std micros/op | (post-PR) avg micros/op | std micros/op | change (%) -- | -- | -- | -- | -- | -- 10 | 6.15 | 0.260187 | 6.289 | 0.371192 | 2.260162602 20 | 7.28025 | 0.465402 | 7.37255 | 0.451256 | 1.267813605 40 | 7.06312 | 0.490654 | 7.13803 | 0.478676 | **1.060579461** 80 | 7.14035 | 0.972831 | 7.14196 | 0.92971 | **0.02254791432** - filter bench: `bloom filter`: **-0.78% in ms/key** - ` ./filter_bench -impl=2 -quick -reserve_table_builder_memory=true | grep 'Build avg'` #-run | (pre-PR) avg ns/key | std ns/key | (post-PR) ns/key | std ns/key | change (%) -- | -- | -- | -- | -- | -- 10 | 26.4369 | 0.442182 | 26.3273 | 0.422919 | **-0.4145720565** 20 | 26.4451 | 0.592787 | 26.1419 | 0.62451 | **-1.1465262** - Crash test `python3 tools/db_crashtest.py blackbox --reserve_table_reader_memory=1 --cache_size=1` killed as normal Reviewed By: ajkr Differential Revision: D35136549 Pulled By: hx235 fbshipit-source-id: 146978858d0f900f43f4eb09bfd3e83195e3be28 |

4 years ago |

|

|

6534c6dea4 |

Fix remaining uses of "backupable" (#9792)

Summary: Various renaming and fixes to get rid of remaining uses of "backupable" which is terminology leftover from the original, flawed design of BackupableDB. Now any DB can be backed up, using BackupEngine. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9792 Test Plan: CI Reviewed By: ajkr Differential Revision: D35334386 Pulled By: pdillinger fbshipit-source-id: 2108a42b4575c8cccdfd791c549aae93ec2f3329 |

4 years ago |

|

|

cd59b139fc |

Fix some typos in comments and HISTORY.md (#9798)

Summary: compation --> compaction Pull Request resolved: https://github.com/facebook/rocksdb/pull/9798 Reviewed By: ajkr Differential Revision: D35341611 Pulled By: jay-zhuang fbshipit-source-id: 5ea07527c311de75cade219456b6ee52b23020f6 |

4 years ago |

|

|

bcabee737f |

Improve comments for some files (#9793)

Summary: Update the comments, e.g. fixing typo, formatting, etc. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9793 Reviewed By: jay-zhuang Differential Revision: D35323989 Pulled By: gitbw95 fbshipit-source-id: 4a72fc02b67abaae8be0d1439b68f9967a68052d |

4 years ago |

|

|

bfea9e7c02 |

Add benchmark for GetMergeOperands() (#9785)

Summary: There's an existing benchmark, "getmergeoperands", but it is unconventional in that it has multiple phases and hardcoded setup parameters. This PR adds a different one, "readrandomoperands", that follows the pattern of other benchmarks of having a single phase and taking its configuration from existing flags. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9785 Test Plan: ``` $ ./db_bench -benchmarks=mergerandom -merge_operator=StringAppendOperator -write_buffer_size=1048576 -max_bytes_for_level_base=4194304 -target_file_size_base=1048576 -compression_type=none -disable_auto_compactions=true $ ./db_bench -use_existing_db=true -benchmarks=readrandomoperands -merge_operator=StringAppendOperator -disable_auto_compactions=true -duration=10 ... readrandomoperands : 542.082 micros/op 1844 ops/sec; 0.2 MB/s (11980 of 18999 found) ``` Reviewed By: jay-zhuang Differential Revision: D35290412 Pulled By: ajkr fbshipit-source-id: fb367ca614b128cef844a75f0e5d9dd7c3328d85 |

4 years ago |

|

|

fd66005628 |

Add 'adaptive_readahead' and 'async_io' options to db_stress (#9750)

Summary: Same as title Pull Request resolved: https://github.com/facebook/rocksdb/pull/9750 Test Plan: export CRASH_TEST_EXT_ARGS=" --async_io=1 --adaptive_readahead=1; make -j crash_test Reviewed By: jay-zhuang Differential Revision: D35114326 Pulled By: akankshamahajan15 fbshipit-source-id: 8b05c95be09f7aff6cb9eb757aa20a6520349d45 |

4 years ago |

|

|

60106b91ac |

Add 7.0.fb/7.1.fb to check_format_compatible.sh (#9772)

Summary: As titled Pull Request resolved: https://github.com/facebook/rocksdb/pull/9772 Test Plan: `./tools/check_format_compatible.sh 7.1.fb` (and manually removed 2.7.fb due to pre-existing assertion failure) passed compatibility test Reviewed By: ajkr Differential Revision: D35233659 Pulled By: hx235 fbshipit-source-id: 6b93263a5724d752347e04f1396628804c24a880 |

4 years ago |

|

|

37de4e1d08 |

Correctly set ThreadState::tid (#9757)

Summary: Fixes a bug introduced by me in https://github.com/facebook/rocksdb/pull/9733 That PR added a counter so that the per-thread seeds in ThreadState would be unique even when --benchmarks had more than one test. But it incorrectly used this counter as the value for ThreadState::tid as well. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9757 Test Plan: Confirm that unexpectedly good QPS results on the regression tests return to normal with this fix. I have confirmed that the QPS increase starts with the PR 9733 diff. Reviewed By: jay-zhuang Differential Revision: D35149303 Pulled By: mdcallag fbshipit-source-id: dee5cc36b7faaba6c3be6d6a253d3c2eaad72864 |

4 years ago |

|

|

1a130fa3c1 |

db_bench should use a good seed when --seed is not set or set to 0 (#9740)

Summary: This is for https://github.com/facebook/rocksdb/issues/9737 I have wasted more than a few hours running db_bench benchmarks where --seed was not set and getting better than expected results because cache hit rates are great because multiple invocations of db_bench used the same value for --seed or did not set it, and then all used 0. The result is that all see the same sequence of keys. Others have done the same. The problem is worse in that it is easy to miss and the result is a benchmark with results that are misleading. A good way to avoid this is to set it to the equivalent of gettimeofday() when either --seed is not set or it is set to 0 (the default). With this change the actual seed is printed when it was 0 at process start: Set seed to 1647992570365606 because --seed was 0 Pull Request resolved: https://github.com/facebook/rocksdb/pull/9740 Test Plan: Perf results: ./db_bench --benchmarks=fillseq,readrandom --num=1000000 --reads=4000000 readrandom : 6.469 micros/op 154583 ops/sec; 17.1 MB/s (4000000 of 4000000 found) ./db_bench --benchmarks=fillseq,readrandom --num=1000000 --reads=4000000 --seed=0 readrandom : 6.565 micros/op 152321 ops/sec; 16.9 MB/s (4000000 of 4000000 found) ./db_bench --benchmarks=fillseq,readrandom --num=1000000 --reads=4000000 --seed=1 readrandom : 6.461 micros/op 154777 ops/sec; 17.1 MB/s (4000000 of 4000000 found) ./db_bench --benchmarks=fillseq,readrandom --num=1000000 --reads=4000000 --seed=2 readrandom : 6.525 micros/op 153244 ops/sec; 17.0 MB/s (4000000 of 4000000 found) Reviewed By: jay-zhuang Differential Revision: D35145361 Pulled By: mdcallag fbshipit-source-id: 2b35b153ccec46b27d7c9405997523555fc51267 |

4 years ago |

|

|

409635cb2a |

Add --slow_usecs option to determine when long op message is printed (#9732)

Summary: This adds the --slow_usecs option with a default value of 1M. Operations that take this much time have a message printed when --histogram=1, --stats_interval=0 and --stats_interval_seconds=0. The current code hardwired this to 20,000 usecs and for some stress tests that reduced throughput by 20% or more. This is for https://github.com/facebook/rocksdb/issues/9620 Pull Request resolved: https://github.com/facebook/rocksdb/pull/9732 Test Plan: ./db_bench --benchmarks=fillrandom,readrandom --compression_type=lz4 --slow_usecs=100 --histogram=1 ./db_bench --benchmarks=fillrandom,readrandom --compression_type=lz4 --slow_usecs=100000 --histogram=1 Reviewed By: jay-zhuang Differential Revision: D35121522 Pulled By: mdcallag fbshipit-source-id: daf27f937efd748980545d6395db332712fc078b |

4 years ago |

|

|

f219e3d5d8 |

db_bench should fail on bad values for --compaction_fadvice and --value_size_distribution_type (#9741)

Summary: db_bench quietly parses and ignores bad values for --compaction_fadvice and --value_size_distribution_type I prefer that it fail for them as it does for bad option values in most other cases. Otherwise a benchmark result will be provided for the wrong configuration and the result will be misleading. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9741 Test Plan: These now fail: ./db_bench --compaction_fadvice=noney Unknown compaction fadvice:noney ./db_bench --value_size_distribution_type=norma Cannot parse distribution type 'norma' While correct values continue to work: ./db_bench --value_size_distribution_type=normal Initializing RocksDB Options from the specified file Initializing RocksDB Options from command-line flags ./db_bench --compaction_fadvice=none Initializing RocksDB Options from the specified file Initializing RocksDB Options from command-line flags Reviewed By: siying Differential Revision: D35115973 Pulled By: mdcallag fbshipit-source-id: c2b10de5c2d1ea7c7539e676f5bd556351f5d370 |

4 years ago |

|

|

d583d23d86 |

Avoid seed reuse when --benchmarks has more than one test (#9733)

Summary: When --benchmarks has more than one test then the threads in one benchmark will use the same set of seeds as the threads in the previous benchmark. This diff fixe that. This fixes https://github.com/facebook/rocksdb/issues/9632 Pull Request resolved: https://github.com/facebook/rocksdb/pull/9733 Test Plan: For this command line the block cache is 8GB, so it caches at most 1024 8KB blocks. Note that without this diff the second run of readrandom has a much better response time because seed reuse means the second run reads the same 1000 blocks as the first run and they are cached at that point. But with this diff that does not happen. ./db_bench --benchmarks=fillseq,flush,compact0,waitforcompaction,levelstats,readrandom,readrandom --compression_type=zlib --num=10000000 --reads=1000 --block_size=8192 ... ``` Level Files Size(MB) -------------------- 0 0 0 1 11 238 2 9 253 3 0 0 4 0 0 5 0 0 6 0 0 ``` --- perf results without this diff DB path: [/tmp/rocksdbtest-2260/dbbench] readrandom : 46.212 micros/op 21618 ops/sec; 2.4 MB/s (1000 of 1000 found) DB path: [/tmp/rocksdbtest-2260/dbbench] readrandom : 21.963 micros/op 45450 ops/sec; 5.0 MB/s (1000 of 1000 found) --- perf results with this diff DB path: [/tmp/rocksdbtest-2260/dbbench] readrandom : 47.213 micros/op 21126 ops/sec; 2.3 MB/s (1000 of 1000 found) DB path: [/tmp/rocksdbtest-2260/dbbench] readrandom : 42.880 micros/op 23299 ops/sec; 2.6 MB/s (1000 of 1000 found) Reviewed By: jay-zhuang Differential Revision: D35089763 Pulled By: mdcallag fbshipit-source-id: 1b50143a07afe876b8c8e5fa50dd94a8ce57fc6b |

4 years ago |

|

|

c18c4a081c |

Add new determinators for multiops transactions stress test (#9708)

Summary: Add determinators for multiops transactions stress test with write-committed and write-prepared policies. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9708 Test Plan: Internal CI Reviewed By: jay-zhuang Differential Revision: D34967263 Pulled By: riversand963 fbshipit-source-id: 170a0842d56dccb6ed6bc0c5adfd33849acd6b31 |

4 years ago |

|

|

6904fd0c86 |

db_bench should fail when an option uses an invalid compression type (#9729)

Summary: This changes db_bench to fail at startup for invalid compression types. It had been changing them to Snappy. For other invalid options it fails at startup. This is for https://github.com/facebook/rocksdb/issues/9621 Pull Request resolved: https://github.com/facebook/rocksdb/pull/9729 Test Plan: This continues to work: ./db_bench --benchmarks=fillrandom --compression_type=lz4 This now fails rather than changing the compression type to Snappy ./db_bench --benchmarks=fillrandom --compression_type=lz44 Cannot parse compression type 'lz44' Reviewed By: jay-zhuang Differential Revision: D35081323 Pulled By: mdcallag fbshipit-source-id: 9b38c835abddce11aa7feb235df63f53cf829981 |

4 years ago |

|

|

d71e5a5beb |

Add number of running flushes & compactions to --stats_per_interval output (#9726)

Summary: This is for https://github.com/facebook/rocksdb/issues/9709 and add two lines to the end of DB Stats for num-running-compactions and num-running-flushes. For example ... ** DB Stats ** Uptime(secs): 6.0 total, 1.0 interval Cumulative writes: 915K writes, 915K keys, 915K commit groups, 1.0 writes per commit group, ingest: 0.11 GB, 18.95 MB/s Cumulative WAL: 915K writes, 0 syncs, 915000.00 writes per sync, written: 0.11 GB, 18.95 MB/s Cumulative stall: 00:00:0.000 H:M:S, 0.0 percent Interval writes: 133K writes, 133K keys, 133K commit groups, 1.0 writes per commit group, ingest: 16.62 MB, 16.53 MB/s Interval WAL: 133K writes, 0 syncs, 133000.00 writes per sync, written: 0.02 GB, 16.53 MB/s Interval stall: 00:00:0.000 H:M:S, 0.0 percent num-running-compactions: 0 num-running-flushes: 0 Pull Request resolved: https://github.com/facebook/rocksdb/pull/9726 Reviewed By: jay-zhuang Differential Revision: D35066759 Pulled By: mdcallag fbshipit-source-id: c161fadd3c15c5aa715a820dab6bfedb46dc099b |

4 years ago |

|

|

f07eec1bf8 |

Add async_io read option in db_bench (#9735)

Summary: Add async_io Read option in db_bench Pull Request resolved: https://github.com/facebook/rocksdb/pull/9735 Test Plan: ./db_bench -use_existing_db=true -db=/tmp/prefix_scan_prefetch_main -benchmarks="seekrandom" -key_size=32 -value_size=512 -num=5000000 -use_direct_reads=true -seek_nexts=327680 -duration=120 -ops_between_duration_checks=1 -async_io=1 Reviewed By: riversand963 Differential Revision: D35058482 Pulled By: akankshamahajan15 fbshipit-source-id: 1522b638c79f6d85bb7408c67f6ab76dbabeeee7 |

4 years ago |

|

|

63a284a6ad |

For db_bench --benchmarks=fillseq with --num_multi_db load databases … (#9713)

Summary: …in order This fixes https://github.com/facebook/rocksdb/issues/9650 For db_bench --benchmarks=fillseq --num_multi_db=X it loads databases in sequence rather than randomly choosing a database per Put. The benefits are: 1) avoids long delays between flushing memtables 2) avoids flushing memtables for all of them at the same point in time 3) puts same number of keys per database so that query tests will find keys as expected Pull Request resolved: https://github.com/facebook/rocksdb/pull/9713 Test Plan: Using db_bench.1 without the change and db_bench.2 with the change: for i in 1 2; do rm -rf /data/m/rx/* ; time ./db_bench.$i --db=/data/m/rx --benchmarks=fillseq --num_multi_db=4 --num=10000000; du -hs /data/m/rx ; done --- without the change fillseq : 3.188 micros/op 313682 ops/sec; 34.7 MB/s real 2m7.787s user 1m52.776s sys 0m46.549s 2.7G /data/m/rx --- with the change fillseq : 3.149 micros/op 317563 ops/sec; 35.1 MB/s real 2m6.196s user 1m51.482s sys 0m46.003s 2.7G /data/m/rx Also, temporarily added a printf to confirm that the code switches to the next database at the right time ZZ switch to db 1 at 10000000 ZZ switch to db 2 at 20000000 ZZ switch to db 3 at 30000000 for i in 1 2; do rm -rf /data/m/rx/* ; time ./db_bench.$i --db=/data/m/rx --benchmarks=fillseq,readrandom --num_multi_db=4 --num=100000; du -hs /data/m/rx ; done --- without the change, smaller database, note that not all keys are found by readrandom because databases have < and > --num keys fillseq : 3.176 micros/op 314805 ops/sec; 34.8 MB/s readrandom : 1.913 micros/op 522616 ops/sec; 57.7 MB/s (99873 of 100000 found) --- with the change, smaller database, note that all keys are found by readrandom fillseq : 3.110 micros/op 321566 ops/sec; 35.6 MB/s readrandom : 1.714 micros/op 583257 ops/sec; 64.5 MB/s (100000 of 100000 found) Reviewed By: jay-zhuang Differential Revision: D35030168 Pulled By: mdcallag fbshipit-source-id: 2a18c4ec571d954cf5a57b00a11802a3608823ee |

4 years ago |

|

|

1ca1562e35 |

Make mixgraph easier to use (#9711)

Summary: Changes: * improves monitoring by displaying average size of a Put value and average scan length * forces the minimum value size to be 10. Before this it was 0 if you didn't set the distribution parameters. * uses reasonable defaults for the distribution parameters that determine value size and scan length * includes seeks in "reads ... found" message, before this they were missing This is for https://github.com/facebook/rocksdb/issues/9672 Pull Request resolved: https://github.com/facebook/rocksdb/pull/9711 Test Plan: Before this change: ./db_bench --benchmarks=fillseq,mixgraph --mix_get_ratio=50 --mix_put_ratio=25 --mix_seek_ratio=25 --num=100000 --value_k=0.2615 --value_sigma=25.45 --iter_k=2.517 --iter_sigma=14.236 fillseq : 4.289 micros/op 233138 ops/sec; 25.8 MB/s mixgraph : 18.461 micros/op 54166 ops/sec; 755.0 MB/s ( Gets:50164 Puts:24919 Seek:24917 of 50164 in 75081 found) After this change: ./db_bench --benchmarks=fillseq,mixgraph --mix_get_ratio=50 --mix_put_ratio=25 --mix_seek_ratio=25 --num=100000 --value_k=0.2615 --value_sigma=25.45 --iter_k=2.517 --iter_sigma=14.236 fillseq : 3.974 micros/op 251553 ops/sec; 27.8 MB/s mixgraph : 16.722 micros/op 59795 ops/sec; 833.5 MB/s ( Gets:50164 Puts:24919 Seek:24917, reads 75081 in 75081 found, avg size: 36.0 value, 504.9 scan) Reviewed By: jay-zhuang Differential Revision: D35030190 Pulled By: mdcallag fbshipit-source-id: d8f555f28d869f752ddb674a524108884511b151 |

4 years ago |

|

|

a8a422e962 |

Add manifest fix-up utility for file temperatures (#9683)

Summary: The goal of this change is to allow changes to the "current" (in FileSystem) file temperatures to feed back into DB metadata, so that they can inform decisions and stats reporting. In part because of modular code factoring, it doesn't seem easy to do this automagically, where opening an SST file and observing current Temperature different from expected would trigger a change in metadata and DB manifest write (essentially giving the deep read path access to the write path). It is also difficult to do this while the DB is open because of the limitations of LogAndApply. This change allows updating file temperature metadata on a closed DB using an experimental utility function UpdateManifestForFilesState() or `ldb update_manifest --update_temperatures`. This should suffice for "migration" scenarios where outside tooling has placed or re-arranged DB files into a (different) tiered configuration without going through RocksDB itself (currently, only compaction can change temperature metadata). Some details: * Refactored and added unit test for `ldb unsafe_remove_sst_file` because of shared functionality * Pulled in autovector.h changes from https://github.com/facebook/rocksdb/issues/9546 to fix SuperVersionContext move constructor (related to an older draft of this change) Possible follow-up work: * Support updating manifest with file checksums, such as when a new checksum function is used and want existing DB metadata updated for it. * It's possible that for some repair scenarios, lighter weight than full repair, we might want to support UpdateManifestForFilesState() to modify critical file details like size or checksum using same algorithm. But let's make sure these are differentiated from modifying file details in ways that don't suspect corruption (or require extreme trust). Pull Request resolved: https://github.com/facebook/rocksdb/pull/9683 Test Plan: unit tests added Reviewed By: jay-zhuang Differential Revision: D34798828 Pulled By: pdillinger fbshipit-source-id: cfd83e8fb10761d8c9e7f9c020d68c9106a95554 |

4 years ago |

|

|

cff0d1e8e6 |

New backup meta schema, with file temperatures (#9660)

Summary: The primary goal of this change is to add support for backing up and restoring (applying on restore) file temperature metadata, without committing to either the DB manifest or the FS reported "current" temperatures being exclusive "source of truth". To achieve this goal, we need to add temperature information to backup metadata, which requires updated backup meta schema. Fortunately I prepared for this in https://github.com/facebook/rocksdb/issues/8069, which began forward compatibility in version 6.19.0 for this kind of schema update. (Previously, backup meta schema was not extensible! Making this schema update public will allow some other "nice to have" features like taking backups with hard links, and avoiding crc32c checksum computation when another checksum is already available.) While schema version 2 is newly public, the default schema version is still 1. Until we change the default, users will need to set to 2 to enable features like temperature data backup+restore. New metadata like temperature information will be ignored with a warning in versions before this change and since 6.19.0. The metadata is considered ignorable because a functioning DB can be restored without it. Some detail: * Some renaming because "future schema" is now just public schema 2. * Initialize some atomics in TestFs (linter reported) * Add temperature hint support to SstFileDumper (used by BackupEngine) Pull Request resolved: https://github.com/facebook/rocksdb/pull/9660 Test Plan: related unit test majorly updated for the new functionality, including some shared testing support for tracking temperatures in a FS. Some other tests and testing hooks into production code also updated for making the backup meta schema change public. Reviewed By: ajkr Differential Revision: D34686968 Pulled By: pdillinger fbshipit-source-id: 3ac1fa3e67ee97ca8a5103d79cc87d872c1d862a |

4 years ago |

|

|

5894761056 |

Improve stress test for transactions (#9568)

Summary: Test only, no change to functionality. Extremely low risk of library regression. Update test key generation by maintaining existing and non-existing keys. Update db_crashtest.py to drive multiops_txn stress test for both write-committed and write-prepared. Add a make target 'blackbox_crash_test_with_multiops_txn'. Running the following commands caught the bug exposed in https://github.com/facebook/rocksdb/issues/9571. ``` $rm -rf /tmp/rocksdbtest/* $./db_stress -progress_reports=0 -test_multi_ops_txns -use_txn -clear_column_family_one_in=0 \ -column_families=1 -writepercent=0 -delpercent=0 -delrangepercent=0 -customopspercent=60 \ -readpercent=20 -prefixpercent=0 -iterpercent=20 -reopen=0 -ops_per_thread=1000 -ub_a=10000 \ -ub_c=100 -destroy_db_initially=0 -key_spaces_path=/dev/shm/key_spaces_desc -threads=32 -read_fault_one_in=0 $./db_stress -progress_reports=0 -test_multi_ops_txns -use_txn -clear_column_family_one_in=0 -column_families=1 -writepercent=0 -delpercent=0 -delrangepercent=0 -customopspercent=60 -readpercent=20 \ -prefixpercent=0 -iterpercent=20 -reopen=0 -ops_per_thread=1000 -ub_a=10000 -ub_c=100 -destroy_db_initially=0 \ -key_spaces_path=/dev/shm/key_spaces_desc -threads=32 -read_fault_one_in=0 ``` Running the following command caught a bug which will be fixed in https://github.com/facebook/rocksdb/issues/9648 . ``` $TEST_TMPDIR=/dev/shm make blackbox_crash_test_with_multiops_wc_txn ``` Pull Request resolved: https://github.com/facebook/rocksdb/pull/9568 Reviewed By: jay-zhuang Differential Revision: D34308154 Pulled By: riversand963 fbshipit-source-id: 99ff1b65c19b46c471d2f2d3b47adcd342a1b9e7 |

4 years ago |

|

|

e4c87773e1 |

Reactivate Mempurge feature in crash test. (#9684)

Summary: Set `experimental_mempurge_threshold` back to `lambda: 10.0*random.random()` in crash test, reverting https://github.com/facebook/rocksdb/issues/8958 after fix provided in https://github.com/facebook/rocksdb/issues/9671 . Pull Request resolved: https://github.com/facebook/rocksdb/pull/9684 Reviewed By: pdillinger Differential Revision: D34820257 Pulled By: bjlemaire fbshipit-source-id: 1e5ae8c872c4ac4c4267c990ac5e3e793d77908c |

4 years ago |

|

|

ca0ef54f16 |

Rate-limit automatic WAL flush after each user write (#9607)

Summary: **Context:** WAL flush is currently not rate-limited by `Options::rate_limiter`. This PR is to provide rate-limiting to auto WAL flush, the one that automatically happen after each user write operation (i.e, `Options::manual_wal_flush == false`), by adding `WriteOptions::rate_limiter_options`. Note that we are NOT rate-limiting WAL flush that do NOT automatically happen after each user write, such as `Options::manual_wal_flush == true + manual FlushWAL()` (rate-limiting multiple WAL flushes), for the benefits of: - being consistent with [ReadOptions::rate_limiter_priority](https://github.com/facebook/rocksdb/blob/7.0.fb/include/rocksdb/options.h#L515) - being able to turn off some WAL flush's rate-limiting but not all (e.g, turn off specific the WAL flush of a critical user write like a service's heartbeat) `WriteOptions::rate_limiter_options` only accept `Env::IO_USER` and `Env::IO_TOTAL` currently due to an implementation constraint. - The constraint is that we currently queue parallel writes (including WAL writes) based on FIFO policy which does not factor rate limiter priority into this layer's scheduling. If we allow lower priorities such as `Env::IO_HIGH/MID/LOW` and such writes specified with lower priorities occurs before ones specified with higher priorities (even just by a tiny bit in arrival time), the former would have blocked the latter, leading to a "priority inversion" issue and contradictory to what we promise for rate-limiting priority. Therefore we only allow `Env::IO_USER` and `Env::IO_TOTAL` right now before improving that scheduling. A pre-requisite to this feature is to support operation-level rate limiting in `WritableFileWriter`, which is also included in this PR. **Summary:** - Renamed test suite `DBRateLimiterTest to DBRateLimiterOnReadTest` for adding a new test suite - Accept `rate_limiter_priority` in `WritableFileWriter`'s private and public write functions - Passed `WriteOptions::rate_limiter_options` to `WritableFileWriter` in the path of automatic WAL flush. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9607 Test Plan: - Added new unit test to verify existing flush/compaction rate-limiting does not break, since `DBTest, RateLimitingTest` is disabled and current db-level rate-limiting tests focus on read only (e.g, `db_rate_limiter_test`, `DBTest2, RateLimitedCompactionReads`). - Added new unit test `DBRateLimiterOnWriteWALTest, AutoWalFlush` - `strace -ftt -e trace=write ./db_bench -benchmarks=fillseq -db=/dev/shm/testdb -rate_limit_auto_wal_flush=1 -rate_limiter_bytes_per_sec=15 -rate_limiter_refill_period_us=1000000 -write_buffer_size=100000000 -disable_auto_compactions=1 -num=100` - verified that WAL flush(i.e, system-call _write_) were chunked into 15 bytes and each _write_ was roughly 1 second apart - verified the chunking disappeared when `-rate_limit_auto_wal_flush=0` - crash test: `python3 tools/db_crashtest.py blackbox --disable_wal=0 --rate_limit_auto_wal_flush=1 --rate_limiter_bytes_per_sec=10485760 --interval=10` killed as normal **Benchmarked on flush/compaction to ensure no performance regression:** - compaction with rate-limiting (see table 1, avg over 1280-run): pre-change: **915635 micros/op**; post-change: **907350 micros/op (improved by 0.106%)** ``` #!/bin/bash TEST_TMPDIR=/dev/shm/testdb START=1 NUM_DATA_ENTRY=8 N=10 rm -f compact_bmk_output.txt compact_bmk_output_2.txt dont_care_output.txt for i in $(eval echo "{$START..$NUM_DATA_ENTRY}") do NUM_RUN=$(($N*(2**($i-1)))) for j in $(eval echo "{$START..$NUM_RUN}") do ./db_bench --benchmarks=fillrandom -db=$TEST_TMPDIR -disable_auto_compactions=1 -write_buffer_size=6710886 > dont_care_output.txt && ./db_bench --benchmarks=compact -use_existing_db=1 -db=$TEST_TMPDIR -level0_file_num_compaction_trigger=1 -rate_limiter_bytes_per_sec=100000000 | egrep 'compact' done > compact_bmk_output.txt && awk -v NUM_RUN=$NUM_RUN '{sum+=$3;sum_sqrt+=$3^2}END{print sum/NUM_RUN, sqrt(sum_sqrt/NUM_RUN-(sum/NUM_RUN)^2)}' compact_bmk_output.txt >> compact_bmk_output_2.txt done ``` - compaction w/o rate-limiting (see table 2, avg over 640-run): pre-change: **822197 micros/op**; post-change: **823148 micros/op (regressed by 0.12%)** ``` Same as above script, except that -rate_limiter_bytes_per_sec=0 ``` - flush with rate-limiting (see table 3, avg over 320-run, run on the [patch]( |

4 years ago |

|

|

33742c2a9f |

Remove BlockBasedTableOptions.hash_index_allow_collision (#9454)

Summary: BlockBasedTableOptions.hash_index_allow_collision is already deprecated and has no effect. Delete it for preparing 7.0 release. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9454 Test Plan: Run all existing tests. Reviewed By: ajkr Differential Revision: D33805827 fbshipit-source-id: ed8a436d1d083173ec6aef2a762ba02e1eefdc9d |

4 years ago |

|

|

9ed96703d1 |

Add support for BlobDB to ldb (#9630)

Summary: Add the configuration options and help messages of BlobDB to `ldb` Pull Request resolved: https://github.com/facebook/rocksdb/pull/9630 Test Plan: `python ./tools/ldb_test.py` Reviewed By: ltamasi Differential Revision: D34443176 Pulled By: changneng fbshipit-source-id: 5b3f185cdfc2561e06dd37215c7edfbca07dbe80 |

4 years ago |

|

|

f706a9c199 |

Add a secondary cache implementation based on LRUCache 1 (#9518)

Summary: **Summary:** RocksDB uses a block cache to reduce IO and make queries more efficient. The block cache is based on the LRU algorithm (LRUCache) and keeps objects containing uncompressed data, such as Block, ParsedFullFilterBlock etc. It allows the user to configure a second level cache (rocksdb::SecondaryCache) to extend the primary block cache by holding items evicted from it. Some of the major RocksDB users, like MyRocks, use direct IO and would like to use a primary block cache for uncompressed data and a secondary cache for compressed data. The latter allows us to mitigate the loss of the Linux page cache due to direct IO. This PR includes a concrete implementation of rocksdb::SecondaryCache that integrates with compression libraries such as LZ4 and implements an LRU cache to hold compressed blocks. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9518 Test Plan: In this PR, the lru_secondary_cache_test.cc includes the following tests: 1. The unit tests for the secondary cache with either compression or no compression, such as basic tests, fails tests. 2. The integration tests with both primary cache and this secondary cache . **Follow Up:** 1. Statistics (e.g. compression ratio) will be added in another PR. 2. Once this implementation is ready, I will do some shadow testing and benchmarking with UDB to measure the impact. Reviewed By: anand1976 Differential Revision: D34430930 Pulled By: gitbw95 fbshipit-source-id: 218d78b672a2f914856d8a90ff32f2f5b5043ded |

4 years ago |

|

|

39b0d92153 |

Add record to set WAL compression type if enabled (#9556)

Summary: When WAL compression is enabled, add a record (new record type) to store the compression type to indicate that all subsequent records are compressed. The log reader will store the compression type when this record is encountered and use the type to uncompress the subsequent records. Compress and uncompress to be implemented in subsequent diffs. Enabled WAL compression in some WAL tests to check for regressions. Some tests that rely on offsets have been disabled. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9556 Reviewed By: anand1976 Differential Revision: D34308216 Pulled By: sidroyc fbshipit-source-id: 7f10595e46f3277f1ea2d309fbf95e2e935a8705 |

4 years ago |

|

|

babe56ddba |

Add rate limiter priority to ReadOptions (#9424)

Summary: Users can set the priority for file reads associated with their operation by setting `ReadOptions::rate_limiter_priority` to something other than `Env::IO_TOTAL`. Rate limiting `VerifyChecksum()` and `VerifyFileChecksums()` is the motivation for this PR, so it also includes benchmarks and minor bug fixes to get that working. `RandomAccessFileReader::Read()` already had support for rate limiting compaction reads. I changed that rate limiting to be non-specific to compaction, but rather performed according to the passed in `Env::IOPriority`. Now the compaction read rate limiting is supported by setting `rate_limiter_priority = Env::IO_LOW` on its `ReadOptions`. There is no default value for the new `Env::IOPriority` parameter to `RandomAccessFileReader::Read()`. That means this PR goes through all callers (in some cases multiple layers up the call stack) to find a `ReadOptions` to provide the priority. There are TODOs for cases I believe it would be good to let user control the priority some day (e.g., file footer reads), and no TODO in cases I believe it doesn't matter (e.g., trace file reads). The API doc only lists the missing cases where a file read associated with a provided `ReadOptions` cannot be rate limited. For cases like file ingestion checksum calculation, there is no API to provide `ReadOptions` or `Env::IOPriority`, so I didn't count that as missing. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9424 Test Plan: - new unit tests - new benchmarks on ~50MB database with 1MB/s read rate limit and 100ms refill interval; verified with strace reads are chunked (at 0.1MB per chunk) and spaced roughly 100ms apart. - setup command: `./db_bench -benchmarks=fillrandom,compact -db=/tmp/testdb -target_file_size_base=1048576 -disable_auto_compactions=true -file_checksum=true` - benchmarks command: `strace -ttfe pread64 ./db_bench -benchmarks=verifychecksum,verifyfilechecksums -use_existing_db=true -db=/tmp/testdb -rate_limiter_bytes_per_sec=1048576 -rate_limit_bg_reads=1 -rate_limit_user_ops=true -file_checksum=true` - crash test using IO_USER priority on non-validation reads with https://github.com/facebook/rocksdb/issues/9567 reverted: `python3 tools/db_crashtest.py blackbox --max_key=1000000 --write_buffer_size=524288 --target_file_size_base=524288 --level_compaction_dynamic_level_bytes=true --duration=3600 --rate_limit_bg_reads=true --rate_limit_user_ops=true --rate_limiter_bytes_per_sec=10485760 --interval=10` Reviewed By: hx235 Differential Revision: D33747386 Pulled By: ajkr fbshipit-source-id: a2d985e97912fba8c54763798e04f006ccc56e0c |

4 years ago |

|

|

8286469b9a |

LDB to add --secondary_path to help (#9582)

Summary: Opening DB as seconeary instance has been supported in ldb but it is not mentioned in --help. Mention it there. The part of the help message after the modification: ``` commands MUST specify --db=<full_path_to_db_directory> when necessary commands can optionally specify --env_uri=<uri_of_environment> or --fs_uri=<uri_of_filesystem> if necessary --secondary_path=<secondary_path> to open DB as secondary instance. Operations not supported in secondary instance will fail. ``` Pull Request resolved: https://github.com/facebook/rocksdb/pull/9582 Test Plan: Build and run ldb --help Reviewed By: riversand963 Differential Revision: D34286427 fbshipit-source-id: e56c5290d0548098ab6acc6dde2167f5a64f34f3 |

4 years ago |

|

|

ad2cab8f0c |

minor tweaks to db_crashtest.py settings (#9483)

Summary: I did another pass through running CI jobs. It is uncommon now to see `db_stress` stuck in the setup phase but still happen. One reason was repeatedly reading/verifying checksum on filter blocks when `-cache_index_and_filter_blocks=1` and `-cache_size=1048576`. To address that I increased the cache size. Another reason was having a WAL with many range tombstones and every `db_stress` run using `-avoid_flush_during_recovery=1` (in that scenario, the setup phase spent too much CPU in `rocksdb::MemTable::NewRangeTombstoneIteratorInternal()`). To address that I fixed the `-avoid_flush_during_recovery` setting so it is reevaluated for every `db_stress` run. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9483 Reviewed By: riversand963 Differential Revision: D33922929 Pulled By: ajkr fbshipit-source-id: 0a298ec7c4df6f6b44620233996047a2dc7ee5f3 |

4 years ago |

|

|

ec0b1ff2bd |

Add blob compaction readahead size to the BlobDB benchmark script (#9566)

Summary: Pull Request resolved: https://github.com/facebook/rocksdb/pull/9566 Reviewed By: riversand963 Differential Revision: D34226256 Pulled By: ltamasi fbshipit-source-id: 4374b819e937c35e3a866ba5b5eafba87ff20af3 |

4 years ago |

|

|

479eb1aad6 |

Hide deprecated, inefficient block-based filter from public API (#9535)

Summary: This change removes the ability to configure the deprecated, inefficient block-based filter in the public API. Options that would have enabled it now use "full" (and optionally partitioned) filters. Existing block-based filters can still be read and used, and a "back door" way to build them still exists, for testing and in case of trouble. About the only way this removal would cause an issue for users is if temporary memory for filter construction greatly increases. In HISTORY.md we suggest a few possible mitigations: partitioned filters, smaller SST files, or setting reserve_table_builder_memory=true. Or users who have customized a FilterPolicy using the CreateFilter/KeyMayMatch mechanism removed in https://github.com/facebook/rocksdb/issues/9501 will have to upgrade their code. (It's long past time for people to move to the new builder/reader customization interface.) This change also introduces some internal-use-only configuration strings for testing specific filter implementations while bypassing some compatibility / intelligence logic. This is intended to hint at a path toward making FilterPolicy Customizable, but it also gives us a "back door" way to configure block-based filter. Aside: updated db_bench so that -readonly implies -use_existing_db Pull Request resolved: https://github.com/facebook/rocksdb/pull/9535 Test Plan: Unit tests updated. Specifically, * BlockBasedTableTest.BlockReadCountTest is tweaked to validate the back door configuration interface and ignoring of `use_block_based_builder`. * BlockBasedTableTest.TracingGetTest is migrated from testing block-based filter access pattern to full filter access patter, by re-ordering some things. * Options test (pretty self-explanatory) Performance test - create with `./db_bench -db=/dev/shm/rocksdb1 -bloom_bits=10 -cache_index_and_filter_blocks=1 -benchmarks=fillrandom -num=10000000 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0` with and without `-use_block_based_filter`, which creates a DB with 21 SST files in L0. Read with `./db_bench -db=/dev/shm/rocksdb1 -readonly -bloom_bits=10 -cache_index_and_filter_blocks=1 -benchmarks=readrandom -num=10000000 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0 -duration=30` Without -use_block_based_filter: readrandom 464 ops/sec, 689280 KB DB With -use_block_based_filter: readrandom 169 ops/sec, 690996 KB DB No consistent difference with fillrandom Reviewed By: jay-zhuang Differential Revision: D34153871 Pulled By: pdillinger fbshipit-source-id: 31f4a933c542f8f09aca47fa64aec67832a69738 |

4 years ago |

|

|

9745c68eb1 |

Remove deprecated option new_table_reader_for_compaction_inputs (#9443)

Summary: In RocksDB option new_table_reader_for_compaction_inputs has not effect on Compaction or on the behavior of RocksDB library. Therefore, we are removing it in the upcoming 7.0 release. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9443 Test Plan: CircleCI Reviewed By: ajkr Differential Revision: D33788508 Pulled By: akankshamahajan15 fbshipit-source-id: 324ca6f12bfd019e9bd5e1b0cdac39be5c3cec7d |

4 years ago |

|

|

ddce0c3f11 |

Add releases till 6.29.fb to compatibility check (#9529)

Summary: Add releases till 6.29.fb to compatibility check for forward and backward compatibility Pull Request resolved: https://github.com/facebook/rocksdb/pull/9529 Test Plan: run locally Reviewed By: hx235 Differential Revision: D34086063 Pulled By: akankshamahajan15 fbshipit-source-id: 4ccff513c99cf2d0e41da0b76ab27ffcfdffe7df |

4 years ago |

|

|

036bbab6f7 |

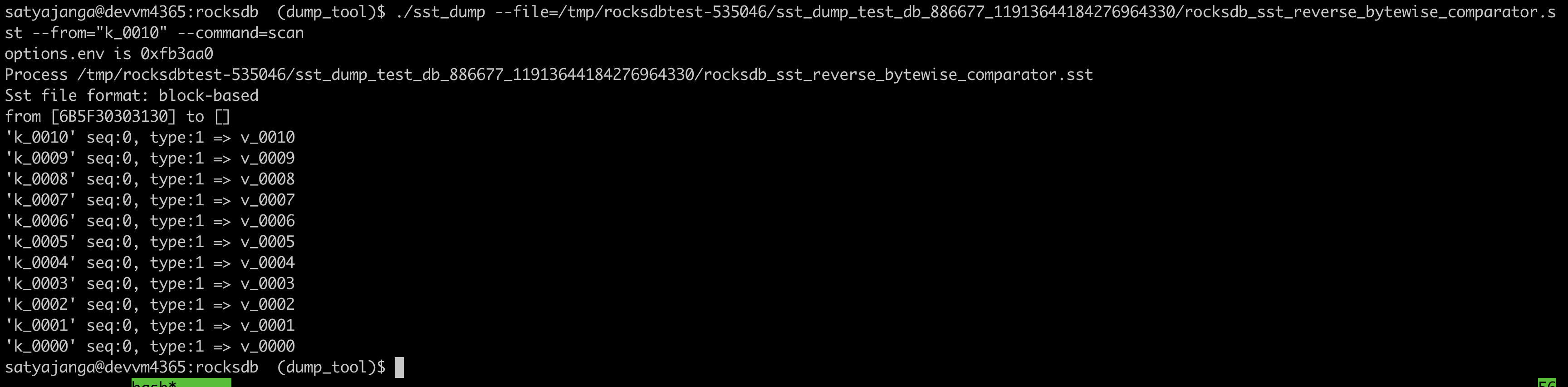

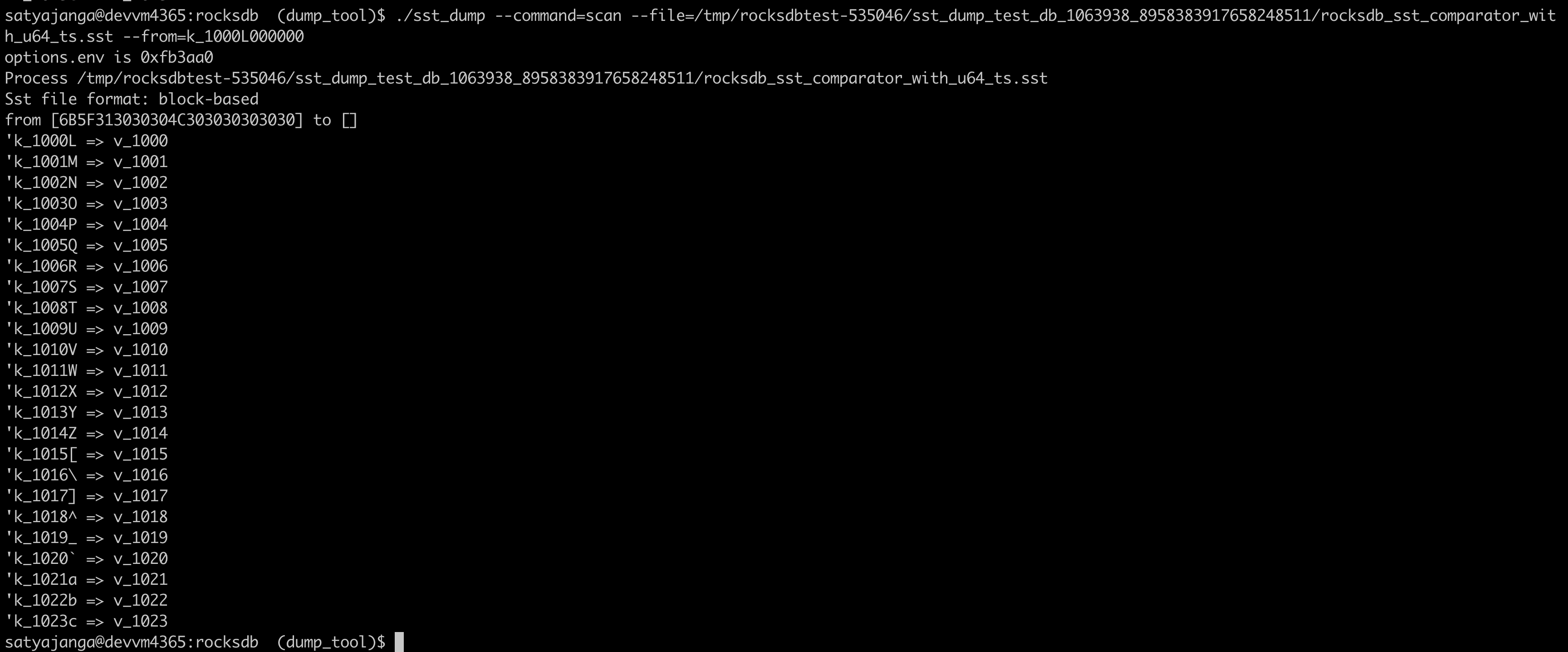

Use the comparator from the sst file table properties in sst_dump_tool (#9491)

Summary: We introduced a new Comparator for timestamp in user keys. In the sst_dump_tool by default we use BytewiseComparator to read sst files. This change allows us to read comparator_name from table properties in meta data block and use it to read. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9491 Test Plan: added unittests for new functionality. make check   Reviewed By: riversand963 Differential Revision: D33993614 Pulled By: satyajanga fbshipit-source-id: 4b5cf938e6d2cb3931d763bef5baccc900b8c536 |

4 years ago |

|

|

d7c868b062 |

Work around snappy linker issue with newer compilers (#9517)

Summary: After https://github.com/facebook/rocksdb/issues/9481, we are using newer default compiler for build-format-compatible CircleCI nightly job, which fails on building 2.2.fb.branch branch because it tries to use a pre-compiled libsnappy.a that is checked into the repo (!). This works around that by setting SNAPPY_LDFLAGS=-lsnappy, which is only understood by such old versions. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9517 Test Plan: Run check_format_compatible.sh on Ubuntu 20 AWS machine, watch nightly run Reviewed By: hx235 Differential Revision: D34055561 Pulled By: pdillinger fbshipit-source-id: 45f9d428dd082f026773bfa8d9dd4dad66fc9378 |

4 years ago |

|

|

42e0751b3a |

Clean up VersionStorageInfo a bit (#9494)

Summary: The patch does some cleanup in and around `VersionStorageInfo`: * Renames the method `PrepareApply` to `PrepareAppend` in `Version` to make it clear that it is to be called before appending the `Version` to `VersionSet` (via `AppendVersion`), not before applying any `VersionEdit`s. * Introduces a helper method `VersionStorageInfo::PrepareForVersionAppend` (called by `Version::PrepareAppend`) that encapsulates the population of the various derived data structures in `VersionStorageInfo`, and turns the methods computing the derived structures (`UpdateNumNonEmptyLevels`, `CalculateBaseBytes` etc.) into private helpers. * Changes `Version::PrepareAppend` so it only calls `UpdateAccumulatedStats` if the `update_stats` flag is set. (Earlier, this was checked by the callee.) Related to this, it also moves the call to `ComputeCompensatedSizes` to `VersionStorageInfo::PrepareForVersionAppend`. * Updates and cleans up `version_builder_test`, `version_set_test`, and `compaction_picker_test` so `PrepareForVersionAppend` is called anytime a new `VersionStorageInfo` is set up or saved. This cleanup also involves splitting `VersionStorageInfoTest.MaxBytesForLevelDynamic` into multiple smaller test cases. * Fixes up a bunch of comments that were outdated or just plain incorrect. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9494 Test Plan: Ran `make check` and the crash test script for a while. Reviewed By: riversand963 Differential Revision: D33971666 Pulled By: ltamasi fbshipit-source-id: fda52faac7783041126e4f8dec0fe01bdcadf65a |

4 years ago |

|

|

8b62abcc21 |

Disable backup/restore for ts-stress test (#9497)

Summary: Pull Request resolved: https://github.com/facebook/rocksdb/pull/9497 Reviewed By: ajkr Differential Revision: D33990256 Pulled By: riversand963 fbshipit-source-id: 268ce16b037e23e42b14fa0fcb45535582e1a0d6 |

4 years ago |

|

|

aae3093719 |

Introduce a CountedFileSystem for counting file operations (#9283)

Summary: Added a CountedFileSystem that tracks a number of file operations (opens, closes, deletes, renames, flushes, syncs, fsyncs, reads, writes). This class was based on the ReportFileOpEnv from db_bench. This is a stepping stone PR to be able to change the SpecialEnv into a SpecialFileSystem, where several of the file varieties wish to do operation counting. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9283 Reviewed By: pdillinger Differential Revision: D33062004 Pulled By: mrambacher fbshipit-source-id: d0d297a7fb9c48c06cbf685e5fa755c27193b6f5 |

4 years ago |

|

|

3122cb4358 |

Revise APIs related to user-defined timestamp (#8946)

Summary: ajkr reminded me that we have a rule of not including per-kv related data in `WriteOptions`. Namely, `WriteOptions` should not include information about "what-to-write", but should just include information about "how-to-write". According to this rule, `WriteOptions::timestamp` (experimental) is clearly a violation. Therefore, this PR removes `WriteOptions::timestamp` for compliance. After the removal, we need to pass timestamp info via another set of APIs. This PR proposes a set of overloaded functions `Put(write_opts, key, value, ts)`, `Delete(write_opts, key, ts)`, and `SingleDelete(write_opts, key, ts)`. Planned to add `Write(write_opts, batch, ts)`, but its complexity made me reconsider doing it in another PR (maybe). For better checking and returning error early, we also add a new set of APIs to `WriteBatch` that take extra `timestamp` information when writing to `WriteBatch`es. These set of APIs in `WriteBatchWithIndex` are currently not supported, and are on our TODO list. Removed `WriteBatch::AssignTimestamps()` and renamed `WriteBatch::AssignTimestamp()` to `WriteBatch::UpdateTimestamps()` since this method require that all keys have space for timestamps allocated already and multiple timestamps can be updated. The constructor of `WriteBatch` now takes a fourth argument `default_cf_ts_sz` which is the timestamp size of the default column family. This will be used to allocate space when calling APIs that do not specify a column family handle. Also, updated `DB::Get()`, `DB::MultiGet()`, `DB::NewIterator()`, `DB::NewIterators()` methods, replacing some assertions about timestamp to returning Status code. Pull Request resolved: https://github.com/facebook/rocksdb/pull/8946 Test Plan: make check ./db_bench -benchmarks=fillseq,fillrandom,readrandom,readseq,deleterandom -user_timestamp_size=8 ./db_stress --user_timestamp_size=8 -nooverwritepercent=0 -test_secondary=0 -secondary_catch_up_one_in=0 -continuous_verification_interval=0 Make sure there is no perf regression by running the following ``` ./db_bench_opt -db=/dev/shm/rocksdb -use_existing_db=0 -level0_stop_writes_trigger=256 -level0_slowdown_writes_trigger=256 -level0_file_num_compaction_trigger=256 -disable_wal=1 -duration=10 -benchmarks=fillrandom ``` Before this PR ``` DB path: [/dev/shm/rocksdb] fillrandom : 1.831 micros/op 546235 ops/sec; 60.4 MB/s ``` After this PR ``` DB path: [/dev/shm/rocksdb] fillrandom : 1.820 micros/op 549404 ops/sec; 60.8 MB/s ``` Reviewed By: ltamasi Differential Revision: D33721359 Pulled By: riversand963 fbshipit-source-id: c131561534272c120ffb80711d42748d21badf09 |

4 years ago |

|

|

920386f2b7 |

Detect (new) Bloom/Ribbon Filter construction corruption (#9342)

Summary: Note: rebase on and merge after https://github.com/facebook/rocksdb/pull/9349, https://github.com/facebook/rocksdb/pull/9345, (optional) https://github.com/facebook/rocksdb/pull/9393 **Context:** (Quoted from pdillinger) Layers of information during new Bloom/Ribbon Filter construction in building block-based tables includes the following: a) set of keys to add to filter b) set of hashes to add to filter (64-bit hash applied to each key) c) set of Bloom indices to set in filter, with duplicates d) set of Bloom indices to set in filter, deduplicated e) final filter and its checksum This PR aims to detect corruption (e.g, unexpected hardware/software corruption on data structures residing in the memory for a long time) from b) to e) and leave a) as future works for application level. - b)'s corruption is detected by verifying the xor checksum of the hash entries calculated as the entries accumulate before being added to the filter. (i.e, `XXPH3FilterBitsBuilder::MaybeVerifyHashEntriesChecksum()`) - c) - e)'s corruption is detected by verifying the hash entries indeed exists in the constructed filter by re-querying these hash entries in the filter (i.e, `FilterBitsBuilder::MaybePostVerify()`) after computing the block checksum (except for PartitionFilter, which is done right after each `FilterBitsBuilder::Finish` for impl simplicity - see code comment for more). For this stage of detection, we assume hash entries are not corrupted after checking on b) since the time interval from b) to c) is relatively short IMO. Option to enable this feature of detection is `BlockBasedTableOptions::detect_filter_construct_corruption` which is false by default. **Summary:** - Implemented new functions `XXPH3FilterBitsBuilder::MaybeVerifyHashEntriesChecksum()` and `FilterBitsBuilder::MaybePostVerify()` - Ensured hash entries, final filter and banding and their [cache reservation ](https://github.com/facebook/rocksdb/issues/9073) are released properly despite corruption - See [Filter.construction.artifacts.release.point.pdf ](https://github.com/facebook/rocksdb/files/7923487/Design.Filter.construction.artifacts.release.point.pdf) for high-level design - Bundled and refactored hash entries's related artifact in XXPH3FilterBitsBuilder into `HashEntriesInfo` for better control on lifetime of these artifact during `SwapEntires`, `ResetEntries` - Ensured RocksDB block-based table builder calls `FilterBitsBuilder::MaybePostVerify()` after constructing the filter by `FilterBitsBuilder::Finish()` - When encountering such filter construction corruption, stop writing the filter content to files and mark such a block-based table building non-ok by storing the corruption status in the builder. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9342 Test Plan: - Added new unit test `DBFilterConstructionCorruptionTestWithParam.DetectCorruption` - Included this new feature in `DBFilterConstructionReserveMemoryTestWithParam.ReserveMemory` as this feature heavily touch ReserveMemory's impl - For fallback case, I run `./filter_bench -impl=3 -detect_filter_construct_corruption=true -reserve_table_builder_memory=true -strict_capacity_limit=true -quick -runs 10 | grep 'Build avg'` to make sure nothing break. - Added to `filter_bench`: increased filter construction time by **30%**, mostly by `MaybePostVerify()` - FastLocalBloom - Before change: `./filter_bench -impl=2 -quick -runs 10 | grep 'Build avg'`: **28.86643s** - After change: - `./filter_bench -impl=2 -detect_filter_construct_corruption=false -quick -runs 10 | grep 'Build avg'` (expect a tiny increase due to MaybePostVerify is always called regardless): **27.6644s (-4% perf improvement might be due to now we don't drop bloom hash entry in `AddAllEntries` along iteration but in bulk later, same with the bypassing-MaybePostVerify case below)** - `./filter_bench -impl=2 -detect_filter_construct_corruption=true -quick -runs 10 | grep 'Build avg'` (expect acceptable increase): **34.41159s (+20%)** - `./filter_bench -impl=2 -detect_filter_construct_corruption=true -quick -runs 10 | grep 'Build avg'` (by-passing MaybePostVerify, expect minor increase): **27.13431s (-6%)** - Standard128Ribbon - Before change: `./filter_bench -impl=3 -quick -runs 10 | grep 'Build avg'`: **122.5384s** - After change: - `./filter_bench -impl=3 -detect_filter_construct_corruption=false -quick -runs 10 | grep 'Build avg'` (expect a tiny increase due to MaybePostVerify is always called regardless - verified by removing MaybePostVerify under this case and found only +-1ns difference): **124.3588s (+2%)** - `./filter_bench -impl=3 -detect_filter_construct_corruption=true -quick -runs 10 | grep 'Build avg'`(expect acceptable increase): **159.4946s (+30%)** - `./filter_bench -impl=3 -detect_filter_construct_corruption=true -quick -runs 10 | grep 'Build avg'`(by-passing MaybePostVerify, expect minor increase) : **125.258s (+2%)** - Added to `db_stress`: `make crash_test`, `./db_stress --detect_filter_construct_corruption=true` - Manually smoke-tested: manually corrupted the filter construction in some db level tests with basic PUT and background flush. As expected, the error did get returned to users in subsequent PUT and Flush status. Reviewed By: pdillinger Differential Revision: D33746928 Pulled By: hx235 fbshipit-source-id: cb056426be5a7debc1cd16f23bc250f36a08ca57 |

4 years ago |

|

|

f6d7ec1d02 |

Ignore `total_order_seek` in DB::Get (#9427)

Summary: Apparently setting total_order_seek=true for DB::Get was intended to allow accurate read semantics if the current prefix extractor doesn't match what was used to generate SST files on disk. But since prefix_extractor was made a mutable option in 5.14.0, we have been able to detect this case and provide the correct semantics regardless of the total_order_seek option. Since that time, the option has only made Get() slower in a reasonably common case: prefix_extractor unchanged and whole_key_filtering=false. So this change primarily removes unnecessary effect of total_order_seek on Get. Also cleans up some related comments. Also adds a -total_order_seek option to db_bench and canonicalizes handling of ReadOptions in db_bench so that command line options have the expected association with library features. (There is potential for change in regression test behavior, but the old behavior is likely indefensible, or some other inconsistency would need to be fixed.) TODO in follow-up work: there should be no reason for Get() to depend on current prefix extractor at all. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9427 Test Plan: Unit tests updated. Performance (using db_bench update) Create DB with `TEST_TMPDIR=/dev/shm/rocksdb ./db_bench -benchmarks=fillrandom -num=10000000 -disable_wal=1 -write_buffer_size=10000000 -bloom_bits=16 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0 -prefix_size=12 -whole_key_filtering=0` Test with and without `-total_order_seek` on `TEST_TMPDIR=/dev/shm/rocksdb ./db_bench -use_existing_db -readonly -benchmarks=readrandom -num=10000000 -duration=40 -disable_wal=1 -bloom_bits=16 -compaction_style=2 -fifo_compaction_max_table_files_size_mb=10000 -fifo_compaction_allow_compaction=0 -prefix_size=12` Before this change, total_order_seek=false: 25188 ops/sec Before this change, total_order_seek=true: 1222 ops/sec (~20x slower) After this change, total_order_seek=false: 24570 ops/sec After this change, total_order_seek=true: 25012 ops/sec (indistinguishable) Reviewed By: siying Differential Revision: D33753458 Pulled By: pdillinger fbshipit-source-id: bf892f34907a5e407d9c40bd4d42f0adbcbe0014 |

4 years ago |

|

|

8dbd0bd11f |

db_crashtest.py use cheaper settings (#9476)

Summary: Despite attempts to optimize `db_stress` setup phase (i.e., pre-`OperateDb()`) latency in https://github.com/facebook/rocksdb/issues/9470 and https://github.com/facebook/rocksdb/issues/9475, it still always took tens of seconds. Since we still aren't able to setup a 100M key `db_stress` quickly, we should reduce the number of keys. This PR reduces it 4x while increasing `value_size_mult` 4x (from its default value of 8) so that memtables and SST files fill at a similar rate compared to before this PR. Also disabled bzip2 compression since we'll probably never use it and I noticed many CI runs spending majority of CPU on bzip2 decompression. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9476 Reviewed By: siying Differential Revision: D33898520 Pulled By: ajkr fbshipit-source-id: 855021784ad9664f2be5bce21f0339a1cf93230d |

4 years ago |

|

|

42cca28ebb |

Remove deprecated API AdvancedColumnFamilyOptions::rate_limit_delay_max_milliseconds (#9455)

Summary: **Context/Summary:** AdvancedColumnFamilyOptions::rate_limit_delay_max_milliseconds has been marked as deprecated and it's time to actually remove the code. - Keep `soft_rate_limit`/`hard_rate_limit` in `cf_mutable_options_type_info` to prevent throwing `InvalidArgument` in `GetColumnFamilyOptionsFromMap` when reading an option file still with these options (e.g, old option file generated from RocksDB before the deprecation) - Keep `soft_rate_limit`/`hard_rate_limit` in under `OptionsOldApiTest.GetOptionsFromMapTest` to test the case mentioned above. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9455 Test Plan: Rely on my eyeball and CI Reviewed By: ajkr Differential Revision: D33811664 Pulled By: hx235 fbshipit-source-id: 866859427fe710354a90f1095057f80116365ff0 |

4 years ago |

|

|

74ccd1931e |

Remove deprecated option DBOptions::skip_log_error_on_recovery (#9434)

Summary: In RocksDB DBOptions::skip_log_error_on_recovery is marked as "NOT SUPPORTED" for a long time, and setting this option does not have any effect on the behavior of RocksDB library. Therefore, we are removing it in the upcoming 7.0 release. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9434 Test Plan: CircleCI Reviewed By: ajkr Differential Revision: D33763015 Pulled By: akankshamahajan15 fbshipit-source-id: 11f09643298da6c02d3dcdb090b996f4c3cfdd76 |

4 years ago |

|

|

c11fe94000 |

Fix^2 prefix extractor testing in crash test (#9463)

Summary: Even after https://github.com/facebook/rocksdb/issues/9461 could see ``` Error: please specify prefix_size for test_batches_snapshots test! ``` Pull Request resolved: https://github.com/facebook/rocksdb/pull/9463 Test Plan: run `make blackbox_crashtest` for a long time. (Unfortunately, it's taking a long time to reproduce these failures) Reviewed By: akankshamahajan15 Differential Revision: D33838152 Pulled By: pdillinger fbshipit-source-id: b9a73c5bbb68df53f14c22b9b52f61d1f7ef38af |

4 years ago |

|

|

22321e1027 |

Remove unused API base_background_compactions (#9462)

Summary: The API is deprecated long time ago. Clean up the codebase by removing it. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9462 Test Plan: CI, fake release: D33835220 Reviewed By: riversand963 Differential Revision: D33835103 Pulled By: jay-zhuang fbshipit-source-id: 6d2dc12c8e7fdbe2700865a3e61f0e3f78bd8184 |

4 years ago |