Tag:

Branch:

Tree:

e03d958b91

main

oxigraph-8.1.1

oxigraph-8.3.2

oxigraph-main

${ noResults }

211 Commits (e03d958b9166de6f29288f2ed854722b36cd3e0e)

| Author | SHA1 | Message | Date |

|---|---|---|---|

|

|

736a7b5433 |

Remove own ToString() (#9955)

Summary: ToString() is created as some platform doesn't support std::to_string(). However, we've already used std::to_string() by mistake for 16 months (in db/db_info_dumper.cc). This commit just remove ToString(). Pull Request resolved: https://github.com/facebook/rocksdb/pull/9955 Test Plan: Watch CI tests Reviewed By: riversand963 Differential Revision: D36176799 fbshipit-source-id: bdb6dcd0e3a3ab96a1ac810f5d0188f684064471 |

4 years ago |

|

|

62d84e2a2b |

db_stress fault injection in release mode (#9957)

Summary: Previously all fault injection was ignored in release mode. This PR adds it back except for read fault injection (`--read_fault_one_in > 0`) since its dependency (`IGNORE_STATUS_IF_ERROR`) is unavailable in release mode. Other notable changes include: - Moved `EnableWriteErrorInjection()` for `--write_fault_one_in > 0` so it's after `DB::Open()` without depending on `SyncPoint` - Made `--read_fault_one_in > 0` return an error in release mode - Updated `db_crashtest.py` to always set `--read_fault_one_in=0` in release mode Pull Request resolved: https://github.com/facebook/rocksdb/pull/9957 Test Plan: ``` $ DEBUG_LEVEL=0 make -j24 db_stress $ DEBUG_LEVEL=0 TEST_TMPDIR=/dev/shm python3 tools/db_crashtest.py blackbox ``` Reviewed By: anand1976 Differential Revision: D36193830 Pulled By: ajkr fbshipit-source-id: 0b97946b4e3f06e3e0f6e7833c2763da08ec5321 |

4 years ago |

|

|

a62506aee2 |

Enable unsynced data loss in crash test (#9947)

Summary:

`db_stress` already tracks expected state history to verify prefix-recoverability when `sync_fault_injection` is enabled. This PR enables `sync_fault_injection` in `db_crashtest.py`.

Previously enabling `sync_fault_injection` would cause whole unsynced files to be dropped. This PR adds a more interesting case of losing only the tail of unsynced data by implementing `TestFSWritableFile::RangeSync()` and enabling `{wal_,}bytes_per_sync`.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/9947

Test Plan:

- regular blackbox, blackbox --simple

- various commands to stress this new case, such as `TEST_TMPDIR=/dev/shm python3 tools/db_crashtest.py blackbox --max_key=100000 --write_buffer_size=2097152 --avoid_flush_during_recovery=1 --disable_wal=0 --interval=10 --db_write_buffer_size=0 --sync_fault_injection=1 --wal_compression=none --delpercent=0 --delrangepercent=0 --prefixpercent=0 --iterpercent=0 --writepercent=100 --readpercent=0 --wal_bytes_per_sync=131072 --duration=36000 --sync=0 --open_write_fault_one_in=16`

Reviewed By: riversand963

Differential Revision: D36152775

Pulled By: ajkr

fbshipit-source-id: 44b68a7fad0a4cf74af9fe1f39be01baab8141d8

|

4 years ago |

|

|

49628c9a83 |

Use std::numeric_limits<> (#9954)

Summary: Right now we still don't fully use std::numeric_limits but use a macro, mainly for supporting VS 2013. Right now we only support VS 2017 and up so it is not a problem. The code comment claims that MinGW still needs it. We don't have a CI running MinGW so it's hard to validate. since we now require C++17, it's hard to imagine MinGW would still build RocksDB but doesn't support std::numeric_limits<>. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9954 Test Plan: See CI Runs. Reviewed By: riversand963 Differential Revision: D36173954 fbshipit-source-id: a35a73af17cdcae20e258cdef57fcf29a50b49e0 |

4 years ago |

|

|

9d634dd5b6 |

Rename kRemoveWithSingleDelete to kPurge (#9951)

Summary: PR 9929 adds a new CompactionFilter::Decision, i.e. kRemoveWithSingleDelete so that CompactionFilter can indicate to CompactionIterator that a PUT can only be removed with SD. However, how CompactionIterator handles such a key is implementation detail which should not be implied in the public API. In fact, such a PUT can just be dropped. This is an optimization which we will apply in the near future. Discussion thread: https://github.com/facebook/rocksdb/pull/9929#discussion_r863198964 Pull Request resolved: https://github.com/facebook/rocksdb/pull/9951 Test Plan: make check Reviewed By: ajkr Differential Revision: D36156590 Pulled By: riversand963 fbshipit-source-id: 7b7d01f47bba4cad7d9cca6ca52984f27f88b372 |

4 years ago |

|

|

cda34dd64a |

Allow consecutive SingleDelete() in stress/crash test (#9930)

Summary: We need to support consecutive SingleDelete(), so this PR adds it to the stress/crash tests. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9930 Test Plan: `python3 tools/db_crashtest.py blackbox --simple --nooverwritepercent=50 --writepercent=90 --delpercent=10 --readpercent=0 --prefixpercent=0 --delrangepercent=0 --iterpercent=0 --max_key=1000000 --duration=3600 --interval=10 --write_buffer_size=1048576 --target_file_size_base=1048576 --max_bytes_for_level_base=4194304 --value_size_mult=33` Reviewed By: riversand963 Differential Revision: D36081863 Pulled By: ajkr fbshipit-source-id: 3566cdbaed375b8003126fc298968eb1a854317f |

4 years ago |

|

|

06394ff4e7 |

Fix a bug of CompactionIterator/CompactionFilter using `Delete` (#9929)

Summary: When compaction filter determines that a key should be removed, it updates the internal key's type to `Delete`. If this internal key is preserved in current compaction but seen by a later compaction together with `SingleDelete`, it will cause compaction iterator to return Corruption. To fix the issue, compaction filter should return more information in addition to the intention of removing a key. Therefore, we add a new `kRemoveWithSingleDelete` to `CompactionFilter::Decision`. Seeing `kRemoveWithSingleDelete`, compaction iterator will update the op type of the internal key to `kTypeSingleDelete`. In addition, I updated db_stress_shared_state.[cc|h] so that `no_overwrite_ids_` becomes `const`. It is easier to reason about thread-safety if accessed from multiple threads. This information is passed to `PrepareTxnDBOptions()` when calling from `Open()` so that we can set up the rollback deletion type callback for transactions. Finally, disable compaction filter for multiops_txn because the key removal logic of `DbStressCompactionFilter` does not quite work with `MultiOpsTxnsStressTest`. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9929 Test Plan: make check make crash_test make crash_test_with_txn Reviewed By: anand1976 Differential Revision: D36069678 Pulled By: riversand963 fbshipit-source-id: cedd2f1ba958af59ad3916f1ba6f424307955f92 |

4 years ago |

|

|

94e245a14d |

Improve stress test for MultiOpsTxnsStressTest (#9829)

Summary: Adds more coverage to `MultiOpsTxnsStressTest` with a focus on write-prepared transactions. 1. Add a hack to manually evict commit cache entries. We currently cannot assign small values to `wp_commit_cache_bits` because it requires a prepared transaction to commit within a certain range of sequence numbers, otherwise it will throw. 2. Add coverage for commit-time-write-batch. If write policy is write-prepared, we need to set `use_only_the_last_commit_time_batch_for_recovery` to true. 3. After each flush/compaction, verify data consistency. This is possible since data size can be small: default numbers of primary/secondary keys are just 1000. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9829 Test Plan: ``` TEST_TMPDIR=/dev/shm/rocksdb_crashtest_blackbox/ make blackbox_crash_test_with_multiops_wp_txn ``` Reviewed By: pdillinger Differential Revision: D35806678 Pulled By: riversand963 fbshipit-source-id: d7fde7a29fda0fb481a61f553e0ca0c47da93616 |

4 years ago |

|

|

68ee228dec |

RocksDB: fix bug in crash-recovery correctness testing (#9897)

Summary: Pull Request resolved: https://github.com/facebook/rocksdb/pull/9897 Fixes https://github.com/facebook/rocksdb/issues/9385. Update State to reflect the value in the DB after a crash Reviewed By: ajkr Differential Revision: D35788808 fbshipit-source-id: 2d21d8537ab380a17cad3e90ac72b3eb1b56de9f |

4 years ago |

|

|

c3d7e16252 |

Add WAL compression to stress tests (#9811)

Summary: Add the WAL compression feature to the stress test. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9811 Reviewed By: riversand963 Differential Revision: D35414316 Pulled By: anand1976 fbshipit-source-id: 0c17b1ec55679a52f088ad368798b57139bd921a |

4 years ago |

|

|

49623f9c8e |

Account memory of big memory users in BlockBasedTable in global memory limit (#9748)

Summary: **Context:** Through heap profiling, we discovered that `BlockBasedTableReader` objects can accumulate and lead to high memory usage (e.g, `max_open_file = -1`). These memories are currently not saved, not tracked, not constrained and not cache evict-able. As a first step to improve this, similar to https://github.com/facebook/rocksdb/pull/8428, this PR is to track an estimate of `BlockBasedTableReader` object's memory in block cache and fail future creation if the memory usage exceeds the available space of cache at the time of creation. **Summary:** - Approximate big memory users (`BlockBasedTable::Rep` and `TableProperties` )' memory usage in addition to the existing estimated ones (filter block/index block/un-compression dictionary) - Charge all of these memory usages to block cache on `BlockBasedTable::Open()` and release them on `~BlockBasedTable()` as there is no memory usage fluctuation of concern in between - Refactor on CacheReservationManager (and its call-sites) to add concurrent support for BlockBasedTable used in this PR. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9748 Test Plan: - New unit tests - db bench: `OpenDb` : **-0.52% in ms** - Setup `./db_bench -benchmarks=fillseq -db=/dev/shm/testdb -disable_auto_compactions=1 -write_buffer_size=1048576` - Repeated run with pre-change w/o feature and post-change with feature, benchmark `OpenDb`: `./db_bench -benchmarks=readrandom -use_existing_db=1 -db=/dev/shm/testdb -reserve_table_reader_memory=true (remove this when running w/o feature) -file_opening_threads=3 -open_files=-1 -report_open_timing=true| egrep 'OpenDb:'` #-run | (feature-off) avg milliseconds | std milliseconds | (feature-on) avg milliseconds | std milliseconds | change (%) -- | -- | -- | -- | -- | -- 10 | 11.4018 | 5.95173 | 9.47788 | 1.57538 | -16.87382694 20 | 9.23746 | 0.841053 | 9.32377 | 1.14074 | 0.9343477536 40 | 9.0876 | 0.671129 | 9.35053 | 1.11713 | 2.893283155 80 | 9.72514 | 2.28459 | 9.52013 | 1.0894 | -2.108041632 160 | 9.74677 | 0.991234 | 9.84743 | 1.73396 | 1.032752389 320 | 10.7297 | 5.11555 | 10.547 | 1.97692 | **-1.70275031** 640 | 11.7092 | 2.36565 | 11.7869 | 2.69377 | **0.6635807741** - db bench on write with cost to cache in WriteBufferManager (just in case this PR's CRM refactoring accidentally slows down anything in WBM) : `fillseq` : **+0.54% in micros/op** `./db_bench -benchmarks=fillseq -db=/dev/shm/testdb -disable_auto_compactions=1 -cost_write_buffer_to_cache=true -write_buffer_size=10000000000 | egrep 'fillseq'` #-run | (pre-PR) avg micros/op | std micros/op | (post-PR) avg micros/op | std micros/op | change (%) -- | -- | -- | -- | -- | -- 10 | 6.15 | 0.260187 | 6.289 | 0.371192 | 2.260162602 20 | 7.28025 | 0.465402 | 7.37255 | 0.451256 | 1.267813605 40 | 7.06312 | 0.490654 | 7.13803 | 0.478676 | **1.060579461** 80 | 7.14035 | 0.972831 | 7.14196 | 0.92971 | **0.02254791432** - filter bench: `bloom filter`: **-0.78% in ms/key** - ` ./filter_bench -impl=2 -quick -reserve_table_builder_memory=true | grep 'Build avg'` #-run | (pre-PR) avg ns/key | std ns/key | (post-PR) ns/key | std ns/key | change (%) -- | -- | -- | -- | -- | -- 10 | 26.4369 | 0.442182 | 26.3273 | 0.422919 | **-0.4145720565** 20 | 26.4451 | 0.592787 | 26.1419 | 0.62451 | **-1.1465262** - Crash test `python3 tools/db_crashtest.py blackbox --reserve_table_reader_memory=1 --cache_size=1` killed as normal Reviewed By: ajkr Differential Revision: D35136549 Pulled By: hx235 fbshipit-source-id: 146978858d0f900f43f4eb09bfd3e83195e3be28 |

4 years ago |

|

|

a180c5cc3a |

Added GetMergeOperands() to stress test (#9804)

Summary: db_stress does not yet cover is GetMergeOperands(), added GetMergeOperands() to db_stress. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9804 Test Plan: ```make -j32 db_stress``` ```python3 tools/db_crashtest.py blackbox --simple --interval=30 --duration=2400 --max_key=100000 --write_buffer_size=524288 --target_file_size_base=524288 --max_bytes_for_level_base=2097152 --value_size_mult=33``` Reviewed By: ajkr Differential Revision: D35387137 Pulled By: cbi42 fbshipit-source-id: 8f851ef68b5af4d824128ad55ebe564f7ad6f7e6 |

4 years ago |

|

|

6534c6dea4 |

Fix remaining uses of "backupable" (#9792)

Summary: Various renaming and fixes to get rid of remaining uses of "backupable" which is terminology leftover from the original, flawed design of BackupableDB. Now any DB can be backed up, using BackupEngine. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9792 Test Plan: CI Reviewed By: ajkr Differential Revision: D35334386 Pulled By: pdillinger fbshipit-source-id: 2108a42b4575c8cccdfd791c549aae93ec2f3329 |

4 years ago |

|

|

fd66005628 |

Add 'adaptive_readahead' and 'async_io' options to db_stress (#9750)

Summary: Same as title Pull Request resolved: https://github.com/facebook/rocksdb/pull/9750 Test Plan: export CRASH_TEST_EXT_ARGS=" --async_io=1 --adaptive_readahead=1; make -j crash_test Reviewed By: jay-zhuang Differential Revision: D35114326 Pulled By: akankshamahajan15 fbshipit-source-id: 8b05c95be09f7aff6cb9eb757aa20a6520349d45 |

4 years ago |

|

|

cff0d1e8e6 |

New backup meta schema, with file temperatures (#9660)

Summary: The primary goal of this change is to add support for backing up and restoring (applying on restore) file temperature metadata, without committing to either the DB manifest or the FS reported "current" temperatures being exclusive "source of truth". To achieve this goal, we need to add temperature information to backup metadata, which requires updated backup meta schema. Fortunately I prepared for this in https://github.com/facebook/rocksdb/issues/8069, which began forward compatibility in version 6.19.0 for this kind of schema update. (Previously, backup meta schema was not extensible! Making this schema update public will allow some other "nice to have" features like taking backups with hard links, and avoiding crc32c checksum computation when another checksum is already available.) While schema version 2 is newly public, the default schema version is still 1. Until we change the default, users will need to set to 2 to enable features like temperature data backup+restore. New metadata like temperature information will be ignored with a warning in versions before this change and since 6.19.0. The metadata is considered ignorable because a functioning DB can be restored without it. Some detail: * Some renaming because "future schema" is now just public schema 2. * Initialize some atomics in TestFs (linter reported) * Add temperature hint support to SstFileDumper (used by BackupEngine) Pull Request resolved: https://github.com/facebook/rocksdb/pull/9660 Test Plan: related unit test majorly updated for the new functionality, including some shared testing support for tracking temperatures in a FS. Some other tests and testing hooks into production code also updated for making the backup meta schema change public. Reviewed By: ajkr Differential Revision: D34686968 Pulled By: pdillinger fbshipit-source-id: 3ac1fa3e67ee97ca8a5103d79cc87d872c1d862a |

4 years ago |

|

|

5894761056 |

Improve stress test for transactions (#9568)

Summary: Test only, no change to functionality. Extremely low risk of library regression. Update test key generation by maintaining existing and non-existing keys. Update db_crashtest.py to drive multiops_txn stress test for both write-committed and write-prepared. Add a make target 'blackbox_crash_test_with_multiops_txn'. Running the following commands caught the bug exposed in https://github.com/facebook/rocksdb/issues/9571. ``` $rm -rf /tmp/rocksdbtest/* $./db_stress -progress_reports=0 -test_multi_ops_txns -use_txn -clear_column_family_one_in=0 \ -column_families=1 -writepercent=0 -delpercent=0 -delrangepercent=0 -customopspercent=60 \ -readpercent=20 -prefixpercent=0 -iterpercent=20 -reopen=0 -ops_per_thread=1000 -ub_a=10000 \ -ub_c=100 -destroy_db_initially=0 -key_spaces_path=/dev/shm/key_spaces_desc -threads=32 -read_fault_one_in=0 $./db_stress -progress_reports=0 -test_multi_ops_txns -use_txn -clear_column_family_one_in=0 -column_families=1 -writepercent=0 -delpercent=0 -delrangepercent=0 -customopspercent=60 -readpercent=20 \ -prefixpercent=0 -iterpercent=20 -reopen=0 -ops_per_thread=1000 -ub_a=10000 -ub_c=100 -destroy_db_initially=0 \ -key_spaces_path=/dev/shm/key_spaces_desc -threads=32 -read_fault_one_in=0 ``` Running the following command caught a bug which will be fixed in https://github.com/facebook/rocksdb/issues/9648 . ``` $TEST_TMPDIR=/dev/shm make blackbox_crash_test_with_multiops_wc_txn ``` Pull Request resolved: https://github.com/facebook/rocksdb/pull/9568 Reviewed By: jay-zhuang Differential Revision: D34308154 Pulled By: riversand963 fbshipit-source-id: 99ff1b65c19b46c471d2f2d3b47adcd342a1b9e7 |

4 years ago |

|

|

ca0ef54f16 |

Rate-limit automatic WAL flush after each user write (#9607)

Summary: **Context:** WAL flush is currently not rate-limited by `Options::rate_limiter`. This PR is to provide rate-limiting to auto WAL flush, the one that automatically happen after each user write operation (i.e, `Options::manual_wal_flush == false`), by adding `WriteOptions::rate_limiter_options`. Note that we are NOT rate-limiting WAL flush that do NOT automatically happen after each user write, such as `Options::manual_wal_flush == true + manual FlushWAL()` (rate-limiting multiple WAL flushes), for the benefits of: - being consistent with [ReadOptions::rate_limiter_priority](https://github.com/facebook/rocksdb/blob/7.0.fb/include/rocksdb/options.h#L515) - being able to turn off some WAL flush's rate-limiting but not all (e.g, turn off specific the WAL flush of a critical user write like a service's heartbeat) `WriteOptions::rate_limiter_options` only accept `Env::IO_USER` and `Env::IO_TOTAL` currently due to an implementation constraint. - The constraint is that we currently queue parallel writes (including WAL writes) based on FIFO policy which does not factor rate limiter priority into this layer's scheduling. If we allow lower priorities such as `Env::IO_HIGH/MID/LOW` and such writes specified with lower priorities occurs before ones specified with higher priorities (even just by a tiny bit in arrival time), the former would have blocked the latter, leading to a "priority inversion" issue and contradictory to what we promise for rate-limiting priority. Therefore we only allow `Env::IO_USER` and `Env::IO_TOTAL` right now before improving that scheduling. A pre-requisite to this feature is to support operation-level rate limiting in `WritableFileWriter`, which is also included in this PR. **Summary:** - Renamed test suite `DBRateLimiterTest to DBRateLimiterOnReadTest` for adding a new test suite - Accept `rate_limiter_priority` in `WritableFileWriter`'s private and public write functions - Passed `WriteOptions::rate_limiter_options` to `WritableFileWriter` in the path of automatic WAL flush. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9607 Test Plan: - Added new unit test to verify existing flush/compaction rate-limiting does not break, since `DBTest, RateLimitingTest` is disabled and current db-level rate-limiting tests focus on read only (e.g, `db_rate_limiter_test`, `DBTest2, RateLimitedCompactionReads`). - Added new unit test `DBRateLimiterOnWriteWALTest, AutoWalFlush` - `strace -ftt -e trace=write ./db_bench -benchmarks=fillseq -db=/dev/shm/testdb -rate_limit_auto_wal_flush=1 -rate_limiter_bytes_per_sec=15 -rate_limiter_refill_period_us=1000000 -write_buffer_size=100000000 -disable_auto_compactions=1 -num=100` - verified that WAL flush(i.e, system-call _write_) were chunked into 15 bytes and each _write_ was roughly 1 second apart - verified the chunking disappeared when `-rate_limit_auto_wal_flush=0` - crash test: `python3 tools/db_crashtest.py blackbox --disable_wal=0 --rate_limit_auto_wal_flush=1 --rate_limiter_bytes_per_sec=10485760 --interval=10` killed as normal **Benchmarked on flush/compaction to ensure no performance regression:** - compaction with rate-limiting (see table 1, avg over 1280-run): pre-change: **915635 micros/op**; post-change: **907350 micros/op (improved by 0.106%)** ``` #!/bin/bash TEST_TMPDIR=/dev/shm/testdb START=1 NUM_DATA_ENTRY=8 N=10 rm -f compact_bmk_output.txt compact_bmk_output_2.txt dont_care_output.txt for i in $(eval echo "{$START..$NUM_DATA_ENTRY}") do NUM_RUN=$(($N*(2**($i-1)))) for j in $(eval echo "{$START..$NUM_RUN}") do ./db_bench --benchmarks=fillrandom -db=$TEST_TMPDIR -disable_auto_compactions=1 -write_buffer_size=6710886 > dont_care_output.txt && ./db_bench --benchmarks=compact -use_existing_db=1 -db=$TEST_TMPDIR -level0_file_num_compaction_trigger=1 -rate_limiter_bytes_per_sec=100000000 | egrep 'compact' done > compact_bmk_output.txt && awk -v NUM_RUN=$NUM_RUN '{sum+=$3;sum_sqrt+=$3^2}END{print sum/NUM_RUN, sqrt(sum_sqrt/NUM_RUN-(sum/NUM_RUN)^2)}' compact_bmk_output.txt >> compact_bmk_output_2.txt done ``` - compaction w/o rate-limiting (see table 2, avg over 640-run): pre-change: **822197 micros/op**; post-change: **823148 micros/op (regressed by 0.12%)** ``` Same as above script, except that -rate_limiter_bytes_per_sec=0 ``` - flush with rate-limiting (see table 3, avg over 320-run, run on the [patch]( |

4 years ago |

|

|

4a9ae4f713 |

Avoid .trash handling race in db_stress Checkpoint (#9673)

Summary: The shared SstFileManager in db_stress can create background work that races with TestCheckpoint such that DestroyDir fails because of file rename while it is running. Analogous to change already made for TestBackupRestore Pull Request resolved: https://github.com/facebook/rocksdb/pull/9673 Test Plan: make blackbox_crash_test for a while with checkpoint_one_in=100 Reviewed By: ajkr Differential Revision: D34702215 Pulled By: pdillinger fbshipit-source-id: ac3e166efa28cba6c6f4b9b391e799394603ebfd |

4 years ago |

|

|

3362a730dc |

Avoid usage of ReopenWritableFile in db_stress (#9649)

Summary: The UniqueIdVerifier constructor currently calls ReopenWritableFile on the FileSystem, which might not be supported. Instead of relying on reopening the unique IDs file for writing, create a new file and copy the original contents. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9649 Test Plan: Run db_stress Reviewed By: pdillinger Differential Revision: D34572307 Pulled By: anand1976 fbshipit-source-id: 3a777908582d79dae57488d4278bad126774f698 |

4 years ago |

|

|

d3a2f284d9 |

Add Temperature info in `NewSequentialFile()` (#9499)

Summary: Add Temperature hints information from RocksDB in API `NewSequentialFile()`. backup and checkpoint operations need to open the source files with `NewSequentialFile()`, which will have the temperature hints. Other operations are not covered. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9499 Test Plan: Added unittest Reviewed By: pdillinger Differential Revision: D34006115 Pulled By: jay-zhuang fbshipit-source-id: 568b34602b76520e53128672bd07e9d886786a2f |

4 years ago |

|

|

babe56ddba |

Add rate limiter priority to ReadOptions (#9424)

Summary: Users can set the priority for file reads associated with their operation by setting `ReadOptions::rate_limiter_priority` to something other than `Env::IO_TOTAL`. Rate limiting `VerifyChecksum()` and `VerifyFileChecksums()` is the motivation for this PR, so it also includes benchmarks and minor bug fixes to get that working. `RandomAccessFileReader::Read()` already had support for rate limiting compaction reads. I changed that rate limiting to be non-specific to compaction, but rather performed according to the passed in `Env::IOPriority`. Now the compaction read rate limiting is supported by setting `rate_limiter_priority = Env::IO_LOW` on its `ReadOptions`. There is no default value for the new `Env::IOPriority` parameter to `RandomAccessFileReader::Read()`. That means this PR goes through all callers (in some cases multiple layers up the call stack) to find a `ReadOptions` to provide the priority. There are TODOs for cases I believe it would be good to let user control the priority some day (e.g., file footer reads), and no TODO in cases I believe it doesn't matter (e.g., trace file reads). The API doc only lists the missing cases where a file read associated with a provided `ReadOptions` cannot be rate limited. For cases like file ingestion checksum calculation, there is no API to provide `ReadOptions` or `Env::IOPriority`, so I didn't count that as missing. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9424 Test Plan: - new unit tests - new benchmarks on ~50MB database with 1MB/s read rate limit and 100ms refill interval; verified with strace reads are chunked (at 0.1MB per chunk) and spaced roughly 100ms apart. - setup command: `./db_bench -benchmarks=fillrandom,compact -db=/tmp/testdb -target_file_size_base=1048576 -disable_auto_compactions=true -file_checksum=true` - benchmarks command: `strace -ttfe pread64 ./db_bench -benchmarks=verifychecksum,verifyfilechecksums -use_existing_db=true -db=/tmp/testdb -rate_limiter_bytes_per_sec=1048576 -rate_limit_bg_reads=1 -rate_limit_user_ops=true -file_checksum=true` - crash test using IO_USER priority on non-validation reads with https://github.com/facebook/rocksdb/issues/9567 reverted: `python3 tools/db_crashtest.py blackbox --max_key=1000000 --write_buffer_size=524288 --target_file_size_base=524288 --level_compaction_dynamic_level_bytes=true --duration=3600 --rate_limit_bg_reads=true --rate_limit_user_ops=true --rate_limiter_bytes_per_sec=10485760 --interval=10` Reviewed By: hx235 Differential Revision: D33747386 Pulled By: ajkr fbshipit-source-id: a2d985e97912fba8c54763798e04f006ccc56e0c |

4 years ago |

|

|

685044dff2 |

Remove timestamp from key in expected state (#9525)

Summary: The keys as part of write batch read from trace file can contain trailing timestamps. This PR removes them before calling `ExpectedState`. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9525 Test Plan: make check make crash_test_with_ts Reviewed By: ajkr Differential Revision: D34082358 Pulled By: riversand963 fbshipit-source-id: 78c925659e2a19e4a8278fb4a8ddf5070e265c04 |

4 years ago |

|

|

9745c68eb1 |

Remove deprecated option new_table_reader_for_compaction_inputs (#9443)

Summary: In RocksDB option new_table_reader_for_compaction_inputs has not effect on Compaction or on the behavior of RocksDB library. Therefore, we are removing it in the upcoming 7.0 release. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9443 Test Plan: CircleCI Reviewed By: ajkr Differential Revision: D33788508 Pulled By: akankshamahajan15 fbshipit-source-id: 324ca6f12bfd019e9bd5e1b0cdac39be5c3cec7d |

4 years ago |

|

|

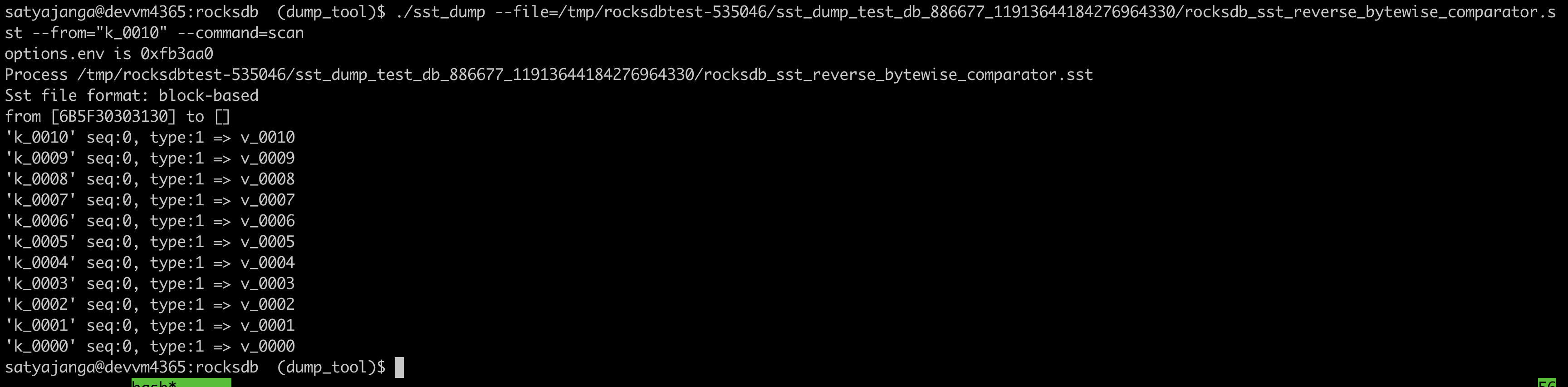

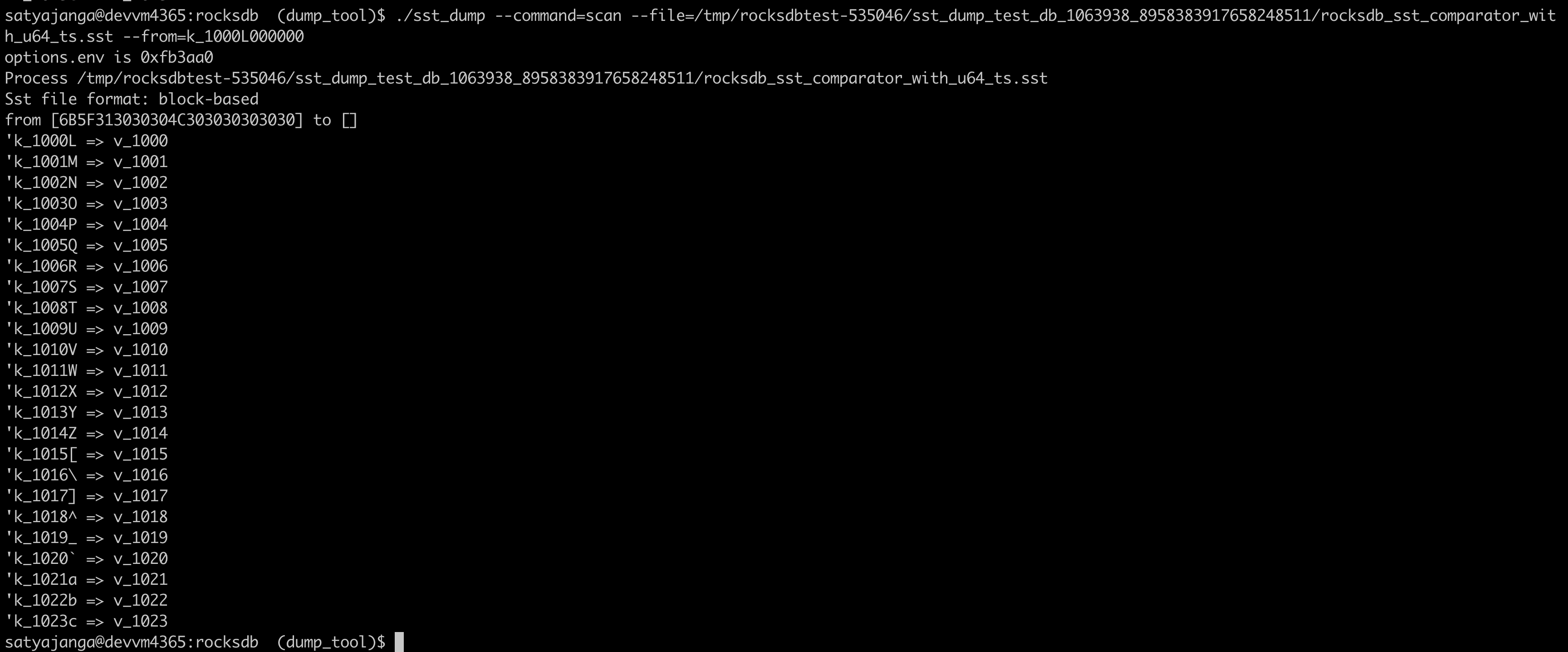

036bbab6f7 |

Use the comparator from the sst file table properties in sst_dump_tool (#9491)

Summary: We introduced a new Comparator for timestamp in user keys. In the sst_dump_tool by default we use BytewiseComparator to read sst files. This change allows us to read comparator_name from table properties in meta data block and use it to read. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9491 Test Plan: added unittests for new functionality. make check   Reviewed By: riversand963 Differential Revision: D33993614 Pulled By: satyajanga fbshipit-source-id: 4b5cf938e6d2cb3931d763bef5baccc900b8c536 |

4 years ago |

|

|

629e3e1d77 |

Fix spelling in public API (#9490)

Summary: I feel it would be nice if we can fix this spelling error. In `SizeApproximationOptions`, the `include_memtabtles` should be `include_memtables`. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9490 Test Plan: make check Reviewed By: hx235 Differential Revision: D33949862 Pulled By: riversand963 fbshipit-source-id: b2be67501b65d4aabb6b8df1bf25eb8d54cc1466 |

4 years ago |

|

|

3122cb4358 |

Revise APIs related to user-defined timestamp (#8946)

Summary: ajkr reminded me that we have a rule of not including per-kv related data in `WriteOptions`. Namely, `WriteOptions` should not include information about "what-to-write", but should just include information about "how-to-write". According to this rule, `WriteOptions::timestamp` (experimental) is clearly a violation. Therefore, this PR removes `WriteOptions::timestamp` for compliance. After the removal, we need to pass timestamp info via another set of APIs. This PR proposes a set of overloaded functions `Put(write_opts, key, value, ts)`, `Delete(write_opts, key, ts)`, and `SingleDelete(write_opts, key, ts)`. Planned to add `Write(write_opts, batch, ts)`, but its complexity made me reconsider doing it in another PR (maybe). For better checking and returning error early, we also add a new set of APIs to `WriteBatch` that take extra `timestamp` information when writing to `WriteBatch`es. These set of APIs in `WriteBatchWithIndex` are currently not supported, and are on our TODO list. Removed `WriteBatch::AssignTimestamps()` and renamed `WriteBatch::AssignTimestamp()` to `WriteBatch::UpdateTimestamps()` since this method require that all keys have space for timestamps allocated already and multiple timestamps can be updated. The constructor of `WriteBatch` now takes a fourth argument `default_cf_ts_sz` which is the timestamp size of the default column family. This will be used to allocate space when calling APIs that do not specify a column family handle. Also, updated `DB::Get()`, `DB::MultiGet()`, `DB::NewIterator()`, `DB::NewIterators()` methods, replacing some assertions about timestamp to returning Status code. Pull Request resolved: https://github.com/facebook/rocksdb/pull/8946 Test Plan: make check ./db_bench -benchmarks=fillseq,fillrandom,readrandom,readseq,deleterandom -user_timestamp_size=8 ./db_stress --user_timestamp_size=8 -nooverwritepercent=0 -test_secondary=0 -secondary_catch_up_one_in=0 -continuous_verification_interval=0 Make sure there is no perf regression by running the following ``` ./db_bench_opt -db=/dev/shm/rocksdb -use_existing_db=0 -level0_stop_writes_trigger=256 -level0_slowdown_writes_trigger=256 -level0_file_num_compaction_trigger=256 -disable_wal=1 -duration=10 -benchmarks=fillrandom ``` Before this PR ``` DB path: [/dev/shm/rocksdb] fillrandom : 1.831 micros/op 546235 ops/sec; 60.4 MB/s ``` After this PR ``` DB path: [/dev/shm/rocksdb] fillrandom : 1.820 micros/op 549404 ops/sec; 60.8 MB/s ``` Reviewed By: ltamasi Differential Revision: D33721359 Pulled By: riversand963 fbshipit-source-id: c131561534272c120ffb80711d42748d21badf09 |

4 years ago |

|

|

920386f2b7 |

Detect (new) Bloom/Ribbon Filter construction corruption (#9342)

Summary: Note: rebase on and merge after https://github.com/facebook/rocksdb/pull/9349, https://github.com/facebook/rocksdb/pull/9345, (optional) https://github.com/facebook/rocksdb/pull/9393 **Context:** (Quoted from pdillinger) Layers of information during new Bloom/Ribbon Filter construction in building block-based tables includes the following: a) set of keys to add to filter b) set of hashes to add to filter (64-bit hash applied to each key) c) set of Bloom indices to set in filter, with duplicates d) set of Bloom indices to set in filter, deduplicated e) final filter and its checksum This PR aims to detect corruption (e.g, unexpected hardware/software corruption on data structures residing in the memory for a long time) from b) to e) and leave a) as future works for application level. - b)'s corruption is detected by verifying the xor checksum of the hash entries calculated as the entries accumulate before being added to the filter. (i.e, `XXPH3FilterBitsBuilder::MaybeVerifyHashEntriesChecksum()`) - c) - e)'s corruption is detected by verifying the hash entries indeed exists in the constructed filter by re-querying these hash entries in the filter (i.e, `FilterBitsBuilder::MaybePostVerify()`) after computing the block checksum (except for PartitionFilter, which is done right after each `FilterBitsBuilder::Finish` for impl simplicity - see code comment for more). For this stage of detection, we assume hash entries are not corrupted after checking on b) since the time interval from b) to c) is relatively short IMO. Option to enable this feature of detection is `BlockBasedTableOptions::detect_filter_construct_corruption` which is false by default. **Summary:** - Implemented new functions `XXPH3FilterBitsBuilder::MaybeVerifyHashEntriesChecksum()` and `FilterBitsBuilder::MaybePostVerify()` - Ensured hash entries, final filter and banding and their [cache reservation ](https://github.com/facebook/rocksdb/issues/9073) are released properly despite corruption - See [Filter.construction.artifacts.release.point.pdf ](https://github.com/facebook/rocksdb/files/7923487/Design.Filter.construction.artifacts.release.point.pdf) for high-level design - Bundled and refactored hash entries's related artifact in XXPH3FilterBitsBuilder into `HashEntriesInfo` for better control on lifetime of these artifact during `SwapEntires`, `ResetEntries` - Ensured RocksDB block-based table builder calls `FilterBitsBuilder::MaybePostVerify()` after constructing the filter by `FilterBitsBuilder::Finish()` - When encountering such filter construction corruption, stop writing the filter content to files and mark such a block-based table building non-ok by storing the corruption status in the builder. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9342 Test Plan: - Added new unit test `DBFilterConstructionCorruptionTestWithParam.DetectCorruption` - Included this new feature in `DBFilterConstructionReserveMemoryTestWithParam.ReserveMemory` as this feature heavily touch ReserveMemory's impl - For fallback case, I run `./filter_bench -impl=3 -detect_filter_construct_corruption=true -reserve_table_builder_memory=true -strict_capacity_limit=true -quick -runs 10 | grep 'Build avg'` to make sure nothing break. - Added to `filter_bench`: increased filter construction time by **30%**, mostly by `MaybePostVerify()` - FastLocalBloom - Before change: `./filter_bench -impl=2 -quick -runs 10 | grep 'Build avg'`: **28.86643s** - After change: - `./filter_bench -impl=2 -detect_filter_construct_corruption=false -quick -runs 10 | grep 'Build avg'` (expect a tiny increase due to MaybePostVerify is always called regardless): **27.6644s (-4% perf improvement might be due to now we don't drop bloom hash entry in `AddAllEntries` along iteration but in bulk later, same with the bypassing-MaybePostVerify case below)** - `./filter_bench -impl=2 -detect_filter_construct_corruption=true -quick -runs 10 | grep 'Build avg'` (expect acceptable increase): **34.41159s (+20%)** - `./filter_bench -impl=2 -detect_filter_construct_corruption=true -quick -runs 10 | grep 'Build avg'` (by-passing MaybePostVerify, expect minor increase): **27.13431s (-6%)** - Standard128Ribbon - Before change: `./filter_bench -impl=3 -quick -runs 10 | grep 'Build avg'`: **122.5384s** - After change: - `./filter_bench -impl=3 -detect_filter_construct_corruption=false -quick -runs 10 | grep 'Build avg'` (expect a tiny increase due to MaybePostVerify is always called regardless - verified by removing MaybePostVerify under this case and found only +-1ns difference): **124.3588s (+2%)** - `./filter_bench -impl=3 -detect_filter_construct_corruption=true -quick -runs 10 | grep 'Build avg'`(expect acceptable increase): **159.4946s (+30%)** - `./filter_bench -impl=3 -detect_filter_construct_corruption=true -quick -runs 10 | grep 'Build avg'`(by-passing MaybePostVerify, expect minor increase) : **125.258s (+2%)** - Added to `db_stress`: `make crash_test`, `./db_stress --detect_filter_construct_corruption=true` - Manually smoke-tested: manually corrupted the filter construction in some db level tests with basic PUT and background flush. As expected, the error did get returned to users in subsequent PUT and Flush status. Reviewed By: pdillinger Differential Revision: D33746928 Pulled By: hx235 fbshipit-source-id: cb056426be5a7debc1cd16f23bc250f36a08ca57 |

4 years ago |

|

|

7cd5763274 |

Fix a copy-paste bug related to background threads in db_stress (#9485)

Summary: Fixes a typo introduced in https://github.com/facebook/rocksdb/pull/9466. Fixes https://github.com/facebook/rocksdb/issues/9482 Pull Request resolved: https://github.com/facebook/rocksdb/pull/9485 Test Plan: ``` COMPILE_WITH_TSAN=1 make db_stress -j24 ./db_stress --ops_per_thread=1000 --reopen=5 ``` Reviewed By: ajkr Differential Revision: D33928601 Pulled By: ltamasi fbshipit-source-id: 3e01a0ca5fffb56c268c811cbe045413b225059a |

4 years ago |

|

|

ed75dddc35 |

Optimize db_stress setup phase (#9475)

Summary: It is too slow that our `db_crashtest.py` often kills `db_stress` before the setup phase completes. Profiled it and found a few ways to optimize. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9475 Test Plan: Measured setup phase time reduced 22% (36 -> 28 seconds) for first run, and 36% (38 -> 24 seconds) for non-first run on empty-ish DB. - first run benchmark command: `rm -rf /dev/shm/dbstress*/ && mkdir -p /dev/shm/dbstress_expected/ && ./db_stress -max_key=100000000 -destroy_db_initially=1 -expected_values_dir=/dev/shm/dbstress_expected/ -db=/dev/shm/dbstress/ --clear_column_family_one_in=0 --reopen=0 --nooverwritepercent=1` output before this PR: ``` 2022/01/31-11:14:05 Initializing db_stress ... 2022/01/31-11:14:41 Starting database operations ``` output after this PR: ``` ... 2022/01/31-11:12:23 Initializing db_stress ... 2022/01/31-11:12:51 Starting database operations ``` - non-first run benchmark command: `./db_stress -max_key=100000000 -destroy_db_initially=0 -expected_values_dir=/dev/shm/dbstress_expected/ -db=/dev/shm/dbstress/ --clear_column_family_one_in=0 --reopen=0 --nooverwritepercent=1` output before this PR: ``` 2022/01/31-11:20:45 Initializing db_stress ... 2022/01/31-11:21:23 Starting database operations ``` output after this PR: ``` 2022/01/31-11:22:02 Initializing db_stress ... 2022/01/31-11:22:26 Starting database operations ``` - ran minified crash test a while: `DEBUG_LEVEL=0 TEST_TMPDIR=/dev/shm python3 tools/db_crashtest.py blackbox --simple --interval=10 --max_key=1000000 --write_buffer_size=1048576 --target_file_size_base=1048576 --max_bytes_for_level_base=4194304 --value_size_mult=33` Reviewed By: anand1976 Differential Revision: D33897793 Pulled By: ajkr fbshipit-source-id: 0d7b2c93e1e2a9f8a878e87632c2455406313087 |

4 years ago |

|

|

c7ce03dce1 |

db_stress begin tracking expected state after verification (#9470)

Summary: Previously we enabled tracking expected state changes during `FinishInitDb()`, as soon as the DB was opened. This meant tracing was enabled during `VerifyDb()`. This cost extra CPU by requiring `DBImpl::trace_mutex_` to be acquired on each read operation. It was unnecessary since we know there are no expected state changes during the `VerifyDb()` phase. So, this PR delays tracking expected state changes until after the `VerifyDb()` phase has completed. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9470 Test Plan: Measured this PR reduced `VerifyDb()` 76% (387 -> 92 seconds) with `-disable_wal=1` (i.e., expected state tracking enabled). - benchmark command: `./db_stress -max_key=100000000 -ops_per_thread=1 -destroy_db_initially=1 -expected_values_dir=/dev/shm/dbstress_expected/ -db=/dev/shm/dbstress/ --clear_column_family_one_in=0 --disable_wal=1 --reopen=0` - without this PR, `VerifyDb()` takes 387 seconds: ``` 2022/01/30-21:43:04 Initializing worker threads Crash-recovery verification passed :) 2022/01/30-21:49:31 Starting database operations ``` - with this PR, `VerifyDb()` takes 92 seconds ``` 2022/01/30-21:59:06 Initializing worker threads Crash-recovery verification passed :) 2022/01/30-22:00:38 Starting database operations ``` Reviewed By: riversand963 Differential Revision: D33884596 Pulled By: ajkr fbshipit-source-id: 5f259de8087de5b0531f088e11297f37ed2f7685 |

4 years ago |

|

|

f07c56928f |

Set the number of threads up front in db_stress (#9466)

Summary: With the code on main, `RunStressTest` increments the number of threads one by one as the threads are created and started. This results in a data race with `NonBatchedOpsStressTest::VerifyDb`, which reads this value without synchronization, and is also not correct in the sense that `VerifyDb` assumes that the number of threads already has its final value set (e.g. it's checking whether the current thread is the last one). The patch fixes this by setting the number of threads before creating/starting any threads. This also eliminates the need for locking the mutex during thread startup. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9466 Test Plan: Ran the blackbox crash test under TSAN for a while. Reviewed By: ajkr Differential Revision: D33858856 Pulled By: ltamasi fbshipit-source-id: 8a6515a83fd1808b8b8dca61978777c4404f04cc |

4 years ago |

|

|

78aee6fedc |

Remove obsolete backupable_db.h, utility_db.h (#9438)

Summary: This also removes the obsolete names BackupableDBOptions and UtilityDB. API users must now use BackupEngineOptions and DBWithTTL::Open. In C API, `rocksdb_backupable_db_*` is replaced `rocksdb_backup_engine_*`. Similar renaming in Java API. In reference to https://github.com/facebook/rocksdb/issues/9389 Pull Request resolved: https://github.com/facebook/rocksdb/pull/9438 Test Plan: CI Reviewed By: mrambacher Differential Revision: D33780269 Pulled By: pdillinger fbshipit-source-id: 4a6cfc5c1b4c78bcad790b9d3dd13c5fdf4a1fac |

4 years ago |

|

|

1e0e883ca5 |

Remove deprecated API AdvancedColumnFamilyOptions::soft_rate_limit/hard_rate_limit (#9452)

Summary: **Context/Summary:** AdvancedColumnFamilyOptions::soft_rate_limit/hard_rate_limit have been marked as deprecated and it's time to actually remove the code. - Keep `soft_rate_limit`/`hard_rate_limit` in `cf_mutable_options_type_info` to prevent throwing `InvalidArgument` in `GetColumnFamilyOptionsFromMap` when reading an option file still with these options (e.g, old option file generated from RocksDB before the deprecation) - Keep `soft_rate_limit`/`hard_rate_limit` in under `OptionsOldApiTest.GetOptionsFromMapTest` to test the case mentioned above. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9452 Test Plan: Rely on my eyeball and CI Reviewed By: ajkr Differential Revision: D33804938 Pulled By: hx235 fbshipit-source-id: 133d49f7ec5238d7efceeb0a3122a5792a2b9945 |

4 years ago |

|

|

2eac6bb120 |

db_stress: db_stress fails on custom filesystems. (#9352)

Summary: db_stress listener service always uses default filesystem to operate, causing it to not recognize custom filesystem (like ZenFS plugin FS). Pass the env to db_stress listener with the correct filesystem information, so it can open the user intended filesystem. Signed-off-by: Aravind Ramesh <Aravind.Ramesh@wdc.com> Pull Request resolved: https://github.com/facebook/rocksdb/pull/9352 Reviewed By: riversand963 Differential Revision: D33776762 Pulled By: pdillinger fbshipit-source-id: e79bb9a544384f80ae9dd0108241ab9c83223954 |

4 years ago |

|

|

50135c1bf3 |

Move HDFS support to separate repo (#9170)

Summary: This PR moves HDFS support from RocksDB repo to a separate repo. The new (temporary?) repo in this PR serves as an example before we finalize the decision on where and who to host hdfs support. At this point, people can start from the example repo and fork. Java/JNI is not included yet, and needs to be done later if necessary. The goal is to include this commit in RocksDB 7.0 release. Reference: https://github.com/ajkr/dedupfs by ajkr Pull Request resolved: https://github.com/facebook/rocksdb/pull/9170 Test Plan: Follow the instructions in https://github.com/riversand963/rocksdb-hdfs-env/blob/master/README.md. Build and run db_bench and db_stress. make check Reviewed By: ajkr Differential Revision: D33751662 Pulled By: riversand963 fbshipit-source-id: 22b4db7f31762ed417a20239f5a08dcd1696244f |

4 years ago |

|

|

6892f19b11 |

Test correctness with WAL disabled in non-txn blackbox crash tests (#9338)

Summary: Recently we added the ability to verify some prefix of operations are recovered (AKA no "hole" in the recovered data) (https://github.com/facebook/rocksdb/issues/8966). Besides testing unsynced data loss scenarios, it is also useful to test WAL disabled use cases, where unflushed writes are expected to be lost. Note RocksDB only offers the prefix-recovery guarantee to WAL-disabled use cases that use atomic flush, so crash test always enables atomic flush when WAL is disabled. To verify WAL-disabled crash-recovery correctness globally, i.e., also in whitebox and blackbox transaction tests, it is possible but requires further changes. I added TODOs in db_crashtest.py. Depends on https://github.com/facebook/rocksdb/issues/9305. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9338 Test Plan: Running all crash tests and many instances of blackbox. Sandcastle links are in Phabricator diff test plan. Reviewed By: riversand963 Differential Revision: D33345333 Pulled By: ajkr fbshipit-source-id: f56dd7d2e5a78d59301bf4fc3fedb980eb31e0ce |

4 years ago |

|

|

fe31dc53ca |

Make the Env class Customizable (#9293)

Summary: Allows the Env to have options (Configurable) and loads like other Customizable classes. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9293 Reviewed By: pdillinger, zhichao-cao Differential Revision: D33181591 Pulled By: mrambacher fbshipit-source-id: 55e823886c654d214eda9eedd45ccdc54dac14d7 |

4 years ago |

|

|

aa2b3bf675 |

Added `TraceOptions::preserve_write_order` (#9334)

Summary: This option causes trace records to be written in the serialized write thread. That way, the write records in the trace must follow the same order as writes that are logged to WAL and writes that are applied to the DB. By default I left it disabled to match existing behavior. I enabled it in `db_stress`, though, as that use case requires order of write records in trace matches the order in WAL. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9334 Test Plan: - See if below unsynced data loss crash test can run for 24h straight. It used to crash after a few hours when reaching an unlucky trace ordering. ``` DEBUG_LEVEL=0 TEST_TMPDIR=/dev/shm /usr/local/bin/python3 -u tools/db_crashtest.py blackbox --interval=10 --max_key=100000 --write_buffer_size=524288 --target_file_size_base=524288 --max_bytes_for_level_base=2097152 --value_size_mult=33 --sync_fault_injection=1 --test_batches_snapshots=0 --duration=86400 ``` Reviewed By: zhichao-cao Differential Revision: D33301990 Pulled By: ajkr fbshipit-source-id: 82d97559727adb4462a7af69758449c8725b22d3 |

4 years ago |

|

|

2ee20a669d |

Extend trace filtering to more operation types (#9335)

Summary: - Extended trace filtering to cover `MultiGet()`, `Seek()`, and `SeekForPrev()`. Now all user ops that can be traced support filtering. - Enabled the new filter masks in `db_stress` since it only cares to trace writes. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9335 Test Plan: - trace-heavy `db_stress` command reduced 30% elapsed time (79.21 -> 55.47 seconds) Benchmark command: ``` $ /usr/bin/time ./db_stress -ops_per_thread=100000 -sync_fault_injection=1 --db=/dev/shm/rocksdb_stress_db/ --expected_values_dir=/dev/shm/rocksdb_stress_expected/ --clear_column_family_one_in=0 ``` - replay-heavy `db_stress` command reduced 12.4% elapsed time (23.69 -> 20.75 seconds) Setup command: ``` $ ./db_stress -ops_per_thread=100000000 -sync_fault_injection=1 -db=/dev/shm/rocksdb_stress_db/ -expected_values_dir=/dev/shm/rocksdb_stress_expected --clear_column_family_one_in=0 & sleep 120; pkill -9 db_stress ``` Benchmark command: ``` $ /usr/bin/time ./db_stress -ops_per_thread=1 -reopen=0 -expected_values_dir=/dev/shm/rocksdb_stress_expected/ -db=/dev/shm/rocksdb_stress_db/ --clear_column_family_one_in=0 --destroy_db_initially=0 ``` Reviewed By: zhichao-cao Differential Revision: D33304580 Pulled By: ajkr fbshipit-source-id: 0df10f87c1fc506e9484b6b42cea2ef96c7ecd65 |

4 years ago |

|

|

dfff1cecff |

Filter `Get()`s from `db_stress` traces (#9315)

Summary: `db_stress` traces are used for tracking unsynced changes. For that purpose, we only need to track writes and not reads. Currently `TraceOptions` only supports excluding `Get()`s from the trace, so this PR only excludes `Get()`s. In the future it would be good to exclude `MultiGet()`s and iterator operations too. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9315 Test Plan: - trace-heavy `db_stress` command elapsed time reduced 37% Benchmark: ``` TEST_TMPDIR=/dev/shm /usr/bin/time ./db_stress -ops_per_thread=100000 -sync_fault_injection=1 -expected_values_dir=/dev/shm/dbstress_expected --clear_column_family_one_in=0 ``` - replay-heavy `db_stress` command elapsed time reduced 38% Setup: ``` TEST_TMPDIR=/dev/shm /usr/bin/time ./db_stress -ops_per_thread=100000000 -sync_fault_injection=1 -expected_values_dir=/dev/shm/dbstress_expected --clear_column_family_one_in=0 & sleep 120; pkill -9 db_stress ``` Benchmark: ``` TEST_TMPDIR=/dev/shm /usr/bin/time ./db_stress -ops_per_thread=1 -reopen=0 -expected_values_dir=/dev/shm/dbstress_expected --clear_column_family_one_in=0 --destroy_db_initially=0 ``` Reviewed By: zhichao-cao Differential Revision: D33229900 Pulled By: ajkr fbshipit-source-id: 0e4251c674d236ddbc4548e9bbfdd608bf3cdc93 |

4 years ago |

|

|

82670fb17b |

db_stress print hex key for MultiGet() inconsistency (#9324)

Summary: Pull Request resolved: https://github.com/facebook/rocksdb/pull/9324 Reviewed By: riversand963 Differential Revision: D33248178 Pulled By: ajkr fbshipit-source-id: c8a7382ed613f9ac3a0a2e3fa7d3c6fe9c95ef85 |

4 years ago |

|

|

b448b71222 |

`db_stress` tolerate incomplete tail records in trace file (#9316)

Summary: I saw the following error when running crash test for a while with unsynced data loss: ``` Error restoring historical expected values: Corruption: Corrupted trace file. ``` The trace file turned out to have an incomplete tail record. This is normal considering blackbox kills `db_stress` while trace can be ongoing. In the case where the trace file is not otherwise corrupted, there should be enough records already seen to sync up the expected state with the recovered DB. This PR ignores any `Status::Corruption` the `Replayer` returns when that happens. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9316 Reviewed By: jay-zhuang Differential Revision: D33230579 Pulled By: ajkr fbshipit-source-id: 9814af4e39e57f00d85be7404363211762f9b41b |

4 years ago |

|

|

791723c1ec |

Fix race condition in db_stress thread setup (#9314)

Summary:

We need to grab `SharedState`'s mutex while calling `IncThreads()` or `IncBgThreads()`. Otherwise the newly launched threads can simultaneously access the thread counters to check if every thread has finished initializing.

Repro command:

```

$ rm -rf /dev/shm/rocksdb/rocksdb_crashtest_{whitebox,expected}/ && mkdir -p /dev/shm/rocksdb/rocksdb_crashtest_{whitebox,expected}/ && ./db_stress --acquire_snapshot_one_in=10000 --atomic_flush=1 --avoid_flush_during_recovery=0 --avoid_unnecessary_blocking_io=1 --backup_max_size=104857600 --backup_one_in=100000 --batch_protection_bytes_per_key=0 --block_size=16384 --bloom_bits=131.8094496796033 --bottommost_compression_type=zlib --cache_index_and_filter_blocks=1 --cache_size=1048576 --checkpoint_one_in=1000000 --checksum_type=kCRC32c --clear_column_family_one_in=0 --compact_files_one_in=1000000 --compact_range_one_in=1000000 --compaction_style=1 --compaction_ttl=0 --compression_max_dict_buffer_bytes=134217727 --compression_max_dict_bytes=16384 --compression_parallel_threads=1 --compression_type=zstd --compression_zstd_max_train_bytes=65536 --continuous_verification_interval=0 --db=/dev/shm/rocksdb/rocksdb_crashtest_whitebox --db_write_buffer_size=8388608 --delpercent=5 --delrangepercent=0 --destroy_db_initially=0 --disable_wal=1 --enable_compaction_filter=0 --enable_pipelined_write=0 --fail_if_options_file_error=1 --file_checksum_impl=crc32c --flush_one_in=1000000 --format_version=5 --get_current_wal_file_one_in=0 --get_live_files_one_in=1000000 --get_property_one_in=1000000 --get_sorted_wal_files_one_in=0 --index_block_restart_interval=15 --index_type=3 --iterpercent=10 --key_len_percent_dist=1,30,69 --level_compaction_dynamic_level_bytes=True --log2_keys_per_lock=22 --long_running_snapshots=0 --mark_for_compaction_one_file_in=10 --max_background_compactions=20 --max_bytes_for_level_base=10485760 --max_key=1000000 --max_key_len=3 --max_manifest_file_size=1073741824 --max_write_batch_group_size_bytes=1048576 --max_write_buffer_number=3 --max_write_buffer_size_to_maintain=4194304 --memtablerep=skip_list --mmap_read=1 --mock_direct_io=False --nooverwritepercent=1 --open_files=500000 --open_metadata_write_fault_one_in=0 --open_read_fault_one_in=32 --open_write_fault_one_in=0 --ops_per_thread=20000 --optimize_filters_for_memory=1 --paranoid_file_checks=0 --partition_filters=0 --partition_pinning=0 --pause_background_one_in=1000000 --periodic_compaction_seconds=0 --prefixpercent=5 --prepopulate_block_cache=1 --progress_reports=0 --read_fault_one_in=1000 --readpercent=45 --recycle_log_file_num=1 --reopen=0 --ribbon_starting_level=999 --secondary_cache_fault_one_in=32 --snapshot_hold_ops=100000 --sst_file_manager_bytes_per_sec=104857600 --sst_file_manager_bytes_per_truncate=1048576 --subcompactions=2 --sync=0 --sync_fault_injection=False --target_file_size_base=2097152 --target_file_size_multiplier=2 --test_batches_snapshots=1 --test_cf_consistency=1 --top_level_index_pinning=0 --unpartitioned_pinning=0 --use_block_based_filter=1 --use_clock_cache=0 --use_direct_io_for_flush_and_compaction=0 --use_direct_reads=0 --use_full_merge_v1=1 --use_merge=0 --use_multiget=1 --user_timestamp_size=0 --verify_checksum=1 --verify_checksum_one_in=1000000 --verify_db_one_in=100000 --write_buffer_size=1048576 --write_dbid_to_manifest=1 --write_fault_one_in=0 --writepercent=35

```

TSAN error:

```

WARNING: ThreadSanitizer: data race (pid=2750142)

Read of size 4 at 0x7ffc21d7f58c by thread T39 (mutexes: write M670895590377780496):

#0 rocksdb::SharedState::AllInitialized() const db_stress_tool/db_stress_shared_state.h:204 (db_stress+0x4fd307)

https://github.com/facebook/rocksdb/issues/1 rocksdb::ThreadBody(void*) db_stress_tool/db_stress_driver.cc:26 (db_stress+0x4fd307)

https://github.com/facebook/rocksdb/issues/2 StartThreadWrapper env/env_posix.cc:454 (db_stress+0x84472f)

Previous write of size 4 at 0x7ffc21d7f58c by main thread:

#0 rocksdb::SharedState::IncThreads() db_stress_tool/db_stress_shared_state.h:194 (db_stress+0x4fd779)

https://github.com/facebook/rocksdb/issues/1 rocksdb::RunStressTest(rocksdb::StressTest*) db_stress_tool/db_stress_driver.cc:78 (db_stress+0x4fd779)

https://github.com/facebook/rocksdb/issues/2 rocksdb::db_stress_tool(int, char**) db_stress_tool/db_stress_tool.cc:348 (db_stress+0x4b97dc)

https://github.com/facebook/rocksdb/issues/3 main db_stress_tool/db_stress.cc:21 (db_stress+0x47a351)

Location is stack of main thread.

Location is global '<null>' at 0x000000000000 ([stack]+0x00000001d58c)

Mutex M670895590377780496 is already destroyed.

Thread T39 (tid=2750211, running) created by main thread at:

#0 pthread_create /home/engshare/third-party2/gcc/9.x/src/gcc-10.x/libsanitizer/tsan/tsan_interceptors.cc:964 (libtsan.so.0+0x613c3)

https://github.com/facebook/rocksdb/issues/1 StartThread env/env_posix.cc:464 (db_stress+0x8463c2)

https://github.com/facebook/rocksdb/issues/2 rocksdb::CompositeEnvWrapper::StartThread(void (*)(void*), void*) env/composite_env_wrapper.h:288 (db_stress+0x4bcd20)

https://github.com/facebook/rocksdb/issues/3 rocksdb::EnvWrapper::StartThread(void (*)(void*), void*) include/rocksdb/env.h:1475 (db_stress+0x4bb950)

https://github.com/facebook/rocksdb/issues/4 rocksdb::RunStressTest(rocksdb::StressTest*) db_stress_tool/db_stress_driver.cc:80 (db_stress+0x4fd9d2)

https://github.com/facebook/rocksdb/issues/5 rocksdb::db_stress_tool(int, char**) db_stress_tool/db_stress_tool.cc:348 (db_stress+0x4b97dc)

https://github.com/facebook/rocksdb/issues/6 main db_stress_tool/db_stress.cc:21 (db_stress+0x47a351)

ThreadSanitizer: data race db_stress_tool/db_stress_shared_state.h:204 in rocksdb::SharedState::AllInitialized() const

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/9314

Test Plan: verified repro command works after this PR.

Reviewed By: jay-zhuang

Differential Revision: D33217698

Pulled By: ajkr

fbshipit-source-id: 79358fe5adb779fc9dcf80643cc102d4b467fc38

|

4 years ago |

|

|

863c78d2c9 |

Fix unsynced data loss correctness test with mixed `-test_batches_snapshots` (#9302)

Summary: This fixes two bugs in the recently committed DB verification following crash-recovery with unsynced data loss (https://github.com/facebook/rocksdb/issues/8966): The first bug was in crash test runs involving mixed values for `-test_batches_snapshots`. The problem was we were neither restoring expected values nor enabling tracing when `-test_batches_snapshots=1`. This caused a future `-test_batches_snapshots=0` run to not find enough trace data to restore expected values. The fix is to restore expected values at the start of `-test_batches_snapshots=1` runs, but still leave tracing disabled as we do not need to track those KVs. The second bug was in `db_stress` runs that restore the expected values file and use compaction filter. The compaction filter was initialized to use the pre-restore expected values, which would be `munmap()`'d during `FileExpectedStateManager::Restore()`. Then compaction filter would run into a segfault. The fix is just to reorder compaction filter init after expected values restore. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9302 Test Plan: - To verify the first problem, the below sequence used to fail; now it passes. ``` $ ./db_stress --db=./test-db/ --expected_values_dir=./test-db-expected/ --max_key=100000 --ops_per_thread=1000 --sync_fault_injection=1 --clear_column_family_one_in=0 --destroy_db_initially=0 -reopen=0 -test_batches_snapshots=0 $ ./db_stress --db=./test-db/ --expected_values_dir=./test-db-expected/ --max_key=100000 --ops_per_thread=1000 --sync_fault_injection=1 --clear_column_family_one_in=0 --destroy_db_initially=0 -reopen=0 -test_batches_snapshots=1 $ ./db_stress --db=./test-db/ --expected_values_dir=./test-db-expected/ --max_key=100000 --ops_per_thread=1000 --sync_fault_injection=1 --clear_column_family_one_in=0 --destroy_db_initially=0 -reopen=0 -test_batches_snapshots=0 ``` - The second problem occurred rarely in the form of a SIGSEGV on a file that was `munmap()`d. I have not seen it after this PR though this doesn't prove much. Reviewed By: jay-zhuang Differential Revision: D33155283 Pulled By: ajkr fbshipit-source-id: 66fd0f0edf34015a010c30015f14f104734e964e |

4 years ago |

|

|

84228e21e8 |

Fix shutdown in db_stress with `-test_batches_snapshots=1` (#9313)

Summary: The `SharedState` constructor had an early return in case of `-test_batches_snapshots=1`. This early return caused `num_bg_threads_` to never be incremented. Consequently, the driver thread could cleanup objects like the `SharedState` while BG threads were still running and accessing it, leading to crash. The fix is to move the logic for counting threads (both FG and BG) to the place they are launched. That way we can be sure the counts are consistent, at least for now. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9313 Test Plan: below command used to fail, now it passes. ``` $ ./db_stress --db=./test-db/ --expected_values_dir=./test-db-expected/ --max_key=100000 --ops_per_thread=1000 --sync_fault_injection=1 --clear_column_family_one_in=0 --destroy_db_initially=0 -reopen=0 -test_batches_snapshots=1 ``` Reviewed By: jay-zhuang Differential Revision: D33198670 Pulled By: ajkr fbshipit-source-id: 126592dc1eb31998bc8f82ffbf5a0d4eb8dec317 |

4 years ago |

|

|

423538a816 |

Make MemoryAllocator into a Customizable class (#8980)

Summary: - Make MemoryAllocator and its implementations into a Customizable class. - Added a "DefaultMemoryAllocator" which uses new and delete - Added a "CountedMemoryAllocator" that counts the number of allocs and free - Updated the existing tests to use these new allocators - Changed the memkind allocator test into a generic test that can test the various allocators. - Added tests for creating all of the allocators - Added tests to verify/create the JemallocNodumpAllocator using its options. Pull Request resolved: https://github.com/facebook/rocksdb/pull/8980 Reviewed By: zhichao-cao Differential Revision: D32990403 Pulled By: mrambacher fbshipit-source-id: 6fdfe8218c10dd8dfef34344a08201be1fa95c76 |

4 years ago |

|

|

c9818b3325 |

db_stress verify with lost unsynced operations (#8966)

Summary: When a previous run left behind historical state/trace files (implying it was run with --sync_fault_injection set), this PR uses them to restore the expected state according to the DB's recovered sequence number. That way, a tail of latest unsynced operations are permitted to be dropped, as is the case when data in page cache or certain `Env`s is lost. The point of the verification in this scenario is just to ensure there is no hole in the recovered data. Pull Request resolved: https://github.com/facebook/rocksdb/pull/8966 Test Plan: - ran it a while, made sure it is restoring expected values using the historical state/trace files: ``` $ rm -rf ./tmp-db/ ./exp/ && mkdir -p ./tmp-db/ ./exp/ && while ./db_stress -compression_type=none -clear_column_family_one_in=0 -expected_values_dir=./exp -sync_fault_injection=1 -destroy_db_initially=0 -db=./tmp-db -max_key=1000000 -ops_per_thread=10000 -reopen=0 -threads=32 ; do : ; done ``` Reviewed By: pdillinger Differential Revision: D31219445 Pulled By: ajkr fbshipit-source-id: f0e1d51fe5b35465b00565c33331190ea38ba0ad |

4 years ago |

|

|

e05c2bb549 |

Stress test for RocksDB transactions (#8936)

Summary: Current db_stress does not cover complex read-write transactions. Therefore, this PR adds coverage for emulated MyRocks-style transactions in `MultiOpsTxnsStressTest`. To achieve this, we need: - Add a new operation type 'customops' so that we can add new complex groups of operations, e.g. transactions involving multiple read-write operations. - Implement three read-write transactions and two read-only ones to emulate MyRocks-style transactions. Pull Request resolved: https://github.com/facebook/rocksdb/pull/8936 Test Plan: ``` make check ./db_stress -test_multi_ops_txns -use_txn -clear_column_family_one_in=0 -column_families=1 -writepercent=0 -delpercent=0 -delrangepercent=0 -customopspercent=60 -readpercent=20 -prefixpercent=0 -iterpercent=20 -reopen=0 -ops_per_thread=100000 ``` Next step is to add more configurability and refine input generation and result reporting, which will done in separate follow-up PRs. Reviewed By: zhichao-cao Differential Revision: D31071795 Pulled By: riversand963 fbshipit-source-id: 50d7c828346ec643311336b904848a1588a37006 |

4 years ago |

|

|

9e4d56f2c9 |

Fix segmentation fault in table_options.prepopulate_block_cache when used with partition_filters (#9263)

Summary: When table_options.prepopulate_block_cache is set to BlockBasedTableOptions::PrepopulateBlockCache::kFlushOnly and table_options.partition_filters is also set true, then there is segmentation failure when top level filter is fetched because its entered with wrong type in cache. Pull Request resolved: https://github.com/facebook/rocksdb/pull/9263 Test Plan: Updated unit tests; Ran db_stress: make crash_test -j32 Reviewed By: pdillinger Differential Revision: D32936566 Pulled By: akankshamahajan15 fbshipit-source-id: 8bd79e53830d3e3c1bb79787e1ffbc3cb46d4426 |

4 years ago |

|

|

66b31c5098 |

Fix -Werror=maybe-uninitialized in db_stress_tool (#9265)

Summary:

Context/Summary:

Uninitialized variable `SequenceNumber old_saved_seqno` causes asan related compilation error/warning below:

```

db_stress_tool/expected_state.cc:308:55: error: ‘old_saved_seqno’ may be used uninitialized in this function [-Werror=maybe-uninitialized]

308 | if (s.ok() && old_saved_seqno != kMaxSequenceNumber &&

| ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~^~

```

Fix it by initializing to 0.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/9265

Test Plan:

- make clean && COMPILE_WITH_ASAN=1 make -j48 db_stress_tool/expected_state.o

- monitor if same error happens again after merging

Reviewed By: ajkr

Differential Revision: D32939630

Pulled By: hx235

fbshipit-source-id: 41697515fd11ada8427f606b5dceb4e58d12cb80

|

4 years ago |