Summary:

RocksDB regression commands are exiting with an error:

/usr/bin/ar: creating librocksdb.a

/usr/bin/ld: ./cache/cache.o: relocation R_X86_64_32 against `.rodata.str1.1' can not be used when making a shared object; recompile with -fPIC

Bug: The build tries to link object files compiled for the static library (without -fPIC) into the shared lib.

Fix: Added make clean before building shared_lib

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7300

Test Plan:

make clean

make -j$(nproc) static_lib

make -j$(nproc) shared_lib

Reviewed By: pdillinger

Differential Revision: D23276842

Pulled By: akankshamahajan15

fbshipit-source-id: c2e69fa505893ad414786794fc486f3f22f059d5

Summary:

Some hosts failed to build with platform009 and gcc. Revert the default to platform007 when USE_CLANG is not specified.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7253

Test Plan: Build with USE_CLANG=1 set and with it unset; confirm that both builds succeed and check which tool chain is used.

Reviewed By: jay-zhuang

Differential Revision: D23110550

fbshipit-source-id: 25cb47923f7174b24debdad0cc8d90b07c4d5d09

Summary:

Upgrade tool chain to the latest. It is done mostly manually as build_tools/build_detect_platform fails to update many of them.

Try to fix a new clang analyze warning with the new tool chain.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7251

Test Plan: "make all", "USE_CLANG=1 make all"

Reviewed By: riversand963

Differential Revision: D23091090

fbshipit-source-id: 732e5a30137837431438f85f36296406b641f975

Summary:

`USE_LTO=1` in `make` commands now enables LTO. The archiver (`ar`) needed

to change in this PR to use a wrapper that enables the LTO plugin.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7181

Test Plan:

build a few ways

```

$ make clean && USE_LTO=1 make -j48 db_bench

$ make clean && USE_CLANG=1 USE_LTO=1 make -j48 db_bench

$ make clean && ROCKSDB_NO_FBCODE=1 USE_LTO=1 make -j48 db_bench

```

Reviewed By: cheng-chang

Differential Revision: D22784994

Pulled By: ajkr

fbshipit-source-id: 9c45333bd49bf4615aa04c85b7c6fd3925421152

Summary:

Change the linking of tests/tools to be against a library rather than a list of objects. This change substantially reduces the size of the objects produced.

peterd clean repo size: 264M

Before this change, with make all: 40G

After this change, with make all: 28G

With make LIB_MODE=shared all: 7.0G

The list of TESTS was changed from being hard-coded to generated from the test sources variable. Note that there are some test sources that are not built as tests (though the set of tests is identical to the previous version).

Added OBJ_DIR option to Makefile to allow objects to be placed in an alternative location. By default, OBJ_DIR is the same as before ("./").

This change is a precursor to being able to build/run the tests/tools linked against static libraries. Additionally, it should be possible to clean up and merge some of the rules for building tests and the like if so desired.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6660

Reviewed By: riversand963

Differential Revision: D22244463

Pulled By: pdillinger

fbshipit-source-id: db9c6341d81ed62c2270374f4ede02fb9604c754

Summary:

When `PORTABLE=1` is set, RocksDB will now be built with backwards compatibility for macOS as far back as 10.12 (i.e. 2016).

Pull Request resolved: https://github.com/facebook/rocksdb/pull/7016

Reviewed By: ajkr

Differential Revision: D22211312

Pulled By: pdillinger

fbshipit-source-id: 7b0858d9b55d6265d3ea27bf5ea1673639b6538c

Summary:

RocksDB Makefile was assuming existence of 'python' command,

which is not present in CentOS 8. We avoid using 'python' if 'python3' is available.

Also added logic to format-diff.sh so that a clang-format-diff.py written for Python2 works even when only Python3 is installed (as on some CentOS 8 FB machines).

Also, now use just 'python3' for PYTHON if not found so that an informative

"command not found" error will result rather than something weird.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6883

Test Plan: manually tried some variants, 'make check' on a fresh CentOS 8 machine without 'python' executable or Python2 but with clang-format-diff.py for Python2.

Reviewed By: gg814

Differential Revision: D21767029

Pulled By: pdillinger

fbshipit-source-id: 54761b376b140a3922407bdc462f3572f461d0e9

Summary:

* Add missing unit test for schema stability of FileChecksumGenCrc32c

(previously was only comparing to itself)

* A lot of clarifying comments

* Add some assertions for preconditions

* Rename WritableFileWriter::CalculateFileChecksum -> UpdateFileChecksum

* Simplify FileChecksumGenCrc32c with shared functions

* Implement EndianSwapValue to replace unused EndianTransform

And incidentally since I had trouble with 'make check-format' GitHub action disagreeing with local run,

* Output full diagnostic information when 'make check-format' fails in CI
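
As a rough illustration of the byte-swapping item above, a generic endian swap for unsigned integers might look like the following. This is a minimal sketch: the names `EndianSwapValueSketch` and `EndianSwapDemo` and the loop-based implementation are assumptions for illustration, not the code added in this PR.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <type_traits>

// Hypothetical sketch: reverse the byte order of an unsigned integer.
template <typename T>
T EndianSwapValueSketch(T value) {
  static_assert(std::is_unsigned<T>::value,
                "this sketch handles unsigned integers only");
  T result = 0;
  for (std::size_t i = 0; i < sizeof(T); ++i) {
    // Move the lowest remaining byte of `value` into the low end of `result`.
    result = static_cast<T>((result << 8) | (value & 0xff));
    value = static_cast<T>(value >> 8);
  }
  return result;
}

// Small usage demo: swapping twice restores the original value.
inline void EndianSwapDemo() {
  const uint32_t v = 0x01020304u;
  assert(EndianSwapValueSketch(v) == 0x04030201u);
  assert(EndianSwapValueSketch(EndianSwapValueSketch(v)) == v);
}
```

A production version would more likely use compiler byte-swap intrinsics; the loop form is used here only because it is portable and easy to check.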

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6861

Test Plan: new unit test passes before & after other changes

Reviewed By: zhichao-cao

Differential Revision: D21667115

Pulled By: pdillinger

fbshipit-source-id: 6a99970f87605aa024fa540c78cd519ff322c3e6

Summary:

Add a GitHub Action to perform some basic sanity checks for PRs, including the

following.

1) Buck TARGETS file.

On the one hand, the TARGETS file is used for internal buck, and we do not

manually update it. On the other hand, we need to run the buckifier scripts to

update TARGETS whenever new files are added, etc. With this Github Action, we

make sure that every PR does not forget this step. The GH Action uses

a Makefile target called check-buck-targets. Users can manually run `make

check-buck-targets` on local machine.

2) Code format

We use clang-format-diff.py to format our code. The GH Action in this PR makes

sure this step is not skipped. The checking script build_tools/format-diff.sh assumes that `clang-format-diff.py` is executable.

On host running GH Action, it is difficult to download `clang-format-diff.py` and make it

executable. Therefore, we modified build_tools/format-diff.sh to handle the case in which there is a non-executable clang-format-diff.py file in the top-level rocksdb repo directory.

Test Plan (Github and devserver):

Watch for Github Action result in the `Checks` tab.

On dev server

```

make check-format

make check-buck-targets

make check

```

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6761

Test Plan: Watch for Github Action result in the `Checks` tab.

Reviewed By: pdillinger

Differential Revision: D21260209

Pulled By: riversand963

fbshipit-source-id: c646e2f37c6faf9f0614b68aa0efc818cff96787

Summary:

Nasty bug in which more/different changes would be applied than

those shown to user

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6772

Test Plan: manual

Reviewed By: siying

Differential Revision: D21304604

Pulled By: pdillinger

fbshipit-source-id: 7e20740e513c9c300d1522511290a025b35abedc

Summary:

Based on https://github.com/facebook/rocksdb/issues/6648 (CLA Signed), but heavily modified / extended:

* Implicit capture of this via [=] deprecated in C++20, and [=,this] not standard before C++20 -> now using explicit capture lists

* Implicit copy operator deprecated in gcc 9 -> add explicit '= default' definition

* std::random_shuffle deprecated in C++14 and removed in C++17 -> migrated to a replacement in RocksDB random.h API

* Add the ability to build with different std version though -DCMAKE_CXX_STANDARD=11/14/17/20 on the cmake command line

* Minimal rebuild flag of MSVC is deprecated and is forbidden with /std:c++latest (C++20)

* Added MSVC 2019 C++11 & MSVC 2019 C++20 in AppVeyor

* Added GCC 9 C++11 & GCC9 C++20 in Travis
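
Two of the source-level changes listed above can be sketched in isolation. The class `CounterSketch` and the helper `ShuffleSketch` are hypothetical names, and `std::shuffle` stands in for the RocksDB random.h replacement mentioned in the list, which is not reproduced here.

```cpp
#include <algorithm>
#include <cassert>
#include <functional>
#include <random>
#include <vector>

class CounterSketch {
 public:
  explicit CounterSketch(int start) : value_(start) {}

  // Explicit capture list: `this` is named directly instead of relying on
  // the implicit capture through [=], which C++20 deprecates.
  std::function<int(int)> MakeAdder() {
    return [this](int delta) { return value_ += delta; };
  }

 private:
  int value_;
};

// std::random_shuffle is unavailable in newer standards; std::shuffle with an
// explicitly seeded generator is the standard-library alternative used here.
inline void ShuffleSketch(std::vector<int>* v, unsigned seed) {
  assert(v != nullptr);
  std::mt19937 rng(seed);
  std::shuffle(v->begin(), v->end(), rng);
}
```

Naming `this` in the capture list compiles unchanged under C++11 through C++20, which is why it is a convenient choice when one code base must build with several `-DCMAKE_CXX_STANDARD` values.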

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6697

Test Plan: make check and CI

Reviewed By: cheng-chang

Differential Revision: D21020318

Pulled By: pdillinger

fbshipit-source-id: 12311be5dbd8675a0e2c817f7ec50fa11c18ab91

Summary:

Improve it in two ways:

1. tools/check_format_compatible.sh is not friendly to run outside the FB environment. Remove the hard-coded http proxy setting and move it to the Legocastle configuration instead.

2. Always disable warning as error, so that older build is more likely to pass.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6702

Test Plan: Run the test and make sure at least it doesn't break.

Reviewed By: riversand963

Differential Revision: D21033329

fbshipit-source-id: 88b4ec1ec49547b772790050a165466bdc4a62a0

Summary:

This PR implements a fault injection mechanism for injecting errors in reads in db_stress. The FaultInjectionTestFS is used for this purpose. A thread local structure is used to track the errors, so that each db_stress thread can independently enable/disable error injection and verify observed errors against expected errors. This is initially enabled only for Get and MultiGet, but can be extended to iterators as well once it's proven stable.
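
The thread-local tracking idea can be sketched roughly as follows. All names here (`ReadErrorContextSketch`, `EnableReadErrorInjection`, and so on) are hypothetical and are not the FaultInjectionTestFS interface; the sketch also injects deterministically on every N-th read so that the behavior is easy to check, which is an assumption rather than a description of db_stress.

```cpp
#include <cassert>

// Hypothetical per-thread error-injection state.
struct ReadErrorContextSketch {
  bool enabled = false;
  int one_in = 0;           // inject on every one_in-th read while enabled
  int reads_seen = 0;       // reads observed since injection was enabled
  int errors_injected = 0;  // errors this thread should expect to observe
};

// Each thread gets its own context, so enabling injection in one thread
// does not affect reads issued by other threads.
inline ReadErrorContextSketch& ThreadReadErrorContext() {
  thread_local ReadErrorContextSketch ctx;
  return ctx;
}

inline void EnableReadErrorInjection(int one_in) {
  assert(one_in > 0);
  ReadErrorContextSketch& ctx = ThreadReadErrorContext();
  ctx = ReadErrorContextSketch{};  // reset counters for this thread
  ctx.enabled = true;
  ctx.one_in = one_in;
}

inline void DisableReadErrorInjection() {
  ThreadReadErrorContext().enabled = false;
}

// Called from a (hypothetical) read path; true means "fail this read".
inline bool ShouldInjectReadError() {
  ReadErrorContextSketch& ctx = ThreadReadErrorContext();
  if (!ctx.enabled) return false;
  ++ctx.reads_seen;
  if (ctx.reads_seen % ctx.one_in == 0) {
    ++ctx.errors_injected;
    return true;
  }
  return false;
}
```

After a batch of reads, a test thread can compare the number of failures it observed with `errors_injected` in its own context, which mirrors the "verify observed errors against expected errors" step described above.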

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6538

Test Plan:

crash_test

make check

Reviewed By: riversand963

Differential Revision: D20714347

Pulled By: anand1976

fbshipit-source-id: d7598321d4a2d72bda0ced57411a337a91d87dc7

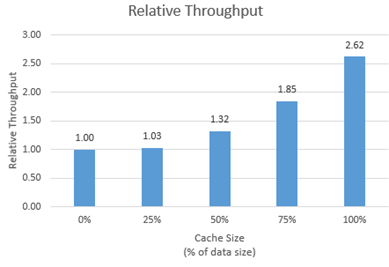

Summary:

New memory technologies are being developed by various hardware vendors (Intel DCPMM is one such technology currently available). These new memory types require different libraries for allocation and management (such as PMDK and memkind). The high capacities available make it possible to provision large caches (up to several TBs in size), beyond what is achievable with DRAM.

The new allocator provided in this PR uses the memkind library to allocate memory on different media.

**Performance**

We tested the new allocator using db_bench.

- For each test, we vary the size of the block cache (relative to the size of the uncompressed data in the database).

- The database is filled sequentially. Throughput is then measured with a readrandom benchmark.

- We use a uniform distribution as a worst-case scenario.

The plot shows throughput (ops/s) relative to a configuration with no block cache and default allocator.

For all tests, p99 latency is below 500 us.

**Changes**

- Add MemkindKmemAllocator

- Add --use_cache_memkind_kmem_allocator db_bench option (to create an LRU block cache with the new allocator)

- Add detection of memkind library with KMEM DAX support

- Add test for MemkindKmemAllocator

**Minimum Requirements**

- kernel 5.3.12

- ndctl v67 - https://github.com/pmem/ndctl

- memkind v1.10.0 - https://github.com/memkind/memkind

**Memory Configuration**

The allocator uses the MEMKIND_DAX_KMEM memory kind. Follow the instructions on [memkind’s GitHub page](https://github.com/memkind/memkind) to set up NVDIMM memory accordingly.

Note on memory allocation with NVDIMM memory exposed as system memory.

- The MemkindKmemAllocator will only allocate from NVDIMM memory (using memkind_malloc with MEMKIND_DAX_KMEM kind).

- The default allocator is not restricted to RAM by default. Based on NUMA node latency, the kernel should allocate from local RAM preferentially, but it’s a kernel decision. numactl --preferred/--membind can be used to allocate preferentially/exclusively from the local RAM node.

**Usage**

When creating an LRU cache, pass a MemkindKmemAllocator object as argument.

For example (replace capacity with the desired value in bytes):

```

#include "rocksdb/cache.h"

#include "memory/memkind_kmem_allocator.h"

NewLRUCache(

capacity /*size_t*/,

6 /*cache_numshardbits*/,

false /*strict_capacity_limit*/,

0.0 /*high_pri_pool_ratio*/,

std::make_shared<MemkindKmemAllocator>());

```

Refer to [RocksDB’s block cache documentation](https://github.com/facebook/rocksdb/wiki/Block-Cache) to assign the LRU cache as block cache for a database.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6214

Reviewed By: cheng-chang

Differential Revision: D19292435

fbshipit-source-id: 7202f47b769e7722b539c86c2ffd669f64d7b4e1

Summary:

In the `.travis.yml` file the `jdk: openjdk7` element is ignored when `language: cpp`. So whatever version of the JDK that was installed in the Travis container was used - typically JDK 11.

To ensure our RocksJava builds are working, we now instead install and use OpenJDK 8. Ideally we would use OpenJDK 7, as RocksJava supports Java 7, but many of the newer Travis containers don't support Java 7, so Java 8 is the next best thing.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6512

Differential Revision: D20388296

Pulled By: pdillinger

fbshipit-source-id: 8bbe6b59b70cfab7fe81ff63867d907fefdd2df1

Summary:

Check for sys/auxv.h and getauxval before using them as they are not

always available (for example on uclibc)

Signed-off-by: Fabrice Fontaine <fontaine.fabrice@gmail.com>

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6359

Differential Revision: D20239797

fbshipit-source-id: 175a098094d81545628c2372e7c388e70a32fd48

Summary:

We found bugs related to IO Uring. Turn it off by default.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6405

Test Plan: Manually run build_tools/build_detect_platform and observe outputs.

Differential Revision: D19862792

fbshipit-source-id: 5d5e8e2762997b72a145ae59389ef3d7e4ccd060

Summary:

I set up a mirror of our Java deps on github so we can download

them through github URLs rather than maven.org, which is proving

terribly unreliable from Travis builds.

Also sanitized calls to curl, so they are easier to read and

appropriately fail on download failure.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6348

Test Plan: CI

Differential Revision: D19633621

Pulled By: pdillinger

fbshipit-source-id: 7eb3f730953db2ead758dc94039c040f406790f3

Summary:

Difficult to root cause crash test failures without archiving

db dir. Now all crash test configurations should save the db dir.

Also exit with error code on bad command.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6344

Test Plan:

Hmm, how about this:

for TARGET in stress_crash asan_crash ubsan_crash tsan_crash; do EMAIL=email ONCALL=oncall TRIGGER=all SUBSCRIBER=sub build_tools/rocksdb-lego-determinator $TARGET > tmp && node -c tmp && grep -q Upload tmp || echo Bad; done

Differential Revision: D19625605

Pulled By: pdillinger

fbshipit-source-id: cb84aa93ee80b4534f4c61b90f0e0f99a41155d5

Summary:

The instructions for installing the "make format" dependencies work on some platforms but are hard to follow on others. Improve them a little bit.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6162

Test Plan: Run "make format" on an environment missing the dependencies and see the instructions printed out

Differential Revision: D18970773

fbshipit-source-id: fd21b31053407cc171a6675f781a556a1c3e8945

Summary:

Right now, PosixRandomAccessFile::MultiRead() executes read requests sequentially. This PR leverages the I/O Uring library to run them in parallel, even when page cache is enabled. This function will fall back if the kernel version doesn't support it.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5881

Test Plan: Run the unit test on a kernel version supporting it and make sure all tests pass, and run a unit test on kernel version supporting it and see it pass. Before merging, will also run stress test and see it passes.

Differential Revision: D17742266

fbshipit-source-id: e05699c925ac04fdb42379456a4e23e4ebcb803a

Summary:

Adds an improved, replacement Bloom filter implementation (FastLocalBloom) for full and partitioned filters in the block-based table. This replacement is faster and more accurate, especially for high bits per key or millions of keys in a single filter.

Speed

The improved speed, at least on recent x86_64, comes from

* Using fastrange instead of modulo (%)

* Using our new hash function (XXH3 preview, added in a previous commit), which is much faster for large keys and only *slightly* slower on keys around 12 bytes if hashing the same size many thousands of times in a row.

* Optimizing the Bloom filter queries with AVX2 SIMD operations. (Added AVX2 to the USE_SSE=1 build.) Careful design was required to support (a) SIMD-optimized queries, (b) compatible non-SIMD code that's simple and efficient, (c) flexible choice of number of probes, and (d) essentially maximized accuracy for a cache-local Bloom filter. Probes are made eight at a time, so any number of probes up to 8 is the same speed, then up to 16, etc.

* Prefetching cache lines when building the filter. Although this optimization could be applied to the old structure as well, it seems to balance out the small added cost of accumulating 64 bit hashes for adding to the filter rather than 32 bit hashes.
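
To make the cache-local idea concrete, here is a deliberately simplified, non-SIMD sketch. It is not the FastLocalBloom implementation from this PR: the class name, the probe sequence (repeated multiplication by an odd constant), and the byte-array layout are all assumptions chosen for brevity. What it does share with the description above is a fastrange-style mapping instead of `%` and the property that every probe for a key stays inside one 64-byte cache line.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical, simplified cache-local Bloom filter over 64-bit hashes.
class CacheLocalBloomSketch {
 public:
  CacheLocalBloomSketch(uint32_t num_lines, int num_probes)
      : num_lines_(num_lines),
        num_probes_(num_probes),
        data_(static_cast<std::size_t>(num_lines) * kLineBytes, 0) {
    assert(num_lines > 0 && num_probes > 0);
  }

  void AddHash(uint64_t h) {
    uint8_t* line = &data_[LineOffset(h)];
    uint32_t p = static_cast<uint32_t>(h);  // low 32 bits drive the probes
    for (int i = 0; i < num_probes_; ++i) {
      const uint32_t bit = p >> 23;  // top 9 bits pick one of 512 bits
      line[bit >> 3] =
          static_cast<uint8_t>(line[bit >> 3] | (1u << (bit & 7)));
      p *= 0x9e3779b9u;  // cheap remix for the next probe (assumption)
    }
  }

  bool MayContainHash(uint64_t h) const {
    const uint8_t* line = &data_[LineOffset(h)];
    uint32_t p = static_cast<uint32_t>(h);
    for (int i = 0; i < num_probes_; ++i) {
      const uint32_t bit = p >> 23;
      if ((line[bit >> 3] & (1u << (bit & 7))) == 0) return false;
      p *= 0x9e3779b9u;
    }
    return true;
  }

 private:
  static constexpr std::size_t kLineBytes = 64;  // one cache line

  // Upper 32 bits select the cache line via multiply-and-shift (no modulo).
  std::size_t LineOffset(uint64_t h) const {
    const uint64_t line = ((h >> 32) * num_lines_) >> 32;
    return static_cast<std::size_t>(line) * kLineBytes;
  }

  uint32_t num_lines_;
  int num_probes_;
  std::vector<uint8_t> data_;
};
```

The early return in `MayContainHash` is the short-circuiting branch discussed in the text; a SIMD version would instead test several probes at once without branching per probe.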

Here's nominal speed data from filter_bench (200MB in filters, about 10k keys each, 10 bits filter data / key, 6 probes, avg key size 24 bytes, includes hashing time) on Skylake DE (relatively low clock speed):

$ ./filter_bench -quick -impl=2 -net_includes_hashing # New Bloom filter

Build avg ns/key: 47.7135

Mixed inside/outside queries...

Single filter net ns/op: 26.2825

Random filter net ns/op: 150.459

Average FP rate %: 0.954651

$ ./filter_bench -quick -impl=0 -net_includes_hashing # Old Bloom filter

Build avg ns/key: 47.2245

Mixed inside/outside queries...

Single filter net ns/op: 63.2978

Random filter net ns/op: 188.038

Average FP rate %: 1.13823

Similar build time but dramatically faster query times on hot data (63 ns to 26 ns), and somewhat faster on stale data (188 ns to 150 ns). Performance differences on batched and skewed query loads are between these extremes as expected.

The only other interesting thing about speed is "inside" (query key was added to filter) vs. "outside" (query key was not added to filter) query times. The non-SIMD implementations are substantially slower when most queries are "outside" vs. "inside". This goes against what one might expect or would have observed years ago, as "outside" queries only need about two probes on average, due to short-circuiting, while "inside" always have num_probes (say 6). The problem is probably the nastily unpredictable branch. The SIMD implementation has few branches (very predictable) and has pretty consistent running time regardless of query outcome.

Accuracy

The generally improved accuracy (re: Issue https://github.com/facebook/rocksdb/issues/5857) comes from a better design for probing indices

within a cache line (re: Issue https://github.com/facebook/rocksdb/issues/4120) and improved accuracy for millions of keys in a single filter from using a 64-bit hash function (XXH3p). Design details in code comments.

Accuracy data (generalizes, except old impl gets worse with millions of keys):

Memory bits per key: FP rate percent old impl -> FP rate percent new impl

6: 5.70953 -> 5.69888

8: 2.45766 -> 2.29709

10: 1.13977 -> 0.959254

12: 0.662498 -> 0.411593

16: 0.353023 -> 0.0873754

24: 0.261552 -> 0.0060971

50: 0.225453 -> ~0.00003 (less than 1 in a million queries are FP)

Fixes https://github.com/facebook/rocksdb/issues/5857

Fixes https://github.com/facebook/rocksdb/issues/4120

Unlike the old implementation, this implementation has a fixed cache line size (64 bytes). At 10 bits per key, the accuracy of this new implementation is very close to the old implementation with 128-byte cache line size. If there's sufficient demand, this implementation could be generalized.

Compatibility

Although old releases would see the new structure as corrupt filter data and read the table as if there's no filter, we've decided only to enable the new Bloom filter with new format_version=5. This provides a smooth path for automatic adoption over time, with an option for early opt-in.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6007

Test Plan: filter_bench has been used thoroughly to validate speed, accuracy, and correctness. Unit tests have been carefully updated to exercise new and old implementations, as well as the logic to select an implementation based on context (format_version).

Differential Revision: D18294749

Pulled By: pdillinger

fbshipit-source-id: d44c9db3696e4d0a17caaec47075b7755c262c5f

Summary:

- Updated our included xxhash implementation to version 0.7.2 (== the latest dev version as of 2019-10-09).

- Using XXH_NAMESPACE (like other fb projects) to avoid potential name collisions.

- Added fastrange64, and unit tests for it and fastrange32. These are faster alternatives to hash % range.

- Use preview version of XXH3 instead of MurmurHash64A for NPHash64

-- Had to update cache_test to increase probability of passing for any given hash function.

- Use fastrange64 instead of % with uses of NPHash64

-- Had to fix WritePreparedTransactionTest.CommitOfDelayedPrepared to avoid deadlock apparently caused by new hash collision.

- Set default seed for NPHash64 because specifying a seed rarely makes sense for it.

- Removed unnecessary include xxhash.h in a popular .h file

- Rename preview version of XXH3 to XXH3p for clarity and to ease backward compatibility in case final version of XXH3 is integrated.

Relying on existing unit tests for NPHash64-related changes. Each new implementation of fastrange64 passed unit tests when manipulating my local build to select it. I haven't done any integration performance tests, but I consider the improved performance of the pieces being swapped in to be well established.
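
For readers unfamiliar with the fastrange technique, the core idea is to replace `hash % range` with a widening multiply followed by a shift. The functions below are a minimal sketch with hypothetical names (`FastRange32Sketch`, `FastRange64Sketch`), not the versions added in this PR; the 64-bit variant assumes a compiler that provides `unsigned __int128` (GCC and Clang do).

```cpp
#include <cassert>
#include <cstdint>

// Maps a 32-bit hash onto [0, range) using a 64-bit multiply and a shift.
inline uint32_t FastRange32Sketch(uint32_t hash, uint32_t range) {
  assert(range > 0);
  return static_cast<uint32_t>((static_cast<uint64_t>(hash) * range) >> 32);
}

#if defined(__SIZEOF_INT128__)
// Same idea for 64-bit hashes; needs a 128-bit intermediate product.
inline uint64_t FastRange64Sketch(uint64_t hash, uint64_t range) {
  assert(range > 0);
  return static_cast<uint64_t>(
      (static_cast<unsigned __int128>(hash) * range) >> 64);
}
#endif
```

Unlike modulo, the result depends mostly on the high bits of the hash, so this mapping assumes a hash function whose upper bits are well mixed.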

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5909

Differential Revision: D18125196

Pulled By: pdillinger

fbshipit-source-id: f6bf83d49d20cbb2549926adf454fd035f0ecc0d

Summary:

Some dependency paths are incorrect, so ASAN cannot run with CLANG. Fix them.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5946

Test Plan: Run ASAN with CLANG

Differential Revision: D18040933

fbshipit-source-id: 1d82be9d350485cf1df1c792dad765188958641f

Summary:

format-diff.sh, a.k.a. 'make format', would use 'master'

to decide which commits are probably unpublished. Much better to use

facebook remote master since local master may not be caught up and may

have its own unpublished commits. Script now tries to compare against

facebook remote master branch (branch pointer is updated with any fetch

or pull), because those differences are what would be considered the

differences for a pull request.

Also, script would compare against *parent* of merge-base with that

reference point, which is just wrong since that includes the last

published commit.

In case of problems, you can now customize the reference point, by

setting the FORMAT_UPSTREAM variable.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5831

Test Plan: manual

Differential Revision: D17528462

Pulled By: pdillinger

fbshipit-source-id: 50fdb8795d683bf3c14d449669c1a5299e0dfa8b

Summary:

Update version of dependencies.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5777

Test Plan: make release

Differential Revision: D17269421

fbshipit-source-id: e76dbe5389e1d7f811739d3bc1e404b482dfce34

Summary:

In preparing to utilize a new Intel instruction extension, I

noticed problems with the existing build script in regard to the

existing utilized extensions, either with USE_SSE or PORTABLE flags.

* PORTABLE=0 was interpreted the same as PORTABLE=1. Now empty and 0

mean the same. (I guess you were not supposed to set PORTABLE= if you

wanted non-portable--except that...)

* The Facebook build script extensions would set PORTABLE=1 even if

it's already set in a make var or environment. Now it does not override

a non-empty setting, so use PORTABLE=0 for fully optimized build,

overriding Facebook environment default.

* Put in an explanation of the USE_SSE flag where it's used by

build_detect_platform, and cleaned up some confusing/redundant

associated logic.

* If USE_SSE was set and expected intrinsics were not available,

build_detect_platform would exit early but build would proceed with

broken, incomplete configuration. Now a warning is issued and the script recovers gracefully.

* If USE_SSE was set and expected intrinsics were not available,

build would still try to use flags like -msse4.2 etc. which could lead

to unexpected compilation failure or binary incompatibility. Now those

flags are not used if the warning is issued.

This should not break or change existing, valid build scripts.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5800

Test Plan: manual case testing

Differential Revision: D17369543

Pulled By: pdillinger

fbshipit-source-id: 4ee244911680ae71144d272c40aceea548e3ce88

Summary:

This ports `folly::DistributedMutex` into RocksDB. The PR includes everything else needed to compile and use DistributedMutex as a component within folly. Most files are unchanged except for some portability stuff and includes.

For now, I've put this under `rocksdb/third-party`, but if there is a better folder to put this under, let me know. I also am not sure how or where to put unit tests for third-party stuff like this. It seems like gtest is included already, but I need to link with it from another third-party folder.

This also includes some other common components from folly

- folly/Optional

- folly/ScopeGuard (In particular `SCOPE_EXIT`)

- folly/synchronization/ParkingLot (A portable futex-like interface)

- folly/synchronization/AtomicNotification (The standard C++ interface for futexes)

- folly/Indestructible (For singletons that don't get destroyed without allocations)

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5642

Differential Revision: D16544439

fbshipit-source-id: 179b98b5dcddc3075926d31a30f92fd064245731

Summary:

Add an extra cleanup step so that db directory can be saved and uploaded.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5554

Reviewed By: yancouto

Differential Revision: D16168844

Pulled By: riversand963

fbshipit-source-id: ec7b2cee5f11c7d388c36531f8b076d648e2fb19

Summary:

This property is needed to run the child jobs on the same host and thus propagate the child job status back to the parent's.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5456

Reviewed By: yancouto

Differential Revision: D15824382

Pulled By: maysamyabandeh

fbshipit-source-id: 42f2efbedaa3a8b399281105f0ce793c1c9a6191

Summary:

Special characters like slashes and parentheses are not supported.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5424

Differential Revision: D15708067

Pulled By: ltamasi

fbshipit-source-id: 90527ec3ee882a0cdd1249c3946f5eff2ff7c115

Summary:

This change adds a Dynamic Library class to the RocksDB Env. Dynamic libraries are populated via the Env::LoadLibrary method.

The addition of dynamic library support allows for a few different features to be developed:

1. The compression code can be changed to use dynamic library support. This would allow RocksDB to determine at run-time what compression packages were installed. This change would eliminate the need to make sure the build-time and run-time environment had the same library set. It would also simplify some of the Java build issues (where it attempts to build and include various packages inside the RocksDB jars).

2. Along with other features (to be provided in a subsequent PR), this change would allow code/configurations to be added to RocksDB at run-time. For example, the build system includes code for building a "rados" environment and adding "Cassandra" features. Instead of these extensions being built into the base RocksDB code, these extensions could be loaded at run-time as required/appropriate, either by configuration or explicitly.

We intend to push out other changes in support of extending RocksDB at run-time via configurations.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5281

Differential Revision: D15447613

Pulled By: riversand963

fbshipit-source-id: 452cd4f54511c0bceee18f6d9d919aae9fd25fef

Summary:

Fix some hdfs-related code so that it can compile and run 'db_stress'

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5122

Differential Revision: D14675495

Pulled By: riversand963

fbshipit-source-id: cac280479efcf5451982558947eac1732e8bc45a

Summary:

The compiler flag `-DROCKSDB_FALLOCATE_PRESENT` was only set when

`fallocate`, `FALLOC_FL_KEEP_SIZE`, and `FALLOC_FL_PUNCH_HOLE` were all

present. However, the last of the three is not really necessary for the

primary `fallocate` use case; furthermore, it was introduced only in later

Linux kernel versions (2.6.38+).

This PR changes the flag `-DROCKSDB_FALLOCATE_PRESENT` to only require

`fallocate` and `FALLOC_FL_KEEP_SIZE` to be present. There is a separate

check for `FALLOC_FL_PUNCH_HOLE` only in the place where it is used.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5023

Differential Revision: D14248487

Pulled By: siying

fbshipit-source-id: a10ed0b902fa755988e957bd2dcec9081ec0502e