// Copyright (c) 2011-present, Facebook, Inc. All rights reserved.
// This source code is licensed under both the GPLv2 (found in the
// COPYING file in the root directory) and Apache 2.0 License
// (found in the LICENSE.Apache file in the root directory).
//
// Copyright (c) 2011 The LevelDB Authors. All rights reserved.
// Use of this source code is governed by a BSD-style license that can be
// found in the LICENSE file. See the AUTHORS file for names of contributors.

#ifdef GFLAGS
#ifdef NUMA
#include <numa.h>
#include <numaif.h>
#endif
#ifndef OS_WIN
#include <unistd.h>
#endif
#include <fcntl.h>
#include <stdio.h>
#include <stdlib.h>
#include <sys/types.h>

db_bench periodically writes QPS to CSV file

Summary:

This is part of an effort to better understand and optimize RocksDB stalls under high load. I added a feature to db_bench to periodically write QPS to a CSV file. That way we can see how our QPS changes over time (especially when the DB is stalled) and can do a better job of evaluating our stall system (i.e. we want the QPS to be as constant as possible, as opposed to having a bunch of stalls).

The nice part about CSV files is that we can easily graph them; there are a bunch of tools available.

Test Plan:

Ran ./db_bench --report_interval_seconds=10 --benchmarks=fillrandom --num=10000000

and observed this in report.csv:

secs_elapsed,interval_qps

10,2725860

20,1980480

30,1863456

40,1454359

50,1460389

Reviewers: sdong, MarkCallaghan, rven, yhchiang

Reviewed By: yhchiang

Subscribers: dhruba, leveldb

Differential Revision: https://reviews.facebook.net/D40047

10 years ago
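The reporting idea in this commit can be sketched in isolation. The following is a minimal illustration, not the db_bench implementation: `IntervalQpsCsv` is a hypothetical helper, and dividing each interval's operation count by the interval length is an assumption about how `interval_qps` is defined. It takes cumulative operation counts sampled once per reporting interval and renders the same two-column CSV layout shown in the test plan.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical helper (not part of db_bench): converts cumulative operation
// counts, sampled once per reporting interval, into CSV rows of
// "secs_elapsed,interval_qps".
std::string IntervalQpsCsv(const std::vector<uint64_t>& cumulative_ops,
                           uint64_t interval_secs) {
  assert(interval_secs > 0);
  std::ostringstream out;
  out << "secs_elapsed,interval_qps\n";
  uint64_t previous = 0;
  for (std::size_t i = 0; i < cumulative_ops.size(); ++i) {
    assert(cumulative_ops[i] >= previous);  // counts must be non-decreasing
    // Operations completed in this interval, divided by its length
    // (integer division; an assumed definition of interval_qps).
    const uint64_t qps = (cumulative_ops[i] - previous) / interval_secs;
    out << (i + 1) * interval_secs << "," << qps << "\n";
    previous = cumulative_ops[i];
  }
  return out.str();
}
```

Feeding the helper one cumulative count per interval yields one CSV row per interval, which is the shape that graphing tools expect; a flat `interval_qps` column would indicate few stalls.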

#include <atomic>
#include <cinttypes>
#include <condition_variable>
#include <cstddef>
#include <memory>
#include <mutex>
#include <thread>
#include <unordered_map>

#include "db/db_impl/db_impl.h"
#include "db/malloc_stats.h"
#include "db/version_set.h"
#include "hdfs/env_hdfs.h"
#include "monitoring/histogram.h"
#include "monitoring/statistics.h"
#include "options/cf_options.h"
#include "port/port.h"
#include "port/stack_trace.h"
#include "rocksdb/cache.h"
#include "rocksdb/db.h"
#include "rocksdb/env.h"
#include "rocksdb/filter_policy.h"
#include "rocksdb/memtablerep.h"
#include "rocksdb/options.h"
#include "rocksdb/perf_context.h"
#include "rocksdb/persistent_cache.h"
#include "rocksdb/rate_limiter.h"
#include "rocksdb/slice.h"
#include "rocksdb/slice_transform.h"
#include "rocksdb/stats_history.h"
#include "rocksdb/utilities/object_registry.h"
#include "rocksdb/utilities/optimistic_transaction_db.h"
#include "rocksdb/utilities/options_util.h"
#include "rocksdb/utilities/sim_cache.h"

Pessimistic Transactions

Summary:

Initial implementation of Pessimistic Transactions. This diff contains the api changes discussed in D38913. This diff is pretty large, so let me know if people would prefer to meet up to discuss it.

MyRocks folks: please take a look at the API in include/rocksdb/utilities/transaction[_db].h and let me know if you have any issues.

Also, you'll notice a couple of TODOs in the implementation of RollbackToSavePoint(). After chatting with Siying, I'm going to send out a separate diff for an alternate implementation of this feature that implements the rollback inside of WriteBatch/WriteBatchWithIndex. We can then decide which route is preferable.

Next, I'm planning on doing some perf testing and then integrating this diff into MongoRocks for further testing.

Test Plan: Unit tests, db_bench parallel testing.

Reviewers: igor, rven, sdong, yhchiang, yoshinorim

Reviewed By: sdong

Subscribers: hermanlee4, maykov, spetrunia, leveldb, dhruba

Differential Revision: https://reviews.facebook.net/D40869

10 years ago

#include "rocksdb/utilities/transaction.h"
#include "rocksdb/utilities/transaction_db.h"
#include "rocksdb/write_batch.h"
#include "test_util/testutil.h"
#include "test_util/transaction_test_util.h"
#include "util/cast_util.h"
#include "util/compression.h"
#include "util/crc32c.h"
#include "util/gflags_compat.h"
#include "util/mutexlock.h"
#include "util/random.h"
#include "util/stderr_logger.h"
#include "util/string_util.h"
#include "util/xxhash.h"
#include "utilities/blob_db/blob_db.h"

Benchmarking for Merge Operator

Summary:

Updated db_bench and utilities/merge_operators.h to allow for dynamic benchmarking of merge operators in db_bench. Added a new test (--benchmarks=mergerandom), which performs a bunch of random Merge() operations over random keys. Also added a "--merge_operator=" flag so that the tester can easily benchmark different merge operators. Currently supports the PutOperator and UInt64Add operator. Support for stringappend or list append may come later.

Test Plan:

1. make db_bench

2. Test the PutOperator (simulating Put) as follows:

./db_bench --benchmarks=fillrandom,readrandom,updaterandom,readrandom,mergerandom,readrandom --merge_operator=put --threads=2

3. Test the UInt64AddOperator (simulating numeric addition) similarly:

./db_bench --value_size=8 --benchmarks=fillrandom,readrandom,updaterandom,readrandom,mergerandom,readrandom --merge_operator=uint64add --threads=2

Reviewers: haobo, dhruba, zshao, MarkCallaghan

Reviewed By: haobo

CC: leveldb

Differential Revision: https://reviews.facebook.net/D11535

11 years ago

#include "utilities/merge_operators.h"
#include "utilities/merge_operators/bytesxor.h"

New API to get all merge operands for a Key (#5604)

Summary:

This is a new API added to db.h for fetching all merge operands associated with a key. The main motivation is to support performance-sensitive use cases where a full online merge is not necessary. Example use cases:

1. Update subset of columns and read subset of columns -

Imagine a SQL Table, a row is encoded as a K/V pair (as it is done in MyRocks). If there are many columns and users only updated one of them, we can use merge operator to reduce write amplification. While users only read one or two columns in the read query, this feature can avoid a full merging of the whole row, and save some CPU.

2. Updating very few attributes in a value which is a JSON-like document -

Updating one attribute can be done efficiently using merge operator, while reading back one attribute can be done more efficiently if we don't need to do a full merge.

----------------------------------------------------------------------------------------------------

API :

Status GetMergeOperands(

const ReadOptions& options, ColumnFamilyHandle* column_family,

const Slice& key, PinnableSlice* merge_operands,

GetMergeOperandsOptions* get_merge_operands_options,

int* number_of_operands)

Example usage :

int size = 100;

int number_of_operands = 0;

std::vector<PinnableSlice> values(size);

GetMergeOperandsOptions merge_operands_info;

merge_operands_info.expected_max_number_of_operands = size;

db_->GetMergeOperands(ReadOptions(), db_->DefaultColumnFamily(), "k1", values.data(), &merge_operands_info, &number_of_operands);

Description :

Returns all the merge operands corresponding to the key. If the number of merge operands in the DB is greater than get_merge_operands_options->expected_max_number_of_operands, no merge operands are returned and the status is Incomplete. Merge operands are returned in the order of insertion.

merge_operands: points to an array of at least get_merge_operands_options->expected_max_number_of_operands elements; the caller is responsible for allocating it. If the status returned is Incomplete, then number_of_operands will contain the total number of merge operands found in the DB for the key.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5604

Test Plan:

Added unit test and perf test in db_bench that can be run using the command:

./db_bench -benchmarks=getmergeoperands --merge_operator=sortlist

Differential Revision: D16657366

Pulled By: vjnadimpalli

fbshipit-source-id: 0faadd752351745224ee12d4ae9ef3cb529951bf

5 years ago

#include "utilities/merge_operators/sortlist.h"
#include "utilities/persistent_cache/block_cache_tier.h"

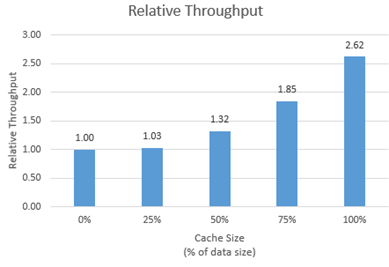

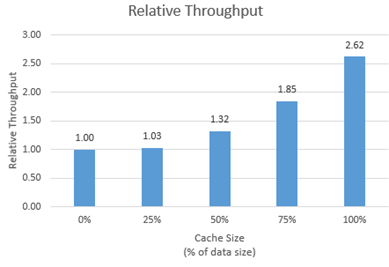

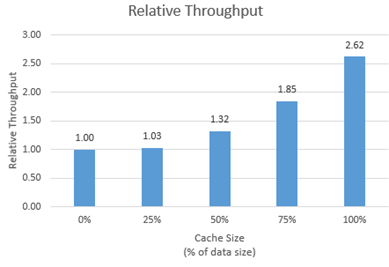

Provide an allocator for new memory type to be used with RocksDB block cache (#6214)

Summary:

New memory technologies are being developed by various hardware vendors (Intel DCPMM is one such technology currently available). These new memory types require different libraries for allocation and management (such as PMDK and memkind). The high capacities available make it possible to provision large caches (up to several TBs in size), beyond what is achievable with DRAM.

The new allocator provided in this PR uses the memkind library to allocate memory on different media.

**Performance**

We tested the new allocator using db_bench.

- For each test, we vary the size of the block cache (relative to the size of the uncompressed data in the database).

- The database is filled sequentially. Throughput is then measured with a readrandom benchmark.

- We use a uniform distribution as a worst-case scenario.

The plot shows throughput (ops/s) relative to a configuration with no block cache and default allocator.

For all tests, p99 latency is below 500 us.

**Changes**

- Add MemkindKmemAllocator

- Add --use_cache_memkind_kmem_allocator db_bench option (to create an LRU block cache with the new allocator)

- Add detection of memkind library with KMEM DAX support

- Add test for MemkindKmemAllocator

**Minimum Requirements**

- kernel 5.3.12

- ndctl v67 - https://github.com/pmem/ndctl

- memkind v1.10.0 - https://github.com/memkind/memkind

**Memory Configuration**

The allocator uses the MEMKIND_DAX_KMEM memory kind. Follow the instructions on [memkind’s GitHub page](https://github.com/memkind/memkind) to set up NVDIMM memory accordingly.

Note on memory allocation with NVDIMM memory exposed as system memory:

- The MemkindKmemAllocator will only allocate from NVDIMM memory (using memkind_malloc with MEMKIND_DAX_KMEM kind).

- The default allocator is not restricted to RAM by default. Based on NUMA node latency, the kernel should allocate from local RAM preferentially, but it’s a kernel decision. numactl --preferred/--membind can be used to allocate preferentially/exclusively from the local RAM node.

**Usage**

When creating an LRU cache, pass a MemkindKmemAllocator object as argument.

For example (replace capacity with the desired value in bytes):

```
#include "rocksdb/cache.h"
#include "memory/memkind_kmem_allocator.h"

NewLRUCache(
    capacity /*size_t*/,
    6 /*cache_numshardbits*/,
    false /*strict_capacity_limit*/,
    0.0 /*cache_high_pri_pool_ratio*/,
    std::make_shared<MemkindKmemAllocator>());
```

Refer to [RocksDB’s block cache documentation](https://github.com/facebook/rocksdb/wiki/Block-Cache) to assign the LRU cache as block cache for a database.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6214

Reviewed By: cheng-chang

Differential Revision: D19292435

fbshipit-source-id: 7202f47b769e7722b539c86c2ffd669f64d7b4e1

5 years ago

#ifdef MEMKIND
#include "memory/memkind_kmem_allocator.h"
#endif

#ifdef OS_WIN
#include <io.h> // open/close
#endif

using GFLAGS_NAMESPACE::ParseCommandLineFlags;
using GFLAGS_NAMESPACE::RegisterFlagValidator;
using GFLAGS_NAMESPACE::SetUsageMessage;

DEFINE_string(
    benchmarks,
    "fillseq,"
    "fillseqdeterministic,"
    "fillsync,"
    "fillrandom,"
    "filluniquerandomdeterministic,"
    "overwrite,"
    "readrandom,"
    "newiterator,"
    "newiteratorwhilewriting,"
    "seekrandom,"
    "seekrandomwhilewriting,"
    "seekrandomwhilemerging,"
    "readseq,"
    "readreverse,"
    "compact,"
    "compactall,"
    "multireadrandom,"

Generate mixed workload with Get, Put, Seek in db_bench (#4788)

Summary:

Based on specific workload models (key access distribution, value size distribution, iterator scan length distribution, and QPS variation), the MixGraph benchmark generates a synthetic workload that follows these distributions and so reflects real-world workload characteristics.

After users enable tracing, they get a trace file. By analyzing the trace file with the trace_analyzer tool, users can generate a set of statistics data files. The *_accessed_key_stats.txt, *-accessed_value_size_distribution.txt, *-iterator_length_distribution.txt, and *-qps_stats.txt files are mainly used for model fitting in MATLAB. After that, users can get the parameters of the workload distributions (the modeling details are described [here](https://github.com/facebook/rocksdb/wiki/RocksDB-Trace%2C-Replay%2C-and-Analyzer)).

The key access distribution follows the two-term power model. The probability density function is `f(x) = ax^{b}+c`. The corresponding parameters are key_dist_a, key_dist_b, and key_dist_c in db_bench.

The value size distribution and the iterator scan length distribution both follow the Generalized Pareto Distribution. The probability density function is `f(x) = (1/sigma)*(1+k*(x-theta)/sigma)^{-1-1/k}`. The parameters are value_k, value_theta, value_sigma and iter_k, iter_theta, iter_sigma. For more information about the Generalized Pareto Distribution, see the [wiki](https://en.wikipedia.org/wiki/Generalized_Pareto_distribution) and the [MATLAB page](https://www.mathworks.com/help/stats/generalized-pareto-distribution.html).

The QPS follows a diurnal pattern, so a sine function is a good model to fit it: `F(x) = sine_a*sin(sine_b*x + sine_c) + sine_d`. The trace_analyzer will tell you the average QPS in its printed results, which is sine_d. After fitting "*-qps_stats.txt" to the MATLAB model, users can get sine_a, sine_b, and sine_c. With these 4 parameters, users can control the QPS variation, including its period, average, and amplitude.

To use the benchmark, users can specify parameters such as the following:

```

-benchmarks="mixgraph" -key_dist_a=0.002312 -key_dist_b=0.3467 -value_k=0.9233 -value_sigma=226.4092 -iter_k=2.517 -iter_sigma=14.236 -mix_get_ratio=0.7 -mix_put_ratio=0.25 -mix_seek_ratio=0.05 -sine_mix_rate_interval_milliseconds=500 -sine_a=15000 -sine_b=1 -sine_d=20000

```
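To make the two closed-form models above concrete, here is a small sketch that only evaluates them. It is not how db_bench samples from these distributions; the function names `GeneralizedParetoPdf` and `SineQps` are hypothetical, and the support check in the density (returning 0 when `x < theta` or the base is non-positive) is a simplifying assumption.

```cpp
#include <cassert>
#include <cmath>

// Generalized Pareto probability density, as given in the text:
//   f(x) = (1/sigma) * (1 + k*(x - theta)/sigma)^(-1 - 1/k)
// Assumes k != 0 and sigma > 0; returns 0 outside the support.
double GeneralizedParetoPdf(double x, double k, double sigma, double theta) {
  assert(k != 0.0 && sigma > 0.0);
  const double base = 1.0 + k * (x - theta) / sigma;
  if (x < theta || base <= 0.0) {
    return 0.0;
  }
  return (1.0 / sigma) * std::pow(base, -1.0 - 1.0 / k);
}

// Target QPS at time x under the sine model:
//   F(x) = sine_a * sin(sine_b * x + sine_c) + sine_d
double SineQps(double x, double sine_a, double sine_b, double sine_c,
               double sine_d) {
  return sine_a * std::sin(sine_b * x + sine_c) + sine_d;
}
```

With the example flags above (`-sine_a=15000 -sine_b=1 -sine_d=20000`, and taking sine_c as 0 for illustration), `SineQps` oscillates around an average of 20000 with an amplitude of 15000.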

Pull Request resolved: https://github.com/facebook/rocksdb/pull/4788

Differential Revision: D13573940

Pulled By: sagar0

fbshipit-source-id: e184c27e07b4f1bc0b436c2be36c5090c1fb0222

6 years ago

    "mixgraph,"
    "readseq,"
    "readtorowcache,"
    "readtocache,"
    "readreverse,"
    "readwhilewriting,"
    "readwhilemerging,"
    "readwhilescanning,"
    "readrandomwriterandom,"
    "updaterandom,"
    "xorupdaterandom,"

For ApproximateSizes, pro-rate table metadata size over data blocks (#6784)

Summary:

The implementation of GetApproximateSizes was inconsistent in its treatment of the size of non-data blocks of SST files, sometimes including and sometimes not. This was at its worst with a large portion of the table file used by filters and querying a small range that crossed a table boundary: the size estimate would include the large filter size.

It's conceivable that someone might want only to know the size in terms of data blocks, but I believe that's unlikely enough to ignore for now. Similarly, there's no evidence the internal function ApproximateOffsetOf is used for anything other than a one-sided ApproximateSize, so I intend to refactor to remove redundancy in a follow-up commit.

So to fix this, GetApproximateSizes (and implementation details ApproximateSize and ApproximateOffsetOf) now consistently include in their returned sizes a portion of table file metadata (incl filters and indexes) based on the size portion of the data blocks in range. In other words, if a key range covers data blocks that are X% by size of all the table's data blocks, returned approximate size is X% of the total file size. It would technically be more accurate to attribute metadata based on number of keys, but that's not computationally efficient with data available and rarely a meaningful difference.
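The pro-rating rule can be illustrated with a few lines of arithmetic. This is only a sketch of the rule as stated in this summary, not the code in the table reader; `ProRatedApproximateSize` and its parameter names are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helper illustrating the pro-rating rule: if the data blocks
// in range are X% (by size) of all data blocks in the table, report X% of
// the total file size, so metadata is attributed proportionally.
uint64_t ProRatedApproximateSize(uint64_t data_bytes_in_range,
                                 uint64_t total_data_bytes,
                                 uint64_t total_file_bytes) {
  assert(data_bytes_in_range <= total_data_bytes);
  if (total_data_bytes == 0) {
    return 0;  // no data blocks, so nothing to attribute
  }
  // Use floating point to avoid overflow in the intermediate product.
  const double fraction = static_cast<double>(data_bytes_in_range) /
                          static_cast<double>(total_data_bytes);
  return static_cast<uint64_t>(fraction *
                               static_cast<double>(total_file_bytes));
}
```

For example, a range covering 250 of 1000 data-block bytes in a 2000-byte file would be reported as a quarter of the file, regardless of how much of the file is filters or indexes.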

Also includes miscellaneous comment improvements / clarifications.

Also included is a new approximatesizerandom benchmark for db_bench.

No significant performance difference seen with this change, whether ~700 ops/sec with cache_index_and_filter_blocks and small cache or ~150k ops/sec without cache_index_and_filter_blocks.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6784

Test Plan:

Test added to DBTest.ApproximateSizesFilesWithErrorMargin.

Old code running new test...

[ RUN ] DBTest.ApproximateSizesFilesWithErrorMargin

db/db_test.cc:1562: Failure

Expected: (size) <= (11 * 100), actual: 9478 vs 1100

Other tests updated to reflect consistent accounting of metadata.

Reviewed By: siying

Differential Revision: D21334706

Pulled By: pdillinger

fbshipit-source-id: 6f86870e45213334fedbe9c73b4ebb1d8d611185

5 years ago

    "approximatesizerandom,"
    "randomwithverify,"
    "fill100K,"
    "crc32c,"
    "xxhash,"
    "compress,"
    "uncompress,"
    "acquireload,"
    "fillseekseq,"
    "randomtransaction,"
    "randomreplacekeys,"

    "timeseries,"
    "getmergeoperands",

    "Comma-separated list of operations to run in the specified"
    " order. Available benchmarks:\n"
    "\tfillseq -- write N values in sequential key"
    " order in async mode\n"
    "\tfillseqdeterministic -- write N values in the specified"
    " key order and keep the shape of the LSM tree\n"
    "\tfillrandom -- write N values in random key order in async"
    " mode\n"
    "\tfilluniquerandomdeterministic -- write N values in a random"
    " key order and keep the shape of the LSM tree\n"
    "\toverwrite -- overwrite N values in random key order in"
    " async mode\n"
    "\tfillsync -- write N/1000 values in random key order in "
    "sync mode\n"
    "\tfill100K -- write N/1000 100K values in random order in"
    " async mode\n"
    "\tdeleteseq -- delete N keys in sequential order\n"
    "\tdeleterandom -- delete N keys in random order\n"
    "\treadseq -- read N times sequentially\n"
    "\treadtocache -- 1 thread reading database sequentially\n"
    "\treadreverse -- read N times in reverse order\n"
    "\treadrandom -- read N times in random order\n"
    "\treadmissing -- read N missing keys in random order\n"
    "\treadwhilewriting -- 1 writer, N threads doing random "
    "reads\n"
    "\treadwhilemerging -- 1 merger, N threads doing random "
    "reads\n"
    "\treadwhilescanning -- 1 thread doing full table scan, "
    "N threads doing random reads\n"
    "\treadrandomwriterandom -- N threads doing random-read, "
    "random-write\n"
    "\tupdaterandom -- N threads doing read-modify-write for random "
    "keys\n"

    "\txorupdaterandom -- N threads doing read-XOR-write for "
    "random keys\n"
    "\tappendrandom -- N threads doing read-modify-write with "
    "growing values\n"
    "\tmergerandom -- same as updaterandom/appendrandom using merge"
    " operator. "
    "Must be used with merge_operator\n"
    "\treadrandommergerandom -- perform N random read-or-merge "
    "operations. Must be used with merge_operator\n"
    "\tnewiterator -- repeated iterator creation\n"
    "\tseekrandom -- N random seeks, call Next seek_nexts times "
    "per seek\n"
    "\tseekrandomwhilewriting -- seekrandom and 1 thread doing "
    "overwrite\n"
    "\tseekrandomwhilemerging -- seekrandom and 1 thread doing "
    "merge\n"
    "\tcrc32c -- repeated crc32c of 4K of data\n"
    "\txxhash -- repeated xxHash of 4K of data\n"
    "\tacquireload -- load N*1000 times\n"
    "\tfillseekseq -- write N values in sequential key, then read "
    "them by seeking to each key\n"
    "\trandomtransaction -- execute N random transactions and "
    "verify correctness\n"
    "\trandomreplacekeys -- randomly replaces N keys by deleting "
    "the old version and putting the new version\n\n"
    "\ttimeseries -- 1 writer generates time series data "
    "and multiple readers doing random reads on id\n\n"
    "Meta operations:\n"
    "\tcompact -- Compact the entire DB; If multiple, randomly choose one\n"
    "\tcompactall -- Compact the entire DB\n"
    "\tstats -- Print DB stats\n"
    "\tresetstats -- Reset DB stats\n"
    "\tlevelstats -- Print the number of files and bytes per level\n"
    "\tsstables -- Print sstable info\n"
    "\theapprofile -- Dump a heap profile (if supported by this port)\n"

    "\treplay -- replay the trace file specified with trace_file\n"
    "\tgetmergeoperands -- Insert lots of merge records which are a list of "
    "sorted ints for a key and then compare performance of lookup for another "
    "key "
    "by doing a Get followed by binary searching in the large sorted list vs "
    "doing a GetMergeOperands and binary searching in the operands which are "
    "sorted sub-lists. The MergeOperator used is sortlist.h\n");

DEFINE_int64(num, 1000000, "Number of key/values to place in database");

DEFINE_int64(numdistinct, 1000,
             "Number of distinct keys to use. Used in RandomWithVerify to "
             "read/write on fewer keys so that gets are more likely to find the"
             " key and puts are more likely to update the same key");

DEFINE_int64(merge_keys, -1,
             "Number of distinct keys to use for MergeRandom and "
             "ReadRandomMergeRandom. "
             "If negative, there will be FLAGS_num keys.");
DEFINE_int32(num_column_families, 1, "Number of Column Families to use.");

DEFINE_int32(
    num_hot_column_families, 0,
    "Number of Hot Column Families. If more than 0, only write to this "
    "number of column families. After finishing all the writes to them, "
    "create new set of column families and insert to them. Only used "
    "when num_column_families > 1.");

DEFINE_string(column_family_distribution, "",
              "Comma-separated list of percentages, where the ith element "
              "indicates the probability of an op using the ith column family. "
              "The number of elements must be `num_hot_column_families` if "
              "specified; otherwise, it must be `num_column_families`. The "
              "sum of elements must be 100. E.g., if `num_column_families=4`, "
              "and `num_hot_column_families=0`, a valid list could be "
              "\"10,20,30,40\".");

|
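
The two rules stated for `column_family_distribution` (element count and a sum of 100) can be checked with a few lines of parsing. The following is a minimal sketch, not the validation code used by db_bench; `ParseColumnFamilyDistribution` is a hypothetical helper, and rejecting individual values outside 0..100 is an extra assumption.

```cpp
#include <cstddef>
#include <cstdlib>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical helper (not part of db_bench): parses a comma-separated list
// of integer percentages. Returns true and fills `out` only if the list has
// exactly `expected_count` elements and they sum to 100.
bool ParseColumnFamilyDistribution(const std::string& flag_value,
                                   std::size_t expected_count,
                                   std::vector<int>* out) {
  std::vector<int> percentages;
  std::stringstream stream(flag_value);
  std::string item;
  int sum = 0;
  while (std::getline(stream, item, ',')) {
    if (item.empty()) return false;  // e.g. "10,,90"
    char* end = nullptr;
    const long value = std::strtol(item.c_str(), &end, 10);
    // Reject trailing garbage and values outside 0..100 (an assumption).
    if (*end != '\0' || value < 0 || value > 100) return false;
    percentages.push_back(static_cast<int>(value));
    sum += static_cast<int>(value);
  }
  if (percentages.size() != expected_count || sum != 100) return false;
  *out = percentages;
  return true;
}
```

Here `expected_count` would be `num_hot_column_families` when that flag is set, and `num_column_families` otherwise, as the flag description requires.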

DEFINE_int64(reads, -1, "Number of read operations to do. "
             "If negative, do FLAGS_num reads.");

DEFINE_int64(deletes, -1, "Number of delete operations to do. "
             "If negative, do FLAGS_num deletions.");

DEFINE_int32(bloom_locality, 0, "Control bloom filter probes locality");

DEFINE_int64(seed, 0, "Seed base for random number generators. "
             "When 0 it is deterministic.");

DEFINE_int32(threads, 1, "Number of concurrent threads to run.");

DEFINE_int32(duration, 0, "Time in seconds for the random-ops tests to run."
             " When 0 then num & reads determine the test duration");

DEFINE_string(value_size_distribution_type, "fixed",
              "Value size distribution type: fixed, uniform, normal");

DEFINE_int32(value_size, 100, "Size of each value in fixed distribution");
static unsigned int value_size = 100;

DEFINE_int32(value_size_min, 100, "Min size of random value");

DEFINE_int32(value_size_max, 102400, "Max size of random value");

DEFINE_int32(seek_nexts, 0,
             "How many times to call Next() after Seek() in "
             "fillseekseq, seekrandom, seekrandomwhilewriting and "
             "seekrandomwhilemerging");

SkipListRep::LookaheadIterator

Summary:

This diff introduces the `lookahead` argument to `SkipListFactory()`. This is an

optimization for the tailing use case which includes many seeks. E.g. consider

the following operations on a skip list iterator:

Seek(x), Next(), Next(), Seek(x+2), Next(), Seek(x+3), Next(), Next(), ...

If `lookahead` is positive, `SkipListRep` will return an iterator which also

keeps track of the previously visited node. Seek() then first does a linear

search starting from that node (up to `lookahead` steps). As in the tailing

example above, this may require fewer than ~log(n) comparisons as with regular

skip list search.
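
The lookahead idea can be sketched without a real skip list. In the sketch below, a sorted vector of distinct keys stands in for the skip list and `std::lower_bound` stands in for the regular ~log(n) search; `LookaheadCursor` and its members are hypothetical names, not the `SkipListRep::LookaheadIterator` implementation.

```cpp
#include <algorithm>
#include <cstddef>
#include <utility>
#include <vector>

// Illustrative stand-in: remembers the previously visited position and tries
// a short linear scan from there before falling back to a full search.
// Assumes the keys are sorted and distinct.
class LookaheadCursor {
 public:
  LookaheadCursor(std::vector<int> sorted_keys, std::size_t lookahead)
      : keys_(std::move(sorted_keys)),
        lookahead_(lookahead),
        pos_(keys_.size()) {}

  // Positions the cursor at the first key >= target. Returns true if the
  // linear lookahead found it, false if the fallback search was needed.
  bool Seek(int target) {
    if (lookahead_ > 0 && pos_ < keys_.size() && keys_[pos_] <= target) {
      std::size_t p = pos_;
      // Look at the current entry and at most `lookahead_` entries after it.
      for (std::size_t step = 0; step <= lookahead_ && p < keys_.size();
           ++step, ++p) {
        if (keys_[p] >= target) {
          pos_ = p;
          return true;
        }
      }
    }
    // Fallback: full search over all keys.
    pos_ = static_cast<std::size_t>(
        std::lower_bound(keys_.begin(), keys_.end(), target) - keys_.begin());
    return false;
  }

  void Next() {
    if (pos_ < keys_.size()) ++pos_;
  }
  bool Valid() const { return pos_ < keys_.size(); }
  int key() const { return keys_[pos_]; }

 private:
  std::vector<int> keys_;
  std::size_t lookahead_;
  std::size_t pos_;  // keys_.size() means "not positioned"
};
```

With a tailing pattern such as Seek(x), Next(), Seek(x+2), most seeks land within a couple of entries of the previous position, so the linear scan succeeds and the full search is skipped.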

Test Plan:

Added a new benchmark (`fillseekseq`) which simulates the usage pattern. It

first writes N records (with consecutive keys), then measures how much time it

takes to read them by calling `Seek()` and `Next()`.

$ time ./db_bench -num 10000000 -benchmarks fillseekseq -prefix_size 1 \

-key_size 8 -write_buffer_size $[1024*1024*1024] -value_size 50 \

-seekseq_next 2 -skip_list_lookahead=0

[...]

DB path: [/dev/shm/rocksdbtest/dbbench]

fillseekseq : 0.389 micros/op 2569047 ops/sec;

real 0m21.806s

user 0m12.106s

sys 0m9.672s

$ time ./db_bench [...] -skip_list_lookahead=2

[...]

DB path: [/dev/shm/rocksdbtest/dbbench]

fillseekseq : 0.153 micros/op 6540684 ops/sec;

real 0m19.469s

user 0m10.192s

sys 0m9.252s

Reviewers: ljin, sdong, igor

Reviewed By: igor

Subscribers: dhruba, leveldb, march, lovro

Differential Revision: https://reviews.facebook.net/D23997

10 years ago

DEFINE_bool(reverse_iterator, false,
            "When true use Prev rather than Next for iterators that do "
            "Seek and then Next");

DEFINE_int64(max_scan_distance, 0,
             "Used to define iterate_upper_bound (or iterate_lower_bound "
             "if FLAGS_reverse_iterator is set to true) when value is nonzero");

DEFINE_bool(use_uint64_comparator, false, "use Uint64 user comparator");

DEFINE_int64(batch_size, 1, "Batch size");

static bool ValidateKeySize(const char* /*flagname*/, int32_t /*value*/) {
  return true;
}

static bool ValidateUint32Range(const char* flagname, uint64_t value) {
  if (value > std::numeric_limits<uint32_t>::max()) {
    fprintf(stderr, "Invalid value for --%s: %lu, overflow\n", flagname,
            (unsigned long)value);
    return false;
  }
  return true;
}

Fixing race condition in DBTest.DynamicMemtableOptions

Summary:

This patch fixes a race condition in DBTest.DynamicMemtableOptions. In rare cases,

it was possible that the main thread would fill up both memtables before the flush

job acquired its work. Then, the flush job was flushing both memtables together,

producing only one L0 file while the test expected two. Now, the test waits for

flushes to finish earlier, to make sure that the memtables are flushed in separate

flush jobs.

Test Plan:

Insert "usleep(10000);" after "IOSTATS_SET_THREAD_POOL_ID(Env::Priority::HIGH);" in BGWorkFlush()

to make the issue more likely. Then test with:

make db_test && time while ./db_test --gtest_filter=*DynamicMemtableOptions; do true; done

Reviewers: rven, sdong, yhchiang, anthony, igor

Reviewed By: igor

Subscribers: dhruba, leveldb

Differential Revision: https://reviews.facebook.net/D45429

9 years ago

DEFINE_int32(key_size, 16, "size of each key");

DEFINE_int32(num_multi_db, 0,
             "Number of DBs used in the benchmark. 0 means single DB.");

DEFINE_double(compression_ratio, 0.5, "Arrange to generate values that shrink"
              " to this fraction of their original size after compression");

DEFINE_double(read_random_exp_range, 0.0,
              "Read random's key will be generated using distribution of "
              "num * exp(-r) where r is uniform number from 0 to this value. "
              "The larger the number is, the more skewed the reads are. "
              "Only used in readrandom and multireadrandom benchmarks.");
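
The `num * exp(-r)` formula from the `read_random_exp_range` description can be written out as a small function. This is a simplified sketch of the formula only, not the key generator used by db_bench: `SkewedKeyIndex` is a hypothetical name, the uniform draw is passed in as `u` in [0, 1] so the result is deterministic, and treating a range of 0 as plain uniform selection is an assumption.

```cpp
#include <cmath>
#include <cstdint>

// Maps a uniform draw `u` in [0, 1] to a key index in [0, num).
// With exp_range == 0 the index is uniform; otherwise r = u * exp_range is
// uniform in [0, exp_range] and the index is num * exp(-r).
int64_t SkewedKeyIndex(int64_t num, double exp_range, double u) {
  double scaled;
  if (exp_range == 0.0) {
    scaled = u * static_cast<double>(num);
  } else {
    const double r = u * exp_range;
    scaled = static_cast<double>(num) * std::exp(-r);
  }
  int64_t index = static_cast<int64_t>(scaled);
  if (index >= num) index = num - 1;  // clamp the r == 0 (or u == 1) edge
  return index;
}
```

With `num = 1000` and a range of 10, roughly half of all draws (those with `u > 0.5`, i.e. `r > 5`) map to indices below 7, since `1000 * exp(-5)` is about 6.7; this is the skew the flag description refers to.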

DEFINE_bool(histogram, false, "Print histogram of operation timings");

DEFINE_bool(enable_numa, false,
            "Make operations aware of NUMA architecture and bind memory "
            "and cpus corresponding to nodes together. In NUMA, memory "
            "in same node as CPUs are closer when compared to memory in "
            "other nodes. Reads can be faster when the process is bound to "
            "CPU and memory of same node. Use \"$numactl --hardware\" command "
            "to see NUMA memory architecture.");

DEFINE_int64(db_write_buffer_size,
             ROCKSDB_NAMESPACE::Options().db_write_buffer_size,
             "Number of bytes to buffer in all memtables before compacting");

DEFINE_bool(cost_write_buffer_to_cache, false,
            "The usage of memtable is costed to the block cache");

DEFINE_int64(write_buffer_size, ROCKSDB_NAMESPACE::Options().write_buffer_size,
             "Number of bytes to buffer in memtable before compacting");

DEFINE_int32(max_write_buffer_number,
             ROCKSDB_NAMESPACE::Options().max_write_buffer_number,
             "The number of in-memory memtables. Each memtable is of size"
             " write_buffer_size bytes.");

DEFINE_int32(min_write_buffer_number_to_merge,
             ROCKSDB_NAMESPACE::Options().min_write_buffer_number_to_merge,
             "The minimum number of write buffers that will be merged together "
             "before writing to storage. This is cheap because it is an "
             "in-memory merge. If this feature is not enabled, then all these "
             "write buffers are flushed to L0 as separate files and this "
             "increases read amplification because a get request has to check"
             " in all of these files. Also, an in-memory merge may result in"
             " writing less data to storage if there are duplicate records"
             " in each of these individual write buffers.");

Support saving history in memtable_list

Summary:

For transactions, we are using the memtables to validate that there are no write conflicts. But after flushing, we don't have any memtables, and transactions could fail to commit. So we want to somehow keep around some extra history to use for conflict checking. In addition, we want to provide a way to increase the size of this history if too many transactions fail to commit.

After chatting with people, it seems like everyone prefers just using Memtables to store this history (instead of a separate history structure). It seems like the best place for this is abstracted inside the memtable_list. I decided to create a separate list in MemtableListVersion as using the same list complicated the flush/installalflushresults logic too much.

This diff adds a new parameter to control how much memtable history to keep around after flushing. However, it sounds like people aren't too fond of adding new parameters. So I am making the default size of flushed+not-flushed memtables be set to max_write_buffers. This should not change the maximum amount of memory used, but make it more likely we're using closer to the limit. (We are now postponing deleting flushed memtables until the max_write_buffer limit is reached). So while we might use more memory on average, we are still obeying the limit set (and you could argue it's better to go ahead and use up memory now instead of waiting for a write stall to happen to test this limit).

However, if people are opposed to this default behavior, we can easily set it to 0 and require this parameter be set in order to use transactions.

Test Plan: Added a xfunc test to play around with setting different values of this parameter in all tests. Added testing in memtablelist_test and planning on adding more testing here.

Reviewers: sdong, rven, igor

Reviewed By: igor

Subscribers: dhruba, leveldb

Differential Revision: https://reviews.facebook.net/D37443

10 years ago

DEFINE_int32(max_write_buffer_number_to_maintain,
             ROCKSDB_NAMESPACE::Options().max_write_buffer_number_to_maintain,
             "The total maximum number of write buffers to maintain in memory "
             "including copies of buffers that have already been flushed. "
             "Unlike max_write_buffer_number, this parameter does not affect "
             "flushing. This controls the minimum amount of write history "
             "that will be available in memory for conflict checking when "
             "Transactions are used. If this value is too low, some "
             "transactions may fail at commit time due to not being able to "
             "determine whether there were any write conflicts. Setting this "
             "value to 0 will cause write buffers to be freed immediately "
             "after they are flushed. If this value is set to -1, "
             "'max_write_buffer_number' will be used.");

Refactor trimming logic for immutable memtables (#5022)

Summary:

MyRocks currently sets `max_write_buffer_number_to_maintain` in order to maintain enough history for transaction conflict checking. The effectiveness of this approach depends on the size of memtables. When memtables are small, it may not keep enough history; when memtables are large, this may consume too much memory.

We are proposing a new way to configure memtable list history: by limiting the memory usage of immutable memtables. The new option is `max_write_buffer_size_to_maintain` and it will take precedence over the old `max_write_buffer_number_to_maintain` if they are both set to non-zero values. The new option accounts for the total memory usage of flushed immutable memtables and mutable memtable. When the total usage exceeds the limit, RocksDB may start dropping immutable memtables (which is also called trimming history), starting from the oldest one.

The semantics of the old option actually work as both an upper bound and a lower bound. History trimming will start if the number of immutable memtables exceeds the limit, but the number will never go below (limit-1) due to history trimming.

In order to mimic this behavior with the new option, history trimming will stop if dropping the next immutable memtable would cause the total memory usage to go below the size limit. For example, assume the size limit is set to 64MB and there are 3 immutable memtables with sizes of 20MB, 30MB, and 30MB. Although the total memory usage is 80MB > 64MB, dropping the oldest memtable would reduce the memory usage to 60MB < 64MB, so in this case no memtable will be dropped.
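
The stopping rule in the example above can be expressed as a short loop. The following is a sketch of the rule as described, not the RocksDB implementation: `TrimHistory` is a hypothetical function, sizes are plain numbers in MB ordered oldest first, and the mutable memtable that the description also counts toward the total is left out for simplicity.

```cpp
#include <cstddef>
#include <deque>

// Drops the oldest entries while doing so keeps the total at or above
// `limit`. Stops as soon as dropping the next entry would take the total
// below the limit. Returns the number of entries dropped.
std::size_t TrimHistory(std::deque<std::size_t>* memtable_sizes,
                        std::size_t limit) {
  std::size_t total = 0;
  for (std::size_t size : *memtable_sizes) total += size;

  std::size_t dropped = 0;
  while (!memtable_sizes->empty() &&
         total >= limit + memtable_sizes->front()) {
    total -= memtable_sizes->front();
    memtable_sizes->pop_front();  // oldest memtable goes first
    ++dropped;
  }
  return dropped;
}
```

For the sizes 20, 30, 30 and a limit of 64, the loop drops nothing, because 80 - 20 = 60 is below the limit. With a fourth 30MB memtable the total is 110, so the oldest one is dropped (leaving 90) and trimming then stops, since dropping another would leave 60.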

Pull Request resolved: https://github.com/facebook/rocksdb/pull/5022

Differential Revision: D14394062

Pulled By: miasantreble

fbshipit-source-id: 60457a509c6af89d0993f988c9b5c2aa9e45f5c5

5 years ago

DEFINE_int64(max_write_buffer_size_to_maintain,
             ROCKSDB_NAMESPACE::Options().max_write_buffer_size_to_maintain,
             "The total maximum size of write buffers to maintain in memory "
             "including copies of buffers that have already been flushed. "
             "Unlike max_write_buffer_number, this parameter does not affect "
             "flushing. This controls the minimum amount of write history "
             "that will be available in memory for conflict checking when "
             "Transactions are used. If this value is too low, some "
             "transactions may fail at commit time due to not being able to "
             "determine whether there were any write conflicts. Setting this "
             "value to 0 will cause write buffers to be freed immediately "
             "after they are flushed. If this value is set to -1, "
             "'max_write_buffer_number' will be used.");

DEFINE_int32(max_background_jobs,
             ROCKSDB_NAMESPACE::Options().max_background_jobs,
             "The maximum number of concurrent background jobs that can occur "
             "in parallel.");

DEFINE_int32(num_bottom_pri_threads, 0,
             "The number of threads in the bottom-priority thread pool (used "
             "by universal compaction only).");

DEFINE_int32(num_high_pri_threads, 0,
             "The number of threads in the high-priority thread pool.");

DEFINE_int32(num_low_pri_threads, 0,
             "The number of threads in the low-priority thread pool.");

DEFINE_int32(max_background_compactions,
             ROCKSDB_NAMESPACE::Options().max_background_compactions,
             "The maximum number of concurrent background compactions"
             " that can occur in parallel.");

DEFINE_int32(base_background_compactions, -1, "DEPRECATED");

DEFINE_uint64(subcompactions, 1,
              "Maximum number of subcompactions to divide L0-L1 compactions "
              "into.");
static const bool FLAGS_subcompactions_dummy
    __attribute__((__unused__)) = RegisterFlagValidator(&FLAGS_subcompactions,
                                                    &ValidateUint32Range);

DEFINE_int32(max_background_flushes,
             ROCKSDB_NAMESPACE::Options().max_background_flushes,
             "The maximum number of concurrent background flushes"
             " that can occur in parallel.");

static ROCKSDB_NAMESPACE::CompactionStyle FLAGS_compaction_style_e;
DEFINE_int32(compaction_style,
             (int32_t)ROCKSDB_NAMESPACE::Options().compaction_style,
             "style of compaction: level-based, universal and fifo");

static ROCKSDB_NAMESPACE::CompactionPri FLAGS_compaction_pri_e;
DEFINE_int32(compaction_pri,
             (int32_t)ROCKSDB_NAMESPACE::Options().compaction_pri,
             "priority of files to compaction: by size or by data age");

DEFINE_int32(universal_size_ratio, 0,
             "Percentage flexibility while comparing file size"
             " (for universal compaction only).");

DEFINE_int32(universal_min_merge_width, 0, "The minimum number of files in a"
             " single compaction run (for universal compaction only).");

DEFINE_int32(universal_max_merge_width, 0, "The max number of files to compact"
             " in universal style compaction");

DEFINE_int32(universal_max_size_amplification_percent, 0,
             "The max size amplification for universal style compaction");

DEFINE_int32(universal_compression_size_percent, -1,
             "The percentage of the database to compress for universal "
             "compaction. -1 means compress everything.");

DEFINE_bool(universal_allow_trivial_move, false,
            "Allow trivial move in universal compaction.");

DEFINE_int64(cache_size, 8 << 20,  // 8MB
             "Number of bytes to use as a cache of uncompressed data");

DEFINE_int32(cache_numshardbits, 6,
             "Number of shards for the block cache"
             " is 2 ** cache_numshardbits. Negative means use default settings."
             " This is applied only if FLAGS_cache_size is non-negative.");

DEFINE_double(cache_high_pri_pool_ratio, 0.0,
              "Ratio of block cache reserve for high pri blocks. "
              "If > 0.0, we also enable "
              "cache_index_and_filter_blocks_with_high_priority.");

DEFINE_bool(use_clock_cache, false,
            "Replace default LRU block cache with clock cache.");

DEFINE_int64(simcache_size, -1,
             "Number of bytes to use as a simcache of "
             "uncompressed data. Negative value disables simcache.");

DEFINE_bool(cache_index_and_filter_blocks, false,
            "Cache index/filter blocks in block cache.");

Provide an allocator for new memory type to be used with RocksDB block cache (#6214)

Summary:

New memory technologies are being developed by various hardware vendors (Intel DCPMM is one such technology currently available). These new memory types require different libraries for allocation and management (such as PMDK and memkind). The high capacities available make it possible to provision large caches (up to several TBs in size), beyond what is achievable with DRAM.

The new allocator provided in this PR uses the memkind library to allocate memory on different media.

**Performance**

We tested the new allocator using db_bench.

- For each test, we vary the size of the block cache (relative to the size of the uncompressed data in the database).

- The database is filled sequentially. Throughput is then measured with a readrandom benchmark.

- We use a uniform distribution as a worst-case scenario.

The plot shows throughput (ops/s) relative to a configuration with no block cache and default allocator.

For all tests, p99 latency is below 500 us.

**Changes**

- Add MemkindKmemAllocator

- Add --use_cache_memkind_kmem_allocator db_bench option (to create an LRU block cache with the new allocator)

- Add detection of memkind library with KMEM DAX support

- Add test for MemkindKmemAllocator

**Minimum Requirements**

- kernel 5.3.12

- ndctl v67 - https://github.com/pmem/ndctl

- memkind v1.10.0 - https://github.com/memkind/memkind

**Memory Configuration**

The allocator uses the MEMKIND_DAX_KMEM memory kind. Follow the instructions on [memkind’s GitHub page](https://github.com/memkind/memkind) to set up NVDIMM memory accordingly.

Note on memory allocation with NVDIMM memory exposed as system memory.

- The MemkindKmemAllocator will only allocate from NVDIMM memory (using memkind_malloc with MEMKIND_DAX_KMEM kind).

- The default allocator is not restricted to RAM by default. Based on NUMA node latency, the kernel should allocate from local RAM preferentially, but it’s a kernel decision. numactl --preferred/--membind can be used to allocate preferentially/exclusively from the local RAM node.

**Usage**

When creating an LRU cache, pass a MemkindKmemAllocator object as argument.

For example (replace capacity with the desired value in bytes):

```

#include "rocksdb/cache.h"

#include "memory/memkind_kmem_allocator.h"

NewLRUCache(

capacity /*size_t*/,

6 /*cache_numshardbits*/,

false /*strict_capacity_limit*/,

0.0 /*cache_high_pri_pool_ratio*/,

std::make_shared<MemkindKmemAllocator>());

```

Refer to [RocksDB’s block cache documentation](https://github.com/facebook/rocksdb/wiki/Block-Cache) to assign the LRU cache as block cache for a database.

Pull Request resolved: https://github.com/facebook/rocksdb/pull/6214

Reviewed By: cheng-chang

Differential Revision: D19292435

fbshipit-source-id: 7202f47b769e7722b539c86c2ffd669f64d7b4e1

5 years ago

DEFINE_bool(use_cache_memkind_kmem_allocator, false,
            "Use memkind kmem allocator for block cache.");

DEFINE_bool(partition_index_and_filters, false,
            "Partition index and filter blocks.");

DEFINE_bool(partition_index, false, "Partition index blocks");

DEFINE_bool(index_with_first_key, false, "Include first key in the index");

Minimize memory internal fragmentation for Bloom filters (#6427)

Summary:

New experimental option BBTO::optimize_filters_for_memory builds

filters that maximize their use of "usable size" from malloc_usable_size,

which is also used to compute block cache charges.

Rather than always "rounding up," we track state in the

BloomFilterPolicy object to mix essentially "rounding down" and

"rounding up" so that the average FP rate of all generated filters is

the same as without the option. (YMMV as heavily accessed filters might

be unluckily lower accuracy.)

Thus, the option near-minimizes what the block cache considers as

"memory used" for a given target Bloom filter false positive rate and

Bloom filter implementation. There are no forward or backward

compatibility issues with this change, though it only works on the

format_version=5 Bloom filter.

With Jemalloc, we see about 10% reduction in memory footprint (and block

cache charge) for Bloom filters, but 1-2% increase in storage footprint,

due to encoding efficiency losses (FP rate is non-linear with bits/key).

Why not weighted random round up/down rather than state tracking? By